IT organizations are under increasing pressure to help the business innovate while controlling, and often reducing, costs. Legacy modernization is a real opportunity to achieve both goals. To do so, the organization needs to take full advantage of advances in platform and software technology, while leveraging the investment that has already been made in the business processes within the legacy environment. To choose a specific modernization roadmap well, decision makers need a good understanding of what the modernization options are and how to get there.

Overview of the Modernization Options

There are five primary approaches to legacy modernization:

- Re-architecting to a new environment

- SOA integration and enablement

- Re-platforming through re-hosting and automated migration

- Replacement with COTS solutions

- Data modernization

Other organizations may use different names for each type of modernization, but any option generally fits into one of these five categories. The options are not mutually exclusive: each can be carried out alone or in concert with the others, and a large modernization project often applies different approaches to different parts of the larger initiative. The right mix of approaches is determined by the business needs driving the modernization, the organization's risk tolerance and time constraints, and the nature of the source environment and legacy applications. Where the applications no longer meet business needs and require significant changes, re-architecture might be the best way forward. On the other hand, for very large applications that mostly meet the business needs, SOA enablement or re-platforming might be lower-risk options.

You will notice that the first thing we talk about in this section—the Legacy Understanding phase—isn't listed as one of the modernization options. It is mentioned at this stage because it is a critical step that is done as a precursor to any option your organization chooses.

Legacy Understanding

Once we have identified our business drivers and the first steps in this process, we must understand what we have before we go ahead and modernize it. Legacy environments are very complex and quite often have little or no current documentation. This introduces a concept of analysis and discovery that is valuable for any modernization technique.

Application Portfolio Analysis (APA)

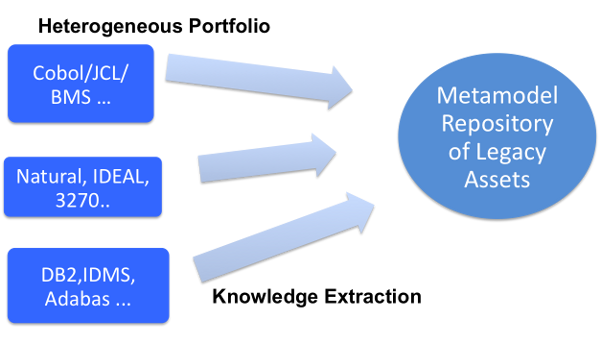

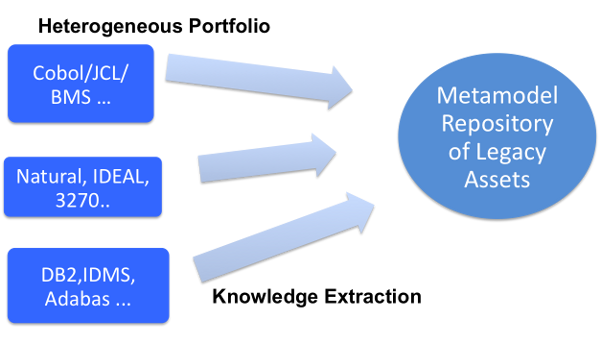

Before using any modernization approach, an organization must first carry out an APA of the current applications and their environment. This process has many names. You may hear terms such as Legacy Understanding, Application Re-learn, or Portfolio Understanding. All these activities provide a clear view of the current state of the computing environment, and equip the organization with the information it needs to identify the best areas for modernization. For example, this process can reveal process flows, data flows, how screens interact with transactions and programs, and program complexity and maintainability metrics, and it can even generate pseudocode to re-document candidate business rules. Additionally, the physical repositories that are created as a result of the analysis can be used in the next stages of modernization, be it in SOA enablement, re-architecture, or re-platforming. Efforts are currently underway by the Object Management Group (OMG) to create a standard method to exchange this data between applications. The following screenshot shows the Legacy Portfolio Analysis:

APA Macroanalysis

The first form of APA is a very high-level, abstract view of the application environment. This level of analysis looks at each application in the context of the overall IT organization, and systems information is collected at a very high level. The key here is to understand which applications exist, how they interact, and what business value each function delivers. With this type of analysis, organizations can manage overall modernization strategies and identify key applications that are good candidates for SOA integration, re-architecture, or re-platforming versus a replacement with Commercial Off-the-Shelf (COTS) applications. Data structures, program code, and technical characteristics are not analyzed here.

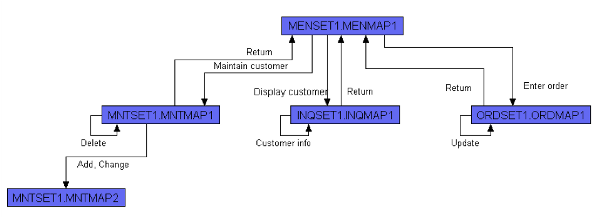

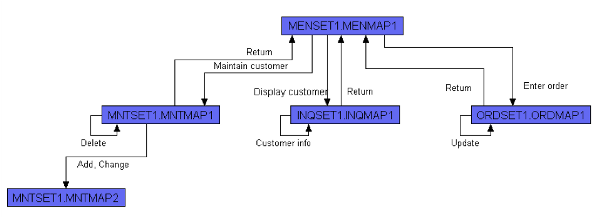

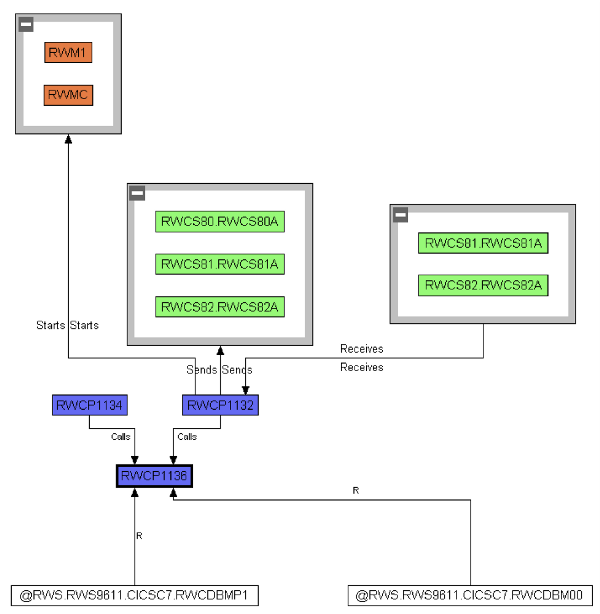

The following macro-level process flow diagram was automatically generated by the Relativity Technologies Modernization Workbench tool. With it, the user can automatically get a view of the screen flows within a COBOL application. This helps to identify candidate areas for modernization and areas of complexity, and supports knowledge transfer and legacy system documentation. The key thing about these types of reports is that they are dynamic and automatically generated.

The previous flow diagram illustrates some interesting points about the system that can be understood quickly by the analyst. Remember, this type of diagram is generated automatically, and can provide instant insight into the system with no prior knowledge. For example, we now have some basic information such as:

- MENSAT1.MENMAP1 is the main driver and is most likely a menu program.

- There are four called programs.

- Two programs have database interfaces.

This is a simplistic view, but imagine hundreds of programs presented visually in the same way: we can quickly identify clusters of complexity, define potential subsystems, and do much more, all from an automated tool with visual navigation and powerful cross-referencing capabilities. This type of tool can also help to re-document existing legacy assets.
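The kind of summary listed above can be derived from a simple call inventory. The following is a minimal sketch, not the output or API of any particular tool: the `CALLS` and `USES_DATABASE` structures, and every program name other than MENSAT1.MENMAP1, are hypothetical stand-ins for what an analysis repository might export.

```python
# Hypothetical inventory. Only MENSAT1.MENMAP1 comes from the example above;
# every other program name and both data structures are invented.
CALLS = {
    "MENSAT1.MENMAP1": ["CUSTINQ", "CUSTUPD", "ORDLIST", "HELPSCR"],
    "CUSTINQ": [],
    "CUSTUPD": [],
    "ORDLIST": [],
    "HELPSCR": [],
}
USES_DATABASE = {"CUSTINQ", "CUSTUPD"}


def find_drivers(calls):
    """Return programs that no other program calls (likely entry points)."""
    called = {callee for callees in calls.values() for callee in callees}
    return sorted(name for name in calls if name not in called)


def called_programs(calls, driver):
    """Return every program reachable from the driver, excluding the driver."""
    seen, stack = set(), list(calls.get(driver, []))
    while stack:
        name = stack.pop()
        if name not in seen:
            seen.add(name)
            stack.extend(calls.get(name, []))
    seen.discard(driver)
    return sorted(seen)


def summarize(calls, uses_database):
    """Build the three facts an analyst reads off the flow diagram."""
    driver = find_drivers(calls)[0]
    reachable = called_programs(calls, driver)
    return {
        "driver": driver,
        "called_programs": len(reachable),
        "database_programs": sorted(set(reachable) & uses_database),
    }
```

Calling `summarize(CALLS, USES_DATABASE)` on this sample reports one driver, four called programs, and two programs with database interfaces. With hundreds of programs, the same traversal could be used to look for clusters with unusually high fan-out.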

APA Microanalysis

The second type of portfolio analysis is APA microanalysis. This examines applications at the program level. This level of analysis can be used to understand things like program logic or candidate business rules for enablement, or business rule transformation. This process will also reveal things such as code complexity, data exchange schemas, and specific interaction within a screen flow. These are all critical when considering SOA integration, re-architecture, or a re-platforming project.

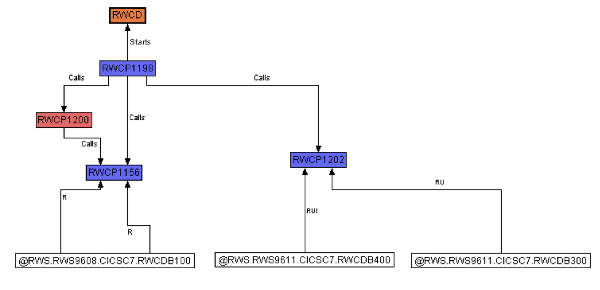

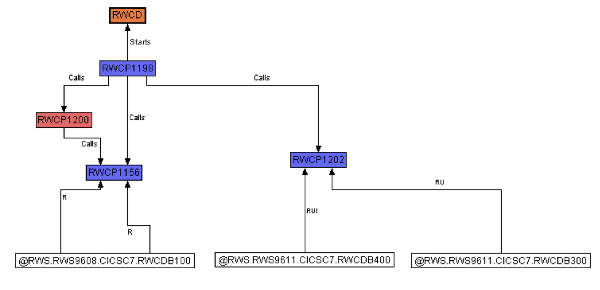

The following are more models generated by the Relativity Technologies Modernization Workbench tool. The first is a COBOL transaction taken from a COBOL process. We are able to take a low-level view of a business rule slice taken from a COBOL program, and understand how this process flows. The particulars of this flow map diagram are not important; what matters is that the model can be generated automatically and is dynamic, reflecting the current state of the code.

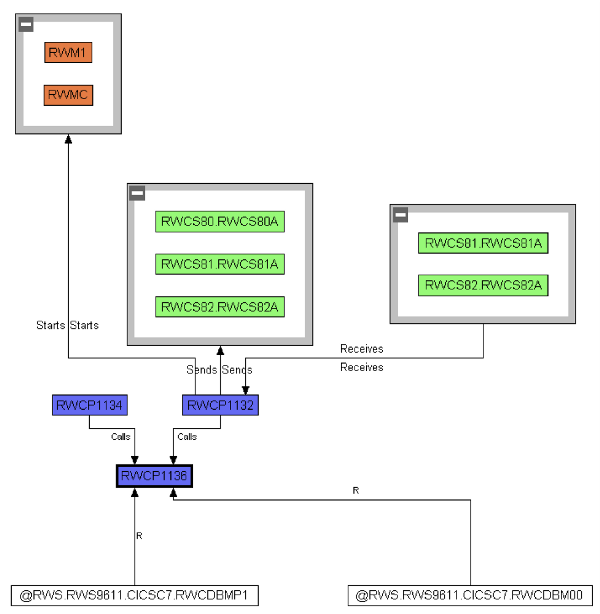

The second model shows how a COBOL program interacts with a screen conversation. In this example, we are able to look at specific paragraphs within a particular program. We can identify specific CICS transactions and understand which paragraphs (or subroutines) are interacting with the database. The models can be used to further refine our drive toward a re-architected system, helping us to identify business rules and populate a rules engine.

This is another example of a COBOL program in which the screens it interacts with are shown in gray and the paragraphs that execute CICS transactions are shown in white. With these color-coded boxes, we can quickly identify paragraphs, screens, databases, and CICS transactions.
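To make the idea of classifying paragraphs concrete, here is a rough sketch that tags each paragraph of a COBOL fragment by whether it contains `EXEC CICS` or `EXEC SQL` statements. It is a deliberately naive text scan and not how a commercial workbench works: the sample source, the paragraph names, and the assumption that a paragraph header is a single name followed by a period on its own line are all illustrative.

```python
import re

# Assumption: a paragraph header is a lone name followed by a period.
# A real parser must also handle one-word statements such as GOBACK.
PARAGRAPH_HEADER = re.compile(r"^\s*([A-Z0-9][A-Z0-9-]*)\.\s*$")

# Invented sample; MENMAP1 is borrowed from the earlier screen-flow example.
SAMPLE = """\
       MAIN-MENU.
           EXEC CICS SEND MAP('MENMAP1') END-EXEC.
           PERFORM READ-CUSTOMER.
       READ-CUSTOMER.
           EXEC SQL SELECT NAME INTO :WS-NAME FROM CUSTOMER END-EXEC.
       COMPUTE-TOTAL.
           ADD WS-AMOUNT TO WS-TOTAL.
"""


def classify_paragraphs(source):
    """Map each paragraph name to the set of interaction tags found in it."""
    tags = {}
    current = None
    for line in source.splitlines():
        header = PARAGRAPH_HEADER.match(line)
        if header:
            current = header.group(1)
            tags[current] = set()
            continue
        if current is None:
            continue  # ignore anything before the first paragraph
        text = line.upper()
        if "EXEC CICS" in text:
            tags[current].add("cics")
        if "EXEC SQL" in text:
            tags[current].add("database")
    return tags
```

Paragraphs that end up with an empty tag set, such as `COMPUTE-TOTAL` in the sample, contain neither screen nor database interaction, which makes them natural places to start looking for candidate business rules.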

Application Portfolio Management (APM)

APA is only one part of an IT approach known as Application Portfolio Management. While APA is critical for any modernization project, APM provides guideposts on how to combine the APA results, a business assessment of the applications' strategic value and future needs, and IT infrastructure directions to come up with a long-term application portfolio strategy and the technology targets that support it. It is often said that you cannot modernize that which you do not know. With APM, you can effectively manage change within an organization, understand the impact of change, and also manage its compliance.

APM is a constant process, be it part of a modernization project or an organization's portfolio management and change control strategy. All applications are in a constant state of change. During any modernization, things are always in a state of flux. In a modernization project, legacy code is changed, new development is done (often in parallel), and data schemas are changed. When looking into APM tool offerings, consider products that can provide facilities to capture these kinds of changes in information and provide an active repository, rather than a static view. Ideally, these tools must adhere to emerging technical standards, like those being pioneered by the OMG.

Re-Architecting

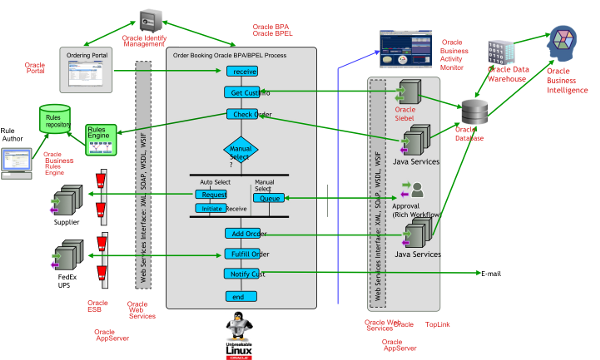

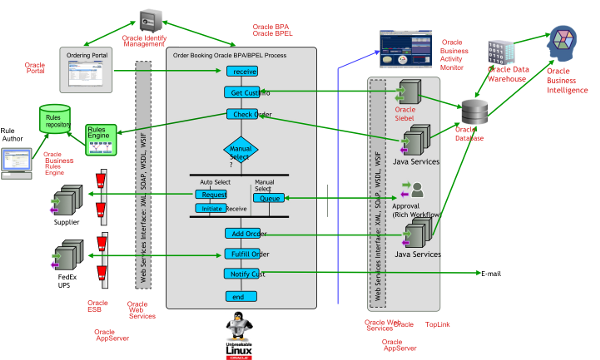

Re-architecting is based on the concept that all legacy applications contain invaluable business logic and data relevant to the business, and that these assets should be leveraged in the new system rather than thrown out in order to rebuild from scratch. Since the modern IT environment elevates a lot of this logic above the code, using declarative models supported by BPM tools, ESBs, business rules engines, and data integration and access solutions, some of the original technical code can be replaced by these middleware tools to achieve greater agility. The following screenshot shows an example of a system after re-architecture.

The previous example shows what a system would look like, from a higher level, after re-architecture. We see that this isn't a simple one-to-one transformation of one code base into another. It is also much more than remediation and refactoring of the legacy code into standard Java code. It is a system that fully leverages technologies suited to the required task, for example, Identity Management for security, business rules for core business logic, and BPEL for process flow.

Thus, re-architecting focuses on recovering and reassembling the process relevant to business from a legacy application, while eliminating the technology-specific code. Here, we want to capture the value of the business process that is independent of the legacy code base, and move it into a different paradigm. Re-architecting is typically used to handle modernizations that involve changes in architecture, such as the introduction of object orientation and process-driven services.

The advantage that re-architecting has over greenfield development is that re-architecting recognizes that there is information in the application code and surrounding artifacts (for example, DDLs, COPYBOOKS, and user training manuals) that is useful as a source for the re-architecting process, such as application process interaction, data models, and workflow. Re-architecting will usually go outside the source code of the legacy application to incorporate concepts like workflow and new functionality that were never part of the legacy application. However, it also recognizes that the legacy application contains key business rules and processes that need to be harvested and brought forward.

Some of the important considerations for maximizing re-use by extracting business rules from legacy applications as part of a re-architecture project include:

- Eliminate dead code and environmental specifics, and resolve mutually exclusive logic.

- Identify key input/output data (parameters, screen input, DB and file records, and so on).

- Keep in mind that many rules live outside of the code (for example, a screen flow described in a training manual).

- Populate a data dictionary specific to application/industry context.

- Identify and tag rules based on transaction types and key data, policy parameters, key results (output data).

- Isolate rules into tracking repository.

- Combine automation and human review to track relationships, eliminate redundancies, classify and consolidate, add annotation.
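As one way to picture the tagging and tracking steps in the list above, here is a minimal sketch of a rule-tracking repository. The `CandidateRule` fields, the `RuleRepository` class, and the sample rule text are all hypothetical; a real project would use whatever schema its mining tool or rules engine provides.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class CandidateRule:
    """A rule harvested from legacy code or documents (hypothetical schema)."""
    rule_id: str
    source: str                 # program name or document the rule came from
    transaction_type: str       # tag used to group rules
    inputs: frozenset           # key input data items
    outputs: frozenset          # key result data items
    logic: str                  # extracted rule text or pseudocode
    notes: list = field(default_factory=list)  # human review annotations


class RuleRepository:
    """In-memory tracking repository for candidate rules."""

    def __init__(self):
        self._rules = {}

    def add(self, rule):
        self._rules[rule.rule_id] = rule

    def by_transaction(self, transaction_type):
        """Return rule ids tagged with the given transaction type."""
        return sorted(r.rule_id for r in self._rules.values()
                      if r.transaction_type == transaction_type)

    def redundancy_candidates(self):
        """Group rules with identical inputs, outputs, and normalized logic.

        Automation only proposes the groups; a reviewer decides whether the
        rules are truly redundant before consolidating them.
        """
        groups = defaultdict(list)
        for rule in self._rules.values():
            normalized = " ".join(rule.logic.upper().split())
            groups[(rule.inputs, rule.outputs, normalized)].append(rule.rule_id)
        return [sorted(ids) for ids in groups.values() if len(ids) > 1]
```

For example, two rules mined from different programs with the same inputs, outputs, and logic (ignoring case and whitespace) would be returned together by `redundancy_candidates()`, ready for a reviewer to annotate and consolidate.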

A parallel method of extracting knowledge from legacy applications uses modeling techniques, often based on UML. This method attempts to mine UML artifacts from the application code and related materials, and then create full-fledged models representing the complete application. Key considerations for mining models include:

- Convenient code representation helps to quickly filter out technical details.

- Allow user-selected artifacts to be quickly represented in UML entities.

- Allow the user to add relationships and annotate the objects to assemble a more complete UML model.

- Use external information if possible to refine use cases (screen flows) and activity diagrams—remember that some actors, flows, and so on may not appear in the code.

- Export to XML-based standard notation to facilitate refinement and forward-re-engineering through UML-based tools.

Because modernization with this method leverages the years of investment in the legacy code base, it is much less costly and less risky than starting a new application from ground zero. However, since it does involve change, it still carries risk. As a result, a number of other modernization options have been developed that involve less risk. The next set of modernization options provides a different set of benefits compared with a fully re-architected SOA environment. The important thing is that these other techniques allow an organization to break the process of reaching the optimal modernization target into a series of phases that lower the overall risk of modernization.

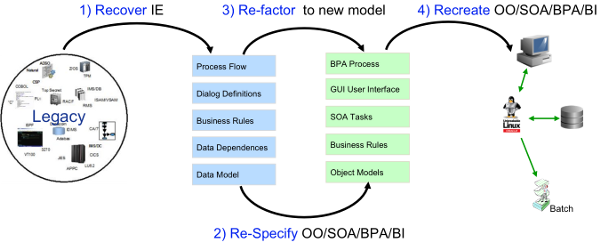

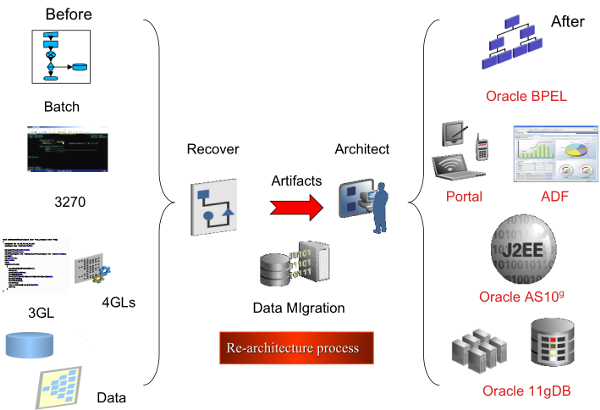

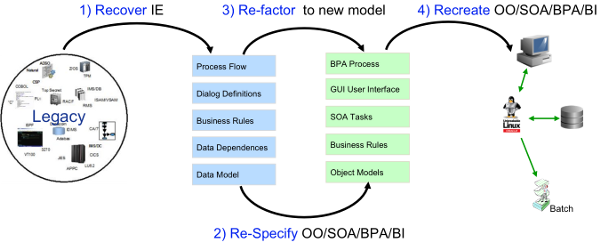

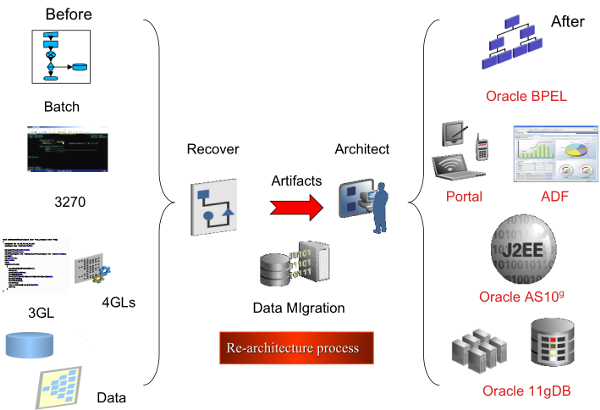

In the following figure, we can see that re-architecture takes a monolithic legacy system and applies technology and process to deliver a highly adaptable modern architecture.

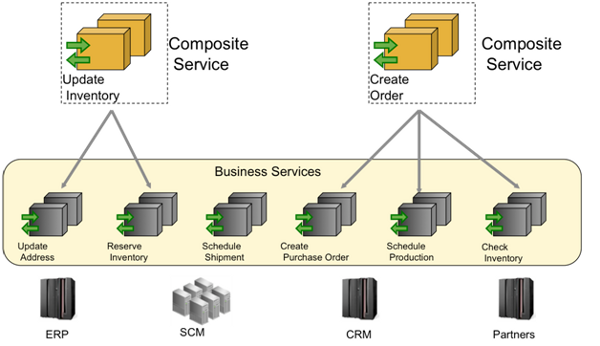

Since SOA integration is the least invasive approach to legacy application modernization, this technique allows legacy components to be used as part of an SOA infrastructure very quickly and with little risk. Further, it is often the first step in the larger modernization process. In this method, the source code remains mostly unchanged (we will talk more about that later) and the application is wrapped using SOA components, thus creating services that can be exposed and registered to an SOA management facility on a new platform, but are implemented via the existing legacy code. The exposed services can then be re-used and combined with the results of other, more invasive modernization techniques such as re-architecting. Using SOA integration, an organization can begin to make use of SOA concepts, including the orchestration of services into business processes, while leaving the legacy application intact.

Of course, the appropriate interfaces into the legacy application must exist and the code behind these interfaces must perform useful functions in a manner that can be packaged as services. SOA readiness assessment involves analysis of service granularity, exception handling, transaction integrity and reliability requirements, considerations of response time, message sizes, and scalability, issues of end-to-end messaging security, and requirements for services orchestration and SLA management. Following an assessment, any issues discovered need to be rectified before exposing components as services, and appropriate run-time and lifecycle governance policies created and implemented.

It is important to note that there are three tiers where integration can be done: Data, Screen, and Code. So, each of the tiers, based upon the state and structure of the code, can be extended with this technique. As mentioned before, this is often the first step in modernization.

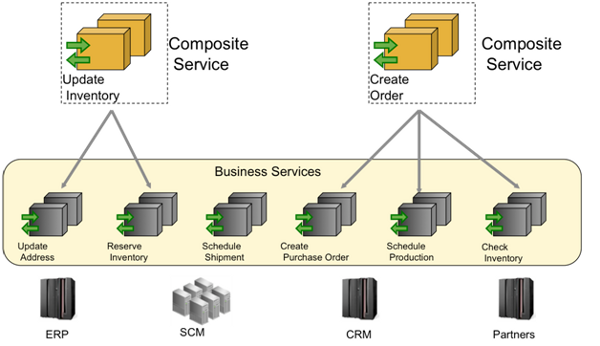

In this example, we can see that the legacy systems still stay on the legacy platform. Here, we isolate and expose this information as a business service using legacy adapters.
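The wrapping idea can be sketched in a few lines. This is an illustration under stated assumptions, not a real adapter API: the `LegacyAdapter` interface, the `CINQ` transaction id, the fixed-width record layout, and the status codes are all invented, and `FakeAdapter` stands in for whatever product actually reaches the legacy platform.

```python
from dataclasses import dataclass
from typing import Protocol


class LegacyAdapter(Protocol):
    """Hypothetical interface to a legacy transaction; not a real product API."""

    def invoke(self, transaction: str, record: str) -> str: ...


class FakeAdapter:
    """Stand-in adapter that returns canned fixed-width replies."""

    def invoke(self, transaction: str, record: str) -> str:
        customer_id = record[:8]
        if customer_id == "00000042":
            return "00" + "ADA LOVELACE".ljust(30)   # status 00: found
        return "04" + "".ljust(30)                   # status 04: not found


class CustomerNotFound(Exception):
    """Raised instead of branching to an error screen."""


@dataclass
class Customer:
    customer_id: str
    name: str


class CustomerService:
    """Coarse-grained service implemented by the unchanged legacy code."""

    TRANSACTION = "CINQ"  # invented transaction id

    def __init__(self, adapter: LegacyAdapter):
        self._adapter = adapter

    def get_customer(self, customer_id: str) -> Customer:
        record = customer_id.rjust(8, "0")           # build the request record
        reply = self._adapter.invoke(self.TRANSACTION, record)
        status, name = reply[:2], reply[2:32].strip()
        if status != "00":
            raise CustomerNotFound(customer_id)
        return Customer(record, name)
```

Callers see a single request and a single typed result or error, for example `CustomerService(FakeAdapter()).get_customer("42")`, while the record layout and transaction details stay hidden behind the adapter.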

The table below lists important considerations in SOA integration and enablement projects.

| Area | Considerations |
| --- | --- |
| Criteria for identifying well-defined services | Represent a core enterprise function re-usable by many client applications; present a coarse-grained interface; single interaction vs. multi-screen flows; UI, business logic, and data access layers; exception handling that returns results without branching to another screen |
| Discovering "Services" beyond screen flows | Conversational vs. sync/async calls; COMMAREA transactions (re-factored to use a reasonable message size) |
| Security policies and their enforcement | RACF vs. LDAP-based or SSO mechanism; end-to-end messaging security and Authentication, Authorization, Auditing |
| Services integration and orchestration | Wrapping and proxying via a middle-tier gateway vs. mainframe-based services; who is responsible for input validation; orchestrating "composite" MF services; supporting bidirectional integration |
| Quality of Service (QoS) requirements | Response time, throughput, scalability; end-to-end monitoring and SLA management; transaction integrity and global transaction coordination; end-to-end monitoring and tracing |
| Services lifecycle governance | Ownership of service interfaces and change control process; service discovery (repository, tools) |
