This Monday, Tesla’s “Autonomy Investor Day” kicked off at its headquarters in Palo Alto. At the invitation-only event, Tesla CEO Elon Musk and his fellow executives talked about the company’s new microchip, robotaxis hitting the road by next year, and more.

Here are some of the key takeaways from the event:

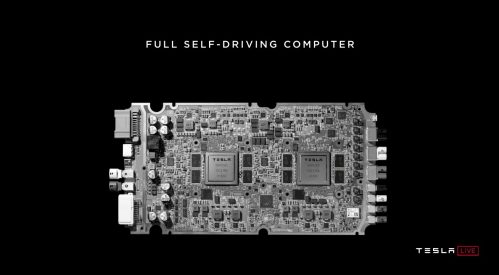

The Full Self-Driving (FSD) computer

Tesla shared details of its new custom chip, the Full Self-Driving (FSD) computer, previously known as Autopilot Hardware 3.0. Musk believes that the FSD computer is “the best chip in the world…objectively.”

Tesla replaced Nvidia’s Autopilot 2.5 computer with its own custom chip for Model S and Model X about a month ago. For Model 3 vehicles this change happened about 10 days ago. Musk said, “All cars being produced all have the hardware necessary — computer and otherwise — for full self-driving. All you need to do is improve the software.”

FSD is a high-performance, special-purpose chip, manufactured by Samsung, with a primary focus on autonomy and safety. It delivers a 21-fold improvement in frames-per-second processing over the previous-generation Tesla Autopilot hardware, which was powered by Nvidia. The company also said that, in the next few months, retrofits will be offered to current Tesla owners who bought the ‘Full Self-Driving package’.

Here’s the new Tesla FSD computer:

Credits: Tesla

Musk shared that the company has already started working on a next-generation chip. The design of FSD was completed within two years, and Tesla is now about halfway through the design of its successor.

Musk’s claim of having built the best chip should be taken with a pinch of salt, and it is sure to upset some engineers at Nvidia, Mobileye, and other companies that have been in the chip business for a long time. In a blog post, Nvidia applauded Tesla for its FSD computer but also highlighted a “few inaccuracies” in the comparison Musk made during the event:

“It’s not useful to compare the performance of Tesla’s two-chip Full Self Driving computer against NVIDIA’s single-chip driver assistance system. Tesla’s two-chip FSD computer at 144 TOPs would compare against the NVIDIA DRIVE AGX Pegasus computer which runs at 320 TOPS for AI perception, localization and path planning.”

While pointing out the “inaccuracies”, Nvidia left out a key point: power consumption. “Having a system that can do 160 TOPS means little if it uses 500 watts while tesla’s 144 TOPS system uses 72 watts,” a Redditor said.
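The Redditor's point comes down to performance per watt. Here is a minimal sketch of that arithmetic, using only the figures from the comment above. The 160 TOPS / 500 W system is the commenter's hypothetical rather than a measured Nvidia product, and the function name `tops_per_watt` is ours, chosen for illustration.

```python
def tops_per_watt(tops: float, watts: float) -> float:
    """Return compute efficiency in TOPS (tera-operations per second) per watt."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return tops / watts


# Figures come from the Redditor's comment quoted in the article.
# The second system is the commenter's hypothetical, not a real benchmark.
tesla_fsd = tops_per_watt(tops=144, watts=72)
hypothetical_rival = tops_per_watt(tops=160, watts=500)
ratio = tesla_fsd / hypothetical_rival

print(f"Tesla FSD:          {tesla_fsd:.2f} TOPS/W")
print(f"Hypothetical rival: {hypothetical_rival:.2f} TOPS/W")
print(f"Efficiency ratio:   {ratio:.2f}x")
```

On these numbers, the FSD computer would deliver about 2 TOPS per watt against 0.32 for the hypothetical system, which is why raw TOPS alone does not settle the comparison.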

Robotaxis will hit the roads in 2020

Musk shared that within the next year or so, Tesla’s robotaxis will enter the ride-hailing market and compete with Uber and Lyft. As with other ride-hailing services, users will be able to hail a Tesla for a ride. Musk’s bold claim is that these cars will not have drivers.

Musk announced, “I feel very confident predicting that there will be autonomous robotaxis from Tesla next year — not in all jurisdictions because we won’t have regulatory approval everywhere.” He did not share many details on what regulations he was talking about.

The service will allow Tesla owners to add their properly equipped vehicles to Tesla’s own ride-sharing app, following a business model similar to that of Uber or Airbnb. In areas where owners do not lend out enough cars, the company will provide dedicated robotaxis of its own.

Musk predicted that the average robotaxi will yield $30,000 in gross profit per car annually. About 25% to 30% of this profit will go to Tesla, so an owner would keep roughly $21,000 to $22,500 a year.
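The owner's take follows directly from those two numbers. The sketch below works it out for both ends of the stated 25% to 30% range. It assumes Tesla's cut is a flat fraction of gross profit, and the function name `owner_share` is hypothetical.

```python
def owner_share(gross_profit: float, tesla_cut: float) -> float:
    """Return what the owner keeps after Tesla takes its fraction of gross profit."""
    if not 0 <= tesla_cut <= 1:
        raise ValueError("tesla_cut must be a fraction between 0 and 1")
    return gross_profit * (1 - tesla_cut)


GROSS_PROFIT_PER_CAR = 30_000  # Musk's predicted annual gross profit, in USD

for cut in (0.25, 0.30):
    kept = owner_share(GROSS_PROFIT_PER_CAR, cut)
    print(f"Tesla cut {cut:.0%}: owner keeps ${kept:,.0f} per year")
```

A 30% cut leaves the owner about $21,000 a year, and a 25% cut leaves about $22,500.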

Musk’s plans for launching robotaxis next year look ambitious, and experts and the media are quite skeptical. The Partners for Automated Vehicle Education (PAVE) industry group tweeted:

Most vehicles available for sale today offer driver assistance features; in all vehicles available for sale today, even those with the most advanced of these aids, the driver must always monitor and be prepared to control the vehicle.

— PAVE Campaign (@PAVECampaign) April 22, 2019

Experts: Tesla’s Plans To Launch a Robotaxi Network Are Nonsense | via @futurism https://t.co/jJjZYK2gqp

— Rob McCargow #CYBERUK19 (@RobMcCargow) April 24, 2019

Musk says “Anyone relying on lidar is doomed”

Musk has been pretty vocal about his dislike of lidar. He calls the technology “a crutch for self-driving cars”. When the topic came up at the event, Musk said:

“Lidar is a fool’s errand. Anyone relying on lidar is doomed. Doomed! [They are] expensive sensors that are unnecessary. It’s like having a whole bunch of expensive appendices. Like, one appendix is bad, well now you have a whole bunch of them, it’s ridiculous, you’ll see.”

LIDAR, which stands for Light Detection and Ranging, is used by Uber, Waymo, Cruise, and many other companies building self-driving vehicles. LIDAR projects low-intensity, harmless, and invisible laser beams at a target or, in the case of self-driving cars, all around the vehicle. The reflected pulses are then measured for return time and wavelength to calculate the distance of an object from the sender.
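The return-time part of that calculation is the standard time-of-flight relation: the pulse travels to the object and back, so the distance is half the round-trip time multiplied by the speed of light. Below is a minimal sketch that uses only the return time and ignores wavelength. The function name and the 200-nanosecond example value are illustrative assumptions, not figures from any particular sensor.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # speed of light in a vacuum, metres per second


def distance_from_round_trip(round_trip_seconds: float) -> float:
    """Return the one-way distance in metres for a pulse's round-trip time."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # Divide by two because the pulse covers the distance twice (out and back).
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2


# Illustrative value: a pulse that returns after 200 nanoseconds.
example_distance = distance_from_round_trip(200e-9)
print(f"Object is about {example_distance:.2f} m away")
```

A 200-nanosecond round trip corresponds to an object roughly 30 metres away, which gives a sense of the timing precision such a sensor needs.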

Lidar can produce quite detailed visualizations of the environment around a self-driving car. However, Tesla believes that cameras can provide the same functionality. According to Musk, cameras offer much better resolution and, when combined with a neural net, can predict depth very well.

Andrej Karpathy, Tesla’s Senior Director of AI, took to the stage to explain the limitations of lidar. He said, “In that sense, lidar is really a shortcut. It sidesteps the fundamental problems, the important problem of visual recognition, that is necessary for autonomy. It gives a false sense of progress and is ultimately a crutch. It does give, like, really fast demos!” Karpathy further added, “You were not shooting lasers out of your eyes to get here.” While that is true, many felt the reasoning was completely flawed.

A Redditor in a discussion thread said, “Musk’s argument that ‘you drove here using your own two eyes with no lasers coming out of them’ is reductive and flawed. It should be obvious to anyone that our eyes are more complex than simple stereo cameras. If the Tesla FSD system can reliably perceive depth at or above the level of the human eye in all conditions, then they have done something truly remarkable. Judging by how Andrej Karpathy deflected the question about how well the system works in snowy conditions, I would assume they have not reached that level.”

Check out the live stream of the autonomy day on Tesla’s official website.
