Last week at the USENIX Annual Technical Conference (ATC) 2019 event, a team of researchers introduced ‘gg’. It is an open-source framework that helps developers execute applications using thousands of parallel threads on a cloud function service to achieve near-interactive completion times.

“In the future, instead of running these tasks on a laptop, or keeping a warm cluster running in the cloud, users might push a button that spawns 10,000 parallel cloud functions to execute a large job in a few seconds from start. gg is designed to make this practical and easy,” the paper reads.

At USENIX ATC, leading systems researchers present their cutting-edge work. The conference also gives researchers the opportunity to gain insight into topics like virtualization, network management and troubleshooting, cloud and edge computing, security, privacy, and more.

Why the gg framework was introduced

Cloud functions, better known as serverless computing, provide developers with finer granularity and lower latency. Though they were introduced for event handling and invoking web microservices, their granularity and scalability make them a good candidate for creating something called a “burstable supercomputer-on-demand”.

These systems are capable of launching a burst-parallel swarm of thousands of cloud functions, all working on the same job. The goal is to deliver results to an interactive user much faster than the user's own computer could, or than booting a cold cluster would, and more cheaply than maintaining a warm cluster for occasional tasks.

However, building applications on swarms of cloud functions poses various challenges. The paper lists some of them:

- Workers are stateless and may need to download large amounts of code and data on startup

- Workers have limited runtime before they are killed

- On-worker storage is limited but much faster than off-worker storage

- The number of available cloud workers depends on the provider’s overall load and can’t be known precisely upfront

- Worker failures occur when running at large scale

- Libraries and dependencies differ in a cloud function compared with a local machine

- Latency to the cloud makes roundtrips costly
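Two of the challenges above, worker failures and an execution model built on many short-lived workers, are commonly handled by re-running only the tasks that failed. The following is a minimal sketch of that idea, not gg's implementation: a local thread pool stands in for the cloud-functions service, and `flaky_worker` and `run_with_retries` are hypothetical names introduced here for illustration.

```python
import concurrent.futures as cf


def flaky_worker(task_id, attempt):
    """Hypothetical stand-in for one cloud-function invocation.

    To keep the example deterministic, task `task_id` fails on its first
    `task_id % 3` attempts and succeeds afterwards.
    """
    if attempt < task_id % 3:
        raise RuntimeError(f"worker for task {task_id} failed on attempt {attempt}")
    return task_id * task_id


def run_with_retries(task_ids, max_attempts=3, max_workers=32):
    """Fan tasks out in parallel and re-submit only the ones that failed."""
    results = {}
    pending = list(task_ids)
    for attempt in range(max_attempts):
        if not pending:
            break
        failed = []
        with cf.ThreadPoolExecutor(max_workers=max_workers) as pool:
            futures = {pool.submit(flaky_worker, t, attempt): t for t in pending}
            for future in cf.as_completed(futures):
                task = futures[future]
                try:
                    results[task] = future.result()
                except RuntimeError:
                    failed.append(task)  # retry in the next round
        pending = failed
    if pending:
        raise RuntimeError(f"tasks still failing after {max_attempts} attempts: {sorted(pending)}")
    return results


squares = run_with_retries(range(10))
print(sorted(squares.items()))
```

Because each task here is a pure function of its input, re-running a failed task is safe; that property is what makes a retry loop like this one reasonable.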

How gg works

Researchers have previously addressed some of these challenges individually. The gg framework aims to address the principal challenges faced by burst-parallel cloud-function applications. With gg, developers and users can build applications that burst from zero to thousands of parallel threads to achieve low latency for everyday tasks.

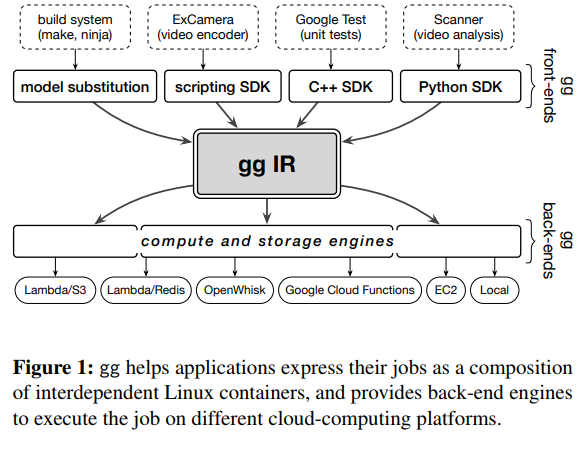

The following diagram shows its composition:

Source: From Laptop to Lambda: Outsourcing Everyday Jobs to Thousands of Transient Functional Containers

The gg framework enables you to build applications on an abstraction of transient, functional containers that are also known as thunks. Applications can express their jobs in terms of interrelated thunks or Linux containers and then schedule, instantiate, and execute those thunks on a cloud-functions service.
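To make the idea of interrelated thunks more concrete, here is a small sketch of a dependency graph of deferred, pure computations that are evaluated on demand and cached by a content hash. It is only an illustration under stated assumptions: the `Thunk` class, the `FUNCTIONS` table, and `force` are hypothetical names, the "compile" and "link" steps are string stand-ins, and none of this is gg's actual thunk format or API.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Thunk:
    """A deferred call to a pure function (hypothetical model, not gg's format)."""
    func: str          # key into FUNCTIONS
    args: tuple = ()   # literal values or other Thunk objects

    def content_hash(self):
        # The hash covers the function name and, recursively, every input,
        # so identical work always maps to the same key.
        parts = [
            a.content_hash() if isinstance(a, Thunk) else json.dumps(a)
            for a in self.args
        ]
        payload = json.dumps([self.func, parts])
        return hashlib.sha256(payload.encode()).hexdigest()


# Stand-ins for real build steps; each is a pure function of its inputs.
FUNCTIONS = {
    "compile": lambda src: f"obj({src})",
    "link": lambda *objs: "bin[" + ",".join(objs) + "]",
}


def force(thunk, cache):
    """Evaluate a thunk, resolving its dependencies first and reusing cached results."""
    key = thunk.content_hash()
    if key in cache:
        return cache[key]
    inputs = [force(a, cache) if isinstance(a, Thunk) else a for a in thunk.args]
    result = FUNCTIONS[thunk.func](*inputs)
    cache[key] = result
    return result


a = Thunk("compile", ("a.c",))
b = Thunk("compile", ("b.c",))
program = Thunk("link", (a, b))

cache = {}
print(force(program, cache))  # prints: bin[obj(a.c),obj(b.c)]
```

In this sketch the two `compile` thunks do not depend on each other, so a scheduler would be free to run them on separate workers; only `link` has to wait for both.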

The framework can containerize and execute existing programs for tasks such as software compilation, unit tests, and video encoding with the help of short-lived cloud functions. In some cases, this can give substantial performance gains. Depending on how often the task runs, it can also be less expensive than keeping a comparable cluster running continuously.

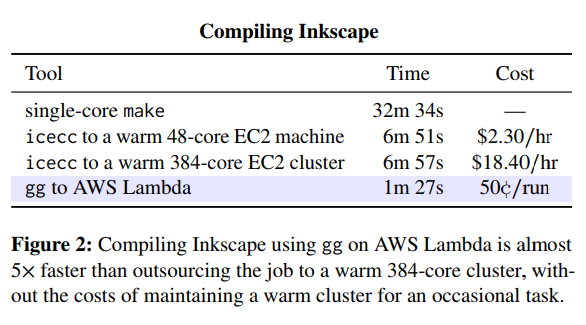

The functional approach and fine-grained dependency management of gg give significant performance benefits when compiling large programs from a cold start. Here’s a table showing a summary of the results for compiling Inkscape, an open-source software:

Source: From Laptop to Lambda: Outsourcing Everyday Jobs to Thousands of Transient Functional Containers

When running “cold” on AWS Lambda, gg was nearly 5x faster than an existing icecc system running on a 48-core or 384-core cluster of VMs.

To learn more, read the paper: From Laptop to Lambda: Outsourcing Everyday Jobs to Thousands of Transient Functional Containers. You can also check out gg’s code on GitHub.

Also, watch the talk in which Keith Winstein, an assistant professor of Computer Science at Stanford University, explains the purpose of gg and demonstrates exactly how it works:
