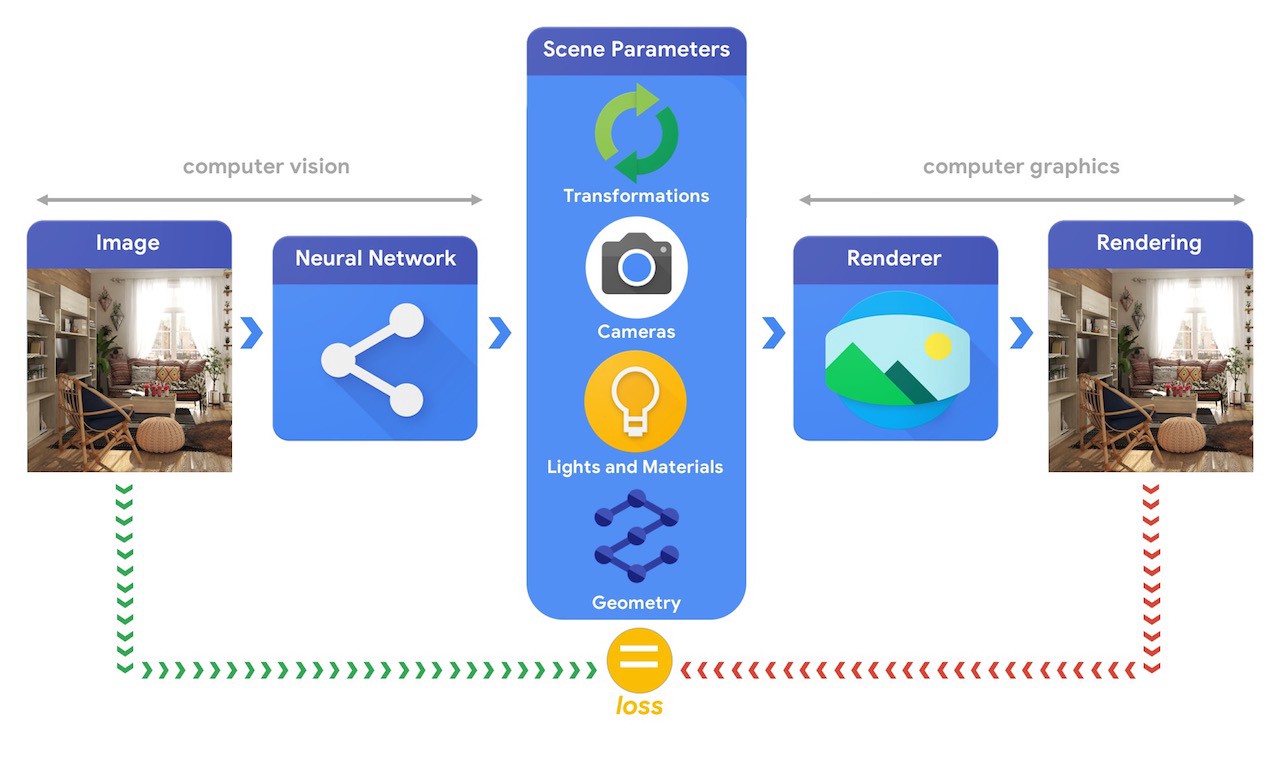

Yesterday, the team at TensorFlow introduced TensorFlow Graphics. A computer graphics pipeline requires a representation of 3D objects and their positioning in the scene, a description of the material they are made of, lights, and a camera. A renderer then interprets this scene description to generate a synthetic rendering.

In contrast, a computer vision system starts from an image and tries to infer the parameters of the scene. This makes it possible to predict which objects are in the scene, what materials they are made of, and their three-dimensional position and orientation.

Developers usually need large quantities of data to train machine learning systems capable of solving these complex 3D vision tasks. Because labelling data is an expensive and complex process, it is important to have mechanisms for designing machine learning models that can comprehend the three-dimensional world while being trained without much supervision. Combining computer vision and computer graphics techniques makes it possible to leverage the vast amounts of unlabelled data.

For instance, this can be achieved through analysis by synthesis, where the vision system extracts the scene parameters and the graphics system renders an image back from them. If the rendering matches the original image, the vision system has accurately extracted the scene parameters. In this setup, computer vision and computer graphics go hand in hand, forming a single machine learning system, similar to an autoencoder, that can be trained in a self-supervised manner.

Image source: TensorFlow
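The analysis-by-synthesis loop described above can be illustrated with a deliberately tiny sketch. It does not use TensorFlow Graphics or a neural network: the "scene" is a single hypothetical `brightness` parameter, the "renderer" is a made-up three-pixel function, and the "vision" step recovers the parameter by gradient descent on the reconstruction error. All names and values are assumptions chosen for illustration.

```python
ALBEDO = (0.2, 0.5, 0.9)  # hypothetical per-pixel reflectance of the toy scene


def render(brightness, albedo=ALBEDO):
    """Toy 'graphics' step: turn one scene parameter into a 3-pixel image."""
    return [brightness * a for a in albedo]


def reconstruction_loss(image, rendering):
    """Mean squared error between the observed image and the re-rendering."""
    return sum((i - r) ** 2 for i, r in zip(image, rendering)) / len(image)


def infer_brightness(image, steps=200, learning_rate=0.5, albedo=ALBEDO):
    """Toy 'vision' step: recover brightness by minimising the rendering error."""
    estimate = 0.0
    for _ in range(steps):
        rendering = render(estimate, albedo)
        # Analytic gradient of the MSE with respect to the brightness estimate.
        grad = sum(2 * (r - i) * a
                   for i, r, a in zip(image, rendering, albedo)) / len(image)
        estimate -= learning_rate * grad
    return estimate


observed = render(0.7)                  # an unlabelled "photo"; 0.7 is never shown to the solver
recovered = infer_brightness(observed)  # only the pixels are used as supervision
print(recovered, reconstruction_loss(observed, render(recovered)))
```

No label for the brightness is ever provided; the only training signal is how well the re-rendered image matches the input, which is what makes the setup self-supervised. In a real system the per-image optimisation would typically be replaced by a trained network and a differentiable renderer.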

We will now explore some of the functionalities of TensorFlow Graphics.

Object transformations

Object transformations control the position of objects in space. For example, the axis-angle formalism can be used to rotate a cube: when the rotation axis points up and the angle is positive, the cube rotates counterclockwise. This task is also at the core of many applications, including robots that interact with their environment.
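To make the axis-angle idea concrete, here is a minimal sketch in plain Python using Rodrigues' rotation formula. It is not the TensorFlow Graphics API; the function name `rotate_axis_angle`, the choice of z as the "up" axis, and the unit cube are assumptions made for illustration.

```python
import math


def rotate_axis_angle(point, axis, angle):
    """Rotate a 3D point about a unit-length axis by `angle` radians.

    Uses Rodrigues' formula:
    p' = p cos(a) + (k x p) sin(a) + k (k . p) (1 - cos(a))
    """
    px, py, pz = point
    kx, ky, kz = axis
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    dot = kx * px + ky * py + kz * pz
    cross = (ky * pz - kz * py, kz * px - kx * pz, kx * py - ky * px)
    return tuple(
        p * cos_a + c * sin_a + k * dot * (1.0 - cos_a)
        for p, c, k in zip(point, cross, axis)
    )


# Corners of a unit cube centred on the origin.
cube = [(x, y, z) for x in (-0.5, 0.5) for y in (-0.5, 0.5) for z in (-0.5, 0.5)]

up = (0.0, 0.0, 1.0)  # assumption: z is the "up" axis
rotated_cube = [rotate_axis_angle(corner, up, math.pi / 2) for corner in cube]
```

With the axis pointing up and a positive angle, a point on the x axis moves towards the y axis, which is a counterclockwise rotation when viewed from above.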

Modelling cameras

Camera models play a crucial role in computer vision as they influence the appearance of three-dimensional objects projected onto the image plane. For more details about camera models and a concrete example of how to use them in TensorFlow, check out the Colab example.
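As a rough illustration of how a camera model shapes what ends up in the image, the sketch below implements an ideal pinhole (perspective) projection in plain Python. It is independent of the Colab example mentioned above; the function name `project_pinhole` and the sample focal length and principal point are assumptions.

```python
def project_pinhole(point, focal, principal_point):
    """Project a camera-space 3D point onto the image plane of an ideal pinhole camera."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point must be in front of the camera (z > 0)")
    fx, fy = focal
    cx, cy = principal_point
    # Perspective divide: points farther away land closer to the principal point.
    return (fx * x / z + cx, fy * y / z + cy)


near = project_pinhole((1.0, 2.0, 4.0), focal=(100.0, 100.0), principal_point=(50.0, 50.0))
far = project_pinhole((1.0, 2.0, 8.0), focal=(100.0, 100.0), principal_point=(50.0, 50.0))
print(near, far)
```

Doubling the depth halves the projected offset from the principal point, and changing the focal length scales that offset, which is why camera parameters have such a strong effect on the projected appearance of an object.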

Material models

Material models define how light interacts with objects to give them their unique appearance. Some materials, like plaster, reflect light uniformly in all directions, while others, like mirrors, are purely specular. Users can experiment with the parameters of the material and the light to develop a good sense of how they interact.
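The difference between material behaviours can be sketched with two classic reflection terms: a Lambertian (diffuse) term, whose result does not depend on where the viewer stands, and a Phong-style specular term, which peaks around the mirror direction. This is a generic illustration in plain Python, not the material model shipped with TensorFlow Graphics; the function names and the `shininess` value are assumptions.

```python
import math


def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def lambertian(normal, to_light, albedo=1.0):
    """Diffuse reflection: depends on the light angle only, not on the viewer."""
    return albedo * max(0.0, dot(normal, to_light))


def phong_specular(normal, to_light, to_viewer, shininess=50):
    """Specular reflection: bright only near the mirror direction of the light."""
    n_dot_l = dot(normal, to_light)
    # Mirror the light direction about the surface normal: r = 2 (n . l) n - l
    mirror = tuple(2.0 * n_dot_l * n - l for n, l in zip(normal, to_light))
    return max(0.0, dot(mirror, to_viewer)) ** shininess


normal = (0.0, 0.0, 1.0)
to_light = normalize((-1.0, 0.0, 1.0))        # light arriving at 45 degrees
viewer_mirror = normalize((1.0, 0.0, 1.0))    # viewer in the mirror direction
viewer_above = (0.0, 0.0, 1.0)                # viewer looking straight down

diffuse = lambertian(normal, to_light)
highlight = phong_specular(normal, to_light, viewer_mirror)
off_highlight = phong_specular(normal, to_light, viewer_above)
print(diffuse, highlight, off_highlight)
```

The diffuse value is the same for every viewer, while the specular value drops off sharply once the viewer leaves the mirror direction; raising `shininess` narrows the highlight further.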

TensorBoard 3d

TensorFlow Graphics features a TensorBoard plugin for interactively visualizing 3D meshes and point clouds. This enables visual debugging, which helps to assess whether an experiment is going in the right direction.

To learn more, check out the post on Medium.
