The essence of Augmented Reality is that your device recognizes objects in the real world and renders the computer graphics registered to the same 3D space, providing the illusion that the virtual objects are in the same physical space with you.

Since augmented reality was first invented decades ago, the types of targets the software can recognize has progressed from very simple markers for images and natural feature tracking to full spatial map meshes. There are many AR development toolkits available; some of them are more capable than others of supporting a range of targets.

The following is a survey of various Augmented Reality target types. We will go into more detail in later chapters, as we use different targets in different projects.

Marker

The most basic target is a simple marker with a wide border. The advantage of marker targets is they’re readily recognized by the software with very little processing overhead and minimize the risk of the app not working, for example, due to inconsistent ambient lighting or other environmental conditions. The following is the Hiro marker used in example projects in ARToolkit:

Coded Markers

Taking simple markers to the next level, areas within the border can be reserved for 2D barcode patterns. This way, a single family of markers can be reused to pop up many different virtual objects by changing the encoded pattern. For example, a children’s book may have an AR pop up on each page, using the same marker shape, but the bar code directs the app to show only the objects relevant to that page in the book.

The following is a set of very simple coded markers from ARToolkit:

Vuforia includes a powerful marker system called VuMark that makes it very easy to create branded markers, as illustrated in the following image. As you can see, while the marker styles vary for specific marketing purposes, they share common characteristics, including a reserved area within an outer border for the 2D code:

Images

The ability to recognize and track arbitrary images is a tremendous boost to AR applications as it avoids the requirement of creating and distributing custom markers paired with specific apps. Image tracking falls into the category of natural feature tracking (NFT). There are characteristics that make a good target image, including having a well-defined border (preferably eight percent of the image width), irregular asymmetrical patterns, and good contrast. When an image is incorporated in your AR app, it’s first analyzed and a feature map (2D node mesh) is stored and used to match real-world image captures, say, in frames of video from your phone.

Multi-targets

It is worth noting that apps may be set up to see not just one marker in view but multiple markers. With multitargets, you can have virtual objects pop up for each marker in the scene simultaneously.

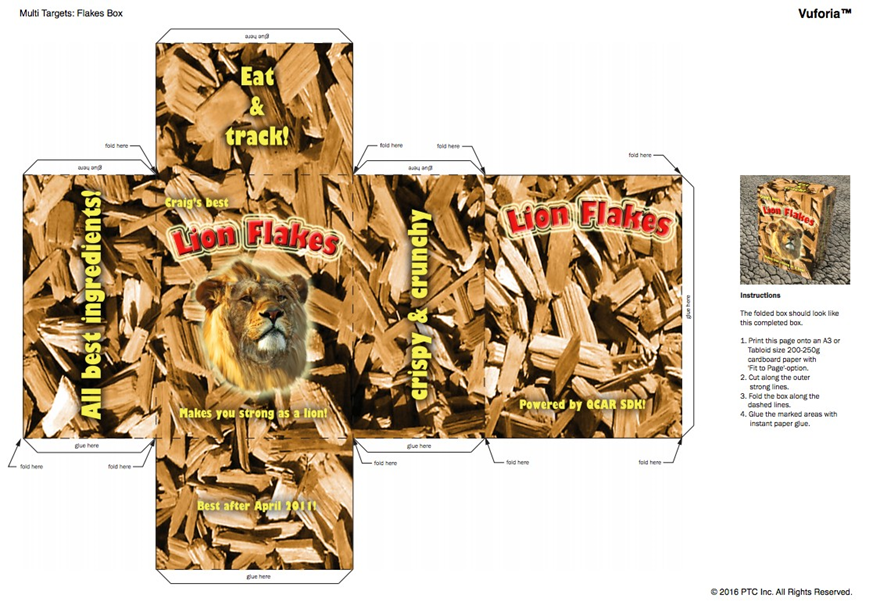

Similarly, markers can be printed and folded or pasted on geometric objects, such as product labels or toys. The following is an example cereal box target:

Text recognition

If a marker can include a 2D bar code, then why not just read text? Some AR SDKs allow you to configure your app (train) to read text in specified fonts. Vuforia goes further with a word list library and the ability to add your own words.

Simple shapes

Your AR app can be configured to recognize basic shapes such as a cuboid or cylinder with specific relative dimensions. Its not just the shape but its measurements that may distinguish one target from another: Rubik’s Cube versus a shoe box, for example. A cuboid may have width, height, and length. A cylinder may have a length and different top and bottom diameters (for example, a cone). In Vuforia’s implementation of basic shapes, the texture patterns on the shaped object are not considered, just anything with a similar shape will match. But when you point your app to a real-world object with that shape, it should have enough textured surface for good edge detection; a solid white cube would not be easily recognized.

Object recognition

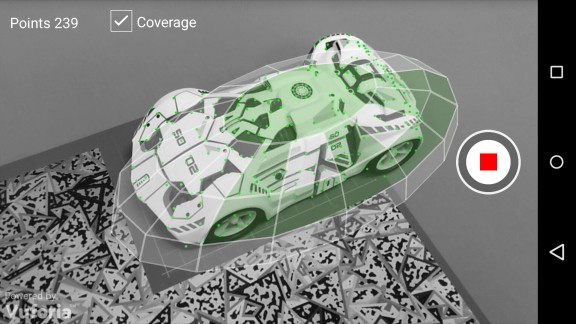

The ability to recognize and track complex 3D objects is similar but goes beyond 2D image recognition. While planar images are appropriate for flat surfaces, books or simple product packaging, you may need object recognition for toys or consumer products without their packaging. Vuforia, for example, offers Vuforia Object Scanner to create object data files that can be used in your app for targets. The following is an example of a toy car being scanned by Vuforia Object Scanner:

Spatial maps

Earlier, we introduced spatial maps and dynamic spatial location via SLAM. SDKs that support spatial maps may implement their own solutions and/or expose access to a device’s own support. For example, the HoloLens SDK Unity package supports its native spatial maps, of course. Vuforia’s spatial maps (called Smart Terrain) does not use depth sensing like HoloLens; rather, it uses visible light camera to construct the environment mesh using photogrammetry. Apple ARKit and Google ARCore also map your environment using the camera video fused with other sensor data.

Geolocation

A bit of an outlier, but worth mentioning, AR apps can also use just the device’s GPS sensor to identify its location in the environment and use that information to annotate what is in view. I use the word annotate because GPS tracking is not as accurate as any of the techniques we have mentioned, so it wouldn’t work for close-up views of objects. But it can work just fine, say, standing atop a mountain and holding your phone up to see the names of other peaks within the view or walking down a street to look up Yelp! reviews of restaurants within range. You can even use it for locating and capturing Pokémon.

[box type=”note” align=”” class=”” width=””]You read an excerpt from the book, Augmented Reality for Developers, by Jonathan Linowes, and Krystian Babilinski. To learn how to use these targets and to build a variety of AR apps, check the book now![/box]

![How to create sales analysis app in Qlik Sense using DAR method [Tutorial] Financial and Technical Data Analysis Graph Showing Search Findings](https://hub.packtpub.com/wp-content/uploads/2018/08/iStock-877278574-218x150.jpg)