Amnesty International has partnered with Element AI to release a Troll Patrol report on online abuse against women on Twitter. The findings come from their Troll Patrol project, which invites human rights researchers, technical experts, and online volunteers to build a crowd-sourced dataset of online abuse against women.

6,500+ volunteers signed up. Sorted through 228,000 tweets sent to 778 women politicians & journalists in UK & USA in 2017.

What did they find? 1.1 million toxic tweets were sent to women in the study across the year—one every 30 seconds on average. https://t.co/GUEO22C95j

— Amnesty International (@amnesty) December 18, 2018
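The "one every 30 seconds" figure can be sanity-checked with simple arithmetic. The snippet below is only a quick check of the numbers quoted in the tweet, and it assumes the tweets were spread evenly across the whole of 2017.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # 2017 was not a leap year
toxic_tweets = 1_100_000               # figure quoted in the tweet above

# Average gap between toxic tweets, assuming an even spread over the year.
seconds_per_tweet = SECONDS_PER_YEAR / toxic_tweets
print(round(seconds_per_tweet, 1))  # 28.7
```

That works out to roughly one tweet every 29 seconds, in line with the rounded figure Amnesty quotes.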

Abuse of women on social media websites has been rising at an unprecedented rate. Social media websites have a responsibility to respect human rights and to ensure that women using their platforms are able to express themselves freely and without fear. However, Amnesty's findings suggest that this has not been the case with Twitter.

Amnesty’s methodology was powered by machine learning

Amnesty and Element AI surveyed tweets sent to 778 women journalists and politicians from the UK and US throughout 2017 and then used machine learning techniques to quantitatively analyze abuse against women.

- The first step was to design a large, unbiased dataset of tweets mentioning 778 women politicians and journalists from the UK and US.

- Next, over 6,500 volunteers (aged 18 to 70 and from over 150 countries) analyzed 288,000 unique tweets to create a labeled dataset of abusive or problematic content. Volunteers answered simple questions, such as whether a tweet was abusive or problematic and, if so, whether it revealed misogynistic, homophobic or racist abuse or other types of violence.

- Three experts also categorized a sample of 1,000 tweets to assess the quality of the labels assigned by the digital volunteers.

- Element AI then applied data science techniques, using a subset of the categorizations made by the volunteers (called Decoders) and the experts, to extrapolate the abuse analysis.
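The labeling and quality-check steps above can be sketched in a few lines of Python. This is a minimal illustration, not Amnesty's or Element AI's actual pipeline: the label names, the majority-vote rule, and the sample data are all assumptions made for the example.

```python
from collections import Counter

# Hypothetical volunteer labels: tweet id -> labels from several volunteers.
volunteer_labels = {
    "t1": ["abusive", "abusive", "problematic"],
    "t2": ["neither", "neither", "neither"],
    "t3": ["problematic", "neither", "problematic"],
    "t4": ["neither", "abusive", "neither"],
}

# Hypothetical expert labels for a small quality-check sample.
expert_labels = {"t1": "abusive", "t3": "problematic", "t4": "problematic"}


def majority_label(labels):
    """Return the most common label; ties go to the label seen first."""
    return Counter(labels).most_common(1)[0][0]


def agreement_rate(crowd, experts):
    """Share of expert-checked tweets where the crowd label matches."""
    shared = [tid for tid in experts if tid in crowd]
    matches = sum(crowd[tid] == experts[tid] for tid in shared)
    return matches / len(shared)


# Collapse each tweet's volunteer labels into a single crowd label.
crowd = {tid: majority_label(labels) for tid, labels in volunteer_labels.items()}
print(crowd)
print(agreement_rate(crowd, expert_labels))
```

Here the crowd and the experts agree on two of the three expert-checked tweets. A real project would likely use a more careful agreement measure and many more labels per tweet.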

Key findings from the report

Per the findings of the Troll Patrol report:

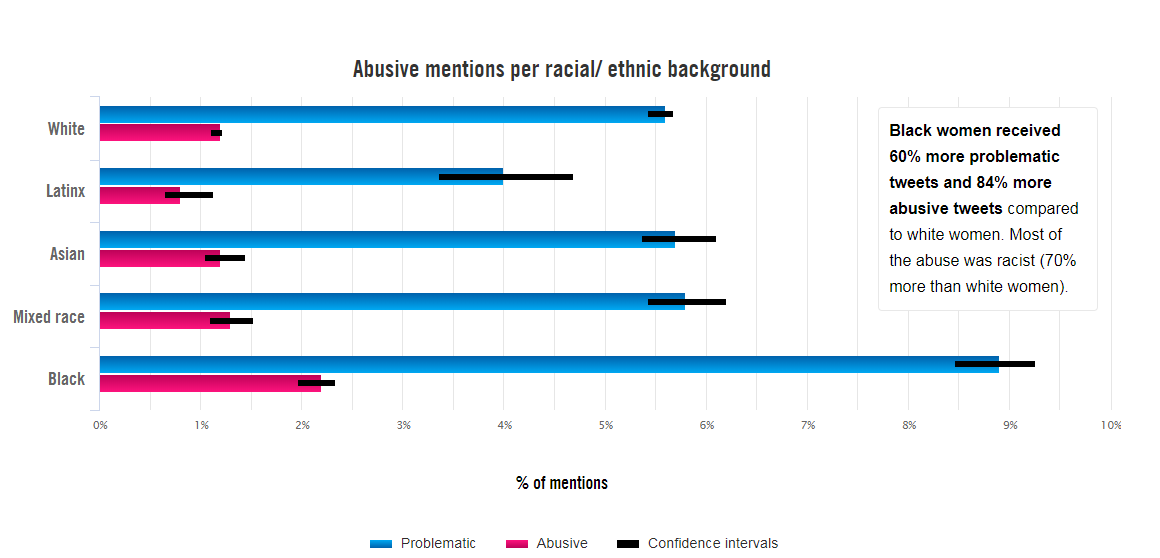

Source: Amnesty

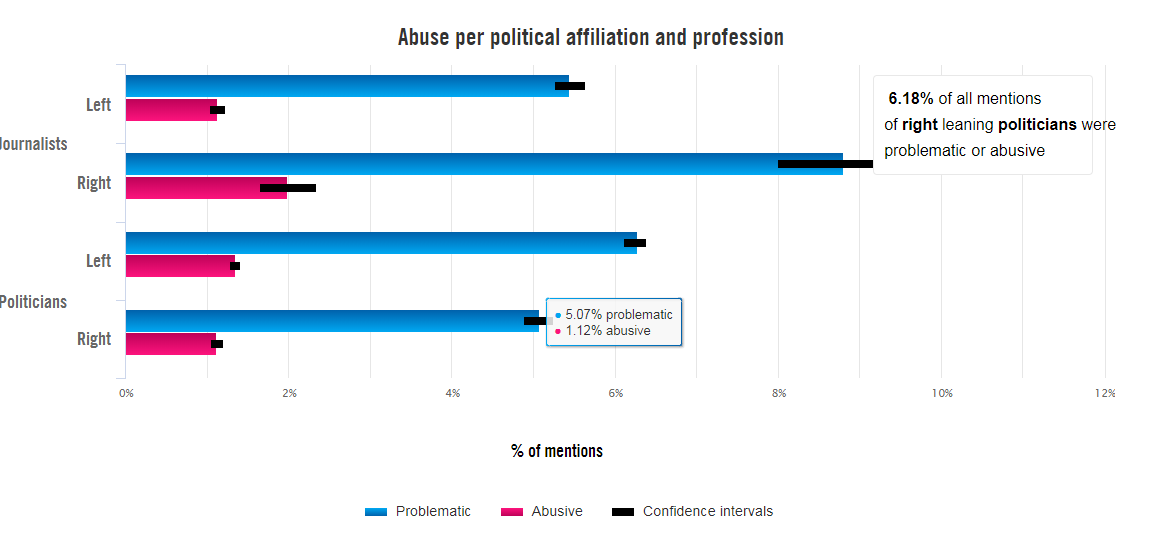

Source: Amnesty

What does this mean for people in tech?

Social media organizations are repeatedly failing in their responsibility to protect women’s rights online. They fall short of adequately investigating and responding to reports of violence and abuse in a transparent manner, which leads many women to silence or censor themselves on the platform. Such abuse also hinders freedom of expression online and undermines women’s mobilization for equality and justice, particularly for groups who already face discrimination and marginalization.

What can tech platforms do?

One of the recommendations of the report is that social media platforms should publicly share comprehensive and meaningful information about reports of violence and abuse against women, as well as other groups, on their platforms. They should also talk in detail about how they are responding to it.

Although Twitter and other platforms are using machine learning for content moderation and flagging, they should be transparent about the algorithms they use. They should publish information about training data, methodologies, moderation policies and technical trade-offs (such as the trade-off between precision and recall) for public scrutiny. Machine learning automation should ideally be part of a larger content moderation system characterized by human judgment, greater transparency, rights of appeal and other safeguards.
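To make the precision and recall trade-off concrete, here is a minimal sketch with made-up numbers. The scores, labels, and thresholds are hypothetical and do not come from Twitter or from the report. The idea is that a stricter flagging threshold tends to raise precision (fewer wrongly flagged tweets) at the cost of recall (more abusive tweets missed).

```python
def precision_recall(scores, labels, threshold):
    """Precision and recall when tweets scoring >= threshold are flagged."""
    flagged = [s >= threshold for s in scores]
    tp = sum(f and y for f, y in zip(flagged, labels))        # correctly flagged
    fp = sum(f and not y for f, y in zip(flagged, labels))    # wrongly flagged
    fn = sum((not f) and y for f, y in zip(flagged, labels))  # missed abuse
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical model scores and ground-truth labels (True = abusive).
scores = [0.95, 0.80, 0.65, 0.40, 0.30, 0.10]
labels = [True, True, False, True, False, False]

for threshold in (0.9, 0.5, 0.2):
    p, r = precision_recall(scores, labels, threshold)
    print(f"threshold={threshold}: precision={p:.2f}, recall={r:.2f}")
```

With this toy data, the strictest threshold flags only one tweet and is always right but misses two abusive tweets, while the loosest threshold catches every abusive tweet but also flags two harmless ones. Publishing where a platform sits on this curve is the kind of disclosure the report asks for.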

Amnesty, in collaboration with Element AI, also developed a machine learning model to better understand the potential and risks of using machine learning in content moderation systems. This model was able to achieve results comparable to their digital volunteers at predicting abuse, although it is ‘far from perfect still’, Amnesty notes. It achieves about a 50% accuracy level when compared to the judgment of experts: it flagged 2 in every 14 tweets as abusive or problematic, whereas experts identified only 1 in every 14 tweets as abusive or problematic.
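The 2-in-14 and 1-in-14 figures can be related to the roughly 50% figure with a short calculation. This is only an illustration of the arithmetic, and it rests on an assumption the article does not confirm: that every tweet the experts identified was also among the tweets the model flagged.

```python
from fractions import Fraction

model_flag_rate = Fraction(2, 14)   # share of tweets the model flags (from the report)
expert_flag_rate = Fraction(1, 14)  # share of tweets experts identify (from the report)

# Assumption: every tweet the experts identify is also flagged by the model.
# Under that assumption, the share of model flags that experts agree with is:
max_precision = expert_flag_rate / model_flag_rate
print(float(max_precision))  # 0.5
```

If the model also missed some of the tweets the experts identified, the share of its flags that experts agree with would be lower than this.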

“Troll Patrol isn’t about policing Twitter or forcing it to remove content. We are asking it to be more transparent, and we hope that the findings from Troll Patrol will compel it to make that change. Crucially, Twitter must start being transparent about how exactly they are using machine learning to detect abuse, and publish technical information about the algorithms they rely on,” said Milena Marin, senior advisor for tactical research at Amnesty International.

Read more: The full list of Amnesty’s recommendations to Twitter.

People on Twitter (the irony) are shocked at the release of Amnesty’s report and #ToxicTwitter is trending.

Look at these figures:

1.1 million abusive or problematic tweets sent to 778 women journalists and politicians in 2017

Seriously – this is wrong and #ToxicTwitter – one tweet every 30 seconds – go read the report. https://t.co/4F9bE9p2UJ

— Gregory Storer (@gregorystorer) December 18, 2018

The figures are even starker for black women: 84% more likely than white women to be abused on #ToxicTwitter. Shocking findings from a timely report. https://t.co/y0rVeWTyXL @WritersofColour

— Shiromi (@blimundaseyes) December 18, 2018

Rape threats, death threats, racist & sexist abuse silence women on @Twitter – and it’s still what’s happening on your #ToxicTwitter, @jack.

— Michael Link (@MikeWLink) December 17, 2018

Female politicians and journalists abused every 30 seconds on Twitter via @FT (cc @kayburley)

https://t.co/ayC6arcbEq

— Beth Rigby (@BethRigby) December 18, 2018

Check out the full Troll Patrol report on Amnesty. Also, check out their machine learning based methodology in detail.
