In Part 1, we covered the basics of text mining in R: selecting data, preparing and cleaning it, and then performing various operations on it to visualize that data. In this post we look at a simple use case showing how we can derive real meaning and value from a visualization, by seeing how a simple word cloud can help you understand the impact of an advertisement.

Building the document matrix

A common technique in text mining is to represent a collection of documents as a document term matrix. A document term matrix is simply a matrix where rows are documents, columns are terms, and each cell holds the number of times that term occurs in that document. If you reverse the order and have terms as rows and documents as columns, it’s called a term document matrix. For example, let’s say we have two documents, D1 and D2:

D1 = “I like cats”

D2 = “I hate cats”

Then the document term matrix would look like:

|    | I | like | hate | cats |
|----|---|------|------|------|
| D1 | 1 | 1    | 0    | 1    |
| D2 | 1 | 0    | 1    | 1    |
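As a quick check, the toy matrix for D1 and D2 can be rebuilt in a few lines of base R. This is only a sketch of the counting idea: it splits on single spaces and is not how the tm package tokenizes text. The names docs, tokens, vocab, dtm, and tdm_toy are illustrative and do not come from the project code.

```r
# Two toy documents from the example above
docs <- c(D1 = "I like cats", D2 = "I hate cats")

# Naive tokenization: split each document on single spaces
tokens <- strsplit(docs, " ", fixed = TRUE)

# Vocabulary in order of first appearance across the documents
vocab <- unique(unlist(tokens, use.names = FALSE))

# Rows are documents, columns are terms, cells are occurrence counts
dtm <- matrix(0L, nrow = length(docs), ncol = length(vocab),
              dimnames = list(names(docs), vocab))
for (doc in names(tokens)) {
  counts <- table(tokens[[doc]])
  dtm[doc, names(counts)] <- as.integer(counts)
}

print(dtm)

# Transposing gives the term document matrix (terms as rows)
tdm_toy <- t(dtm)
print(tdm_toy)
```

The columns here follow the order in which words first appear, so they may be arranged differently from the table, but the counts per document are the same.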

For our project, all you need to do to make a document term matrix in R is call DocumentTermMatrix() on the corpus, like this:

tdm <- DocumentTermMatrix(mycorpus)

You can see information on your document term matrix by using print, like this:

print(tdm)

<<DocumentTermMatrix (documents: 4688, terms: 18363)>>

Non-/sparse entries: 44400/86041344

Sparsity : 100%

Maximal term length: 65

Weighting : term frequency (tf)
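The numbers in that printout can be cross-checked with a little arithmetic, which also shows why sparsity is reported as 100% even though some cells are non-zero. The sketch below only reuses the figures printed above; the variable names are illustrative.

```r
# Figures copied from the print(tdm) output above
n_docs     <- 4688
n_terms    <- 18363
non_sparse <- 44400
sparse     <- 86041344

# Every cell is either non-sparse (non-zero) or sparse (zero)
total_cells <- n_docs * n_terms
stopifnot(total_cells == non_sparse + sparse)

# Share of zero cells; close enough to 1 that it prints as 100%
sparsity <- sparse / total_cells
print(round(sparsity * 100, 2))
```

Because almost every cell is zero, converting a matrix like this to a dense one with as.matrix() can use a lot of memory on larger corpora.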

Next, we need to sum up all the values in each term column so that we can derive the frequency of each term’s occurrence. We also want to sort those values from highest to lowest. You can use this code:

m <- as.matrix(tdm)

v <- sort(colSums(m),decreasing=TRUE)

Next we will use names() to pull each term’s name, which in our case is a word. Then we want to build a data frame that associates each word with its frequency of occurrence. Finally we want to create our word cloud, but remove any terms that occur fewer than 45 times to reduce clutter. You could also use max.words to limit the total number of words in your word cloud. So your final code should look like this:

words <- names(v)

d <- data.frame(word=words, freq=v)

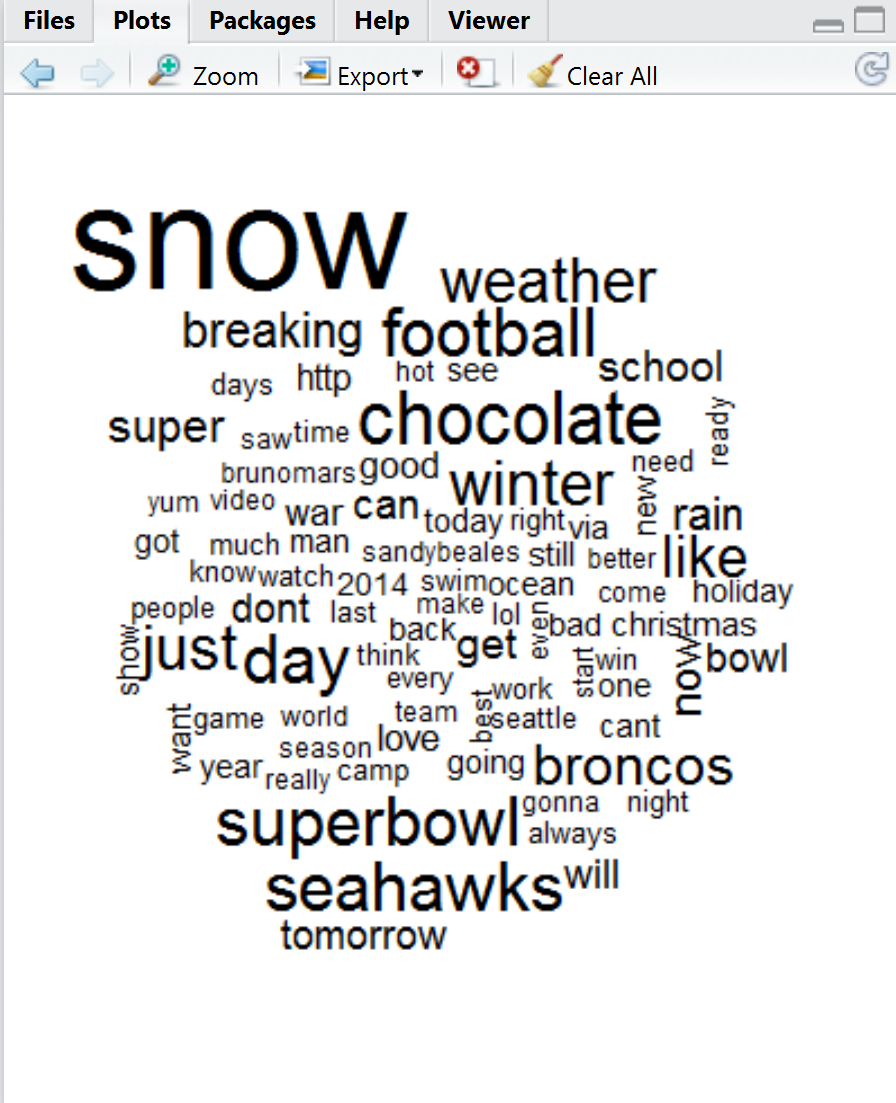

wordcloud(d$word,d$freq,min.freq=45)If you run this in R studio you should see something like the figure which shows the words with highest occurrence in our corpus. The wordcloud object automatically scales the drawn words by the size of their frequency value. From here you can do a lot with your word cloud including change the scale, associate color to various values, and much more. You can read more about wordcloud here.
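To make the frequency steps and a few of those options concrete, here is a small self-contained sketch. It uses a made-up toy matrix in place of as.matrix(tdm), so the counts are purely illustrative, and the names m_toy, v_toy, and d_toy are hypothetical. The plotting call is guarded so the rest runs even if the wordcloud package is not installed, and the scale and color values are arbitrary choices.

```r
# Toy document term matrix; the counts are made up for illustration
m_toy <- matrix(c(3, 0, 1,
                  2, 1, 0,
                  4, 0, 0),
                nrow = 3, byrow = TRUE,
                dimnames = list(c("doc1", "doc2", "doc3"),
                                c("chocolate", "game", "ad")))

# Total occurrences per term, highest first
v_toy <- sort(colSums(m_toy), decreasing = TRUE)

# One row per word with its frequency
d_toy <- data.frame(word = names(v_toy), freq = unname(v_toy),
                    stringsAsFactors = FALSE)
print(d_toy)

# Draw the cloud only if the wordcloud package is available
if (requireNamespace("wordcloud", quietly = TRUE)) {
  wordcloud::wordcloud(d_toy$word, d_toy$freq,
                       min.freq = 1,           # keep every toy term
                       max.words = 50,         # cap the number of words drawn
                       scale = c(3, 0.5),      # largest and smallest text size
                       random.order = FALSE,   # most frequent words in the center
                       colors = c("grey40", "steelblue", "firebrick"))
}
```

On the real corpus you would keep min.freq = 45 (or use max.words) as described above, since the toy values here are only sized for three terms.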

While word clouds are often used on the web for things like blogs and news sites, they have real value for data analysis beyond serving as visual indicators that help users find terms of interest. For example, if you look at the word cloud we generated, you will notice that one of the most popular terms mentioned in tweets is chocolate. A short inspection of our CSV document for the term chocolate shows a lot of people mentioning the word in a variety of contexts, but one of the most common is in relation to a specific Super Bowl ad. For example, here is a tweet:

| Alexalabesky | 41673.39 | Chocolate chips and peanut butter | 0 | 0 | 0 | Unknown | Unknown | Unknown | Unknown | Unknown |

This appeared after the airing of this advertisement from Butterfinger. So even with this simple R code we can draw real meaning from social media, in this case a measurable signal of an advertisement's impact during the Super Bowl.
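The manual inspection of the CSV for a term can also be done in R. The sketch below is an assumption about how that might look: the tweets data frame, its user and text columns, and two of the three rows are hypothetical stand-ins, not the real data set. Only the first tweet text is taken from the example above.

```r
# Hypothetical stand-in for the tweet CSV; column names are assumptions
tweets <- data.frame(
  user = c("user_a", "user_b", "user_c"),
  text = c("Chocolate chips and peanut butter",  # text from the example tweet
           "What a game tonight",                # made-up row
           "that chocolate ad was great"),       # made-up row
  stringsAsFactors = FALSE
)

# Case-insensitive match on the term of interest
hits <- grepl("chocolate", tweets$text, ignore.case = TRUE)

# Tweets that mention the term, and how common they are
chocolate_tweets <- tweets[hits, ]
print(chocolate_tweets)
print(sum(hits))    # number of matching tweets
print(mean(hits))   # share of all tweets that match
```

With the real file you would load the data with read.csv() and point grepl() at whichever column holds the tweet text.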

Summary

In this post we looked at a simple use case showing how we can derive real meaning and value from a visualization, by seeing how a simple word cloud can help you understand the impact of an advertisement.

About the author

Robi Sen, CSO at Department 13, is an experienced inventor, serial entrepreneur, and futurist whose dynamic twenty-plus year career in technology, engineering, and research has led him to work on cutting edge projects for DARPA, TSWG, SOCOM, RRTO, NASA, DOE, and the DOD. Robi also has extensive experience in the commercial space, including the co-creation of several successful start-up companies. He has worked with companies such as UnderArmour, Sony, CISCO, IBM, and many others to help build out new products and services. Robi specializes in bringing his unique vision and thought process to difficult and complex problems allowing companies and organizations to find innovative solutions that they can rapidly operationalize or go to market with.
