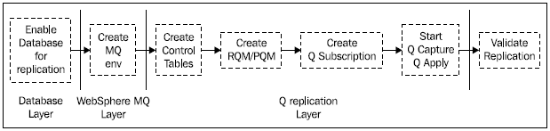

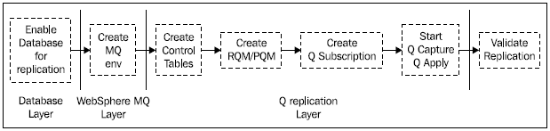

The individual stages for the different layers are shown in the following diagram:

The DB2 database layer

The first layer is the DB2 database layer, which involves the following tasks:

- For unidirectional replication and all replication scenarios that use unidirectional replication as the base, we need to enable the source database for archive logging (but not the target database). For multi-directional replication, all the source and target databases need to be enabled for archive logging.

- We need to identify which tables we want to replicate. One of the steps is to set the DATA CAPTURE CHANGES flag for each source table, which will be done automatically when the Q subscription is created. Setting this flag affects the minimum point-in-time recovery value for the table space containing the table, which should be carefully noted if table space recoveries are performed.
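The two tasks above can be sketched as a small Python helper that builds the corresponding DB2 statements. This is only an illustration under stated assumptions: the database name `DB2A`, the table name `DB2ADMIN.T1`, and the archive location are hypothetical, and `LOGARCHMETH1` is shown as one common way to switch a database to archive logging. The `ALTER TABLE` statement is included only to show what the flag amounts to, since creating the Q subscription sets it for us.

```python
def db2_layer_commands(source_db: str, source_tables: list[str], archive_target: str) -> list[str]:
    """Build DB2 statements for the database-layer tasks of a unidirectional setup.

    All argument values are supplied by the caller; nothing here talks to DB2.
    """
    commands = [
        # Switch the source database to archive logging.
        # After this change DB2 typically places the database in backup-pending
        # state, so a full backup is needed before it can be used again.
        f"UPDATE DB CFG FOR {source_db} USING LOGARCHMETH1 {archive_target}",
    ]
    for table in source_tables:
        # Normally set automatically when the Q subscription is created.
        commands.append(f"ALTER TABLE {table} DATA CAPTURE CHANGES")
    return commands


# Hypothetical names, for illustration only.
for command in db2_layer_commands("DB2A", ["DB2ADMIN.T1"], "DISK:/db2/archlogs"):
    print(command)
```

For multi-directional replication the same helper would be called once per database, because every source and target database needs archive logging.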

Before moving on to the WebSphere MQ layer, let’s quickly look at the compatibility requirements for the database name, the table name, and the column names. We will also discuss whether or not we need unique indexes on the source and target tables.

Database/table/column name compatibility

In Q replication, the source and target database names and table names do not have to match on all systems. The database name is specified when the control tables are created. The source and target table names are specified in the Q subscription definition.

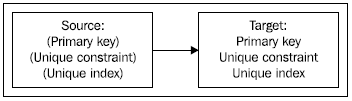

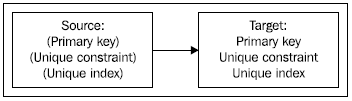

Now let’s move on to looking at whether or not we need unique indexes on the source and target tables. We do not need to be able to identify unique rows on the source table, but we do need to be able to do this on the target table. Therefore, the target table should have one of:

- Primary key

- Unique constraint

- Unique index

If none of these exist, then Q Apply will apply the updates using all columns.
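The fallback rule above can be illustrated with a short Python sketch. The `TargetTable` class and the `row_match_columns` function are hypothetical names invented for this example, and the order in which the three options are checked is an assumption; the text only says that one of them should exist.

```python
from dataclasses import dataclass, field


@dataclass
class TargetTable:
    """Hypothetical description of a target table's columns and uniqueness rules."""
    columns: list[str]
    primary_key: list[str] = field(default_factory=list)
    unique_constraints: list[list[str]] = field(default_factory=list)
    unique_indexes: list[list[str]] = field(default_factory=list)


def row_match_columns(table: TargetTable) -> list[str]:
    """Return the columns that would identify a row when applying an update."""
    if table.primary_key:
        return table.primary_key
    if table.unique_constraints:
        return table.unique_constraints[0]
    if table.unique_indexes:
        return table.unique_indexes[0]
    # No way to identify a unique row: fall back to comparing every column.
    return table.columns


keyed = TargetTable(columns=["ID", "NAME", "CITY"], primary_key=["ID"])
unkeyed = TargetTable(columns=["ID", "NAME", "CITY"])
print(row_match_columns(keyed))
print(row_match_columns(unkeyed))
```

The second call shows why a key matters: without one, every column has to take part in locating the row to update.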

However, the source table must have the same constraints as the target table, so any constraints that exist at the target must also exist at the source, which is shown in the following diagram:

The WebSphere MQ layer

This is the second layer we should install and test—if this layer does not work then Q replication will not work!

We can either install the WebSphere MQ Server code or the WebSphere MQ Client code. Throughout this book, we will be working with the WebSphere MQ Server code.

If we are replicating between two servers, then we need to install WebSphere MQ Server on both servers. If we are installing WebSphere MQ Server on UNIX, then during the installation process a user ID and group called mqm are created. If we as a DBA want to issue MQ commands, then we need to get our user ID added to the mqm group.

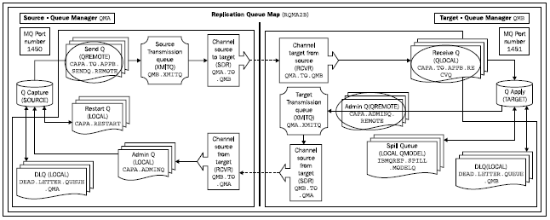

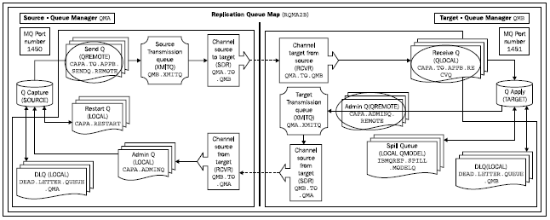

Assuming that WebSphere MQ Server has been successfully installed, we now need to create the Queue Managers and the queues that are needed for Q replication. This section also includes tests that we can perform to check that the MQ installation and setup is correct. The following diagram shows the MQ objects that need to be created for unidirectional replication:

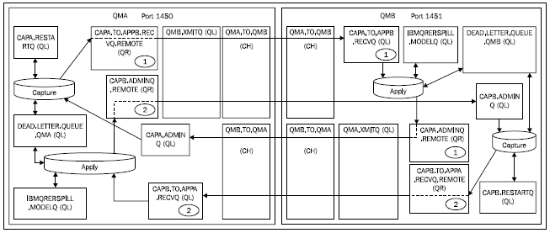

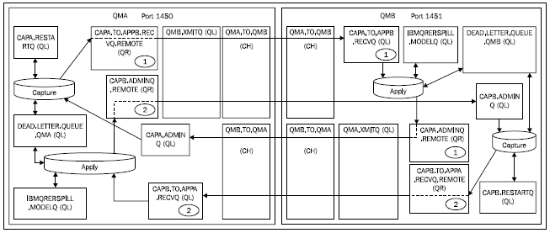

The following figure shows the MQ objects that need to be created for bidirectional replication:

There is a mixture of Local Queues (LOCAL/QL) and Remote Queues (QREMOTE/QR), in addition to Transmission Queues (XMITQ) and channels.

Once we have successfully completed the installation and testing of WebSphere MQ, we can move on to the next layer—the Q replication layer.

The Q replication layer

This is the third and final layer, which comprises the following steps:

- Create the replication control tables on the source and target servers.

- Create the transport definitions. What we mean by this is that we somehow need to tell Q replication what the source and target table names are, what rows/columns we want to replicate, and which Queue Managers and queues to use.

Some of the terms that are covered in this section are:

- Logical table

- Replication Queue Map

- Q subscription

- Subscription group (SUBGROUP)

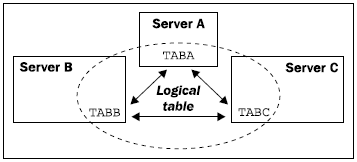

What is a logical table?

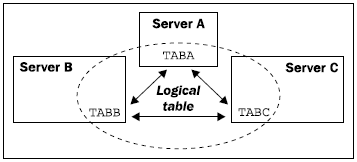

In Q replication, we have the concept of a logical table, which is the term used to refer to both the source and target tables in one statement. An example in a peer-to-peer three-way scenario is shown in the following diagram, where the logical table is made up of tables TABA, TABB, and TABC:

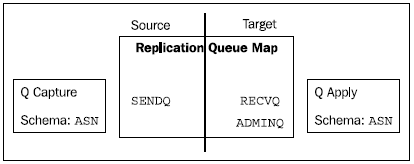

What is a Replication/Publication Queue Map?

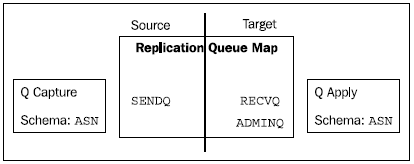

The first part of the transport definitions mentioned earlier is the Queue Map, which identifies the WebSphere MQ queues on both servers that are used to communicate between the servers. In Q replication, the Queue Map is called a Replication Queue Map, and in Event Publishing the Queue Map is called a Publication Queue Map.

Let’s first look at Replication Queue Maps (RQMs). RQMs are used by Q Capture and Q Apply to communicate: Q Capture sends Q Apply the rows to apply, and Q Apply sends administration messages back to Q Capture. Each RQM is made up of three queues: a queue on the local server called the Send Queue (SENDQ), and two queues on the remote server, a Receive Queue (RECVQ) and an Administration Queue (ADMINQ), as shown in the preceding figures showing the different queues. An RQM can contain only one each of SENDQ, RECVQ, and ADMINQ.

The SENDQ is the queue that Q Capture uses to send source data and informational messages.

The RECVQ is the queue that Q Apply reads for transactions to apply to the target table(s).

The ADMINQ is the queue that Q Apply uses to send control messages back to Q Capture.

So using the queues in the first “Queues” figure, the Replication Queue Map definition would be:

- Send Queue (SENDQ): CAPA.TO.APPB.SENDQ.REMOTE on Source

- Receive Queue (RECVQ): CAPA.TO.APPB.RECVQ on Target

- Administration Queue (ADMINQ): CAPA.ADMINQ.REMOTE on Target

Now let’s look at Publication Queue Maps (PQMs). PQMs are used in Event Publishing and are similar to RQMs, in that they define the WebSphere MQ queues needed to transmit messages between two servers. The big difference is that Event Publishing has no Q Apply component, so the definition of a PQM is made up of only a Send Queue.
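To make the difference between the two kinds of Queue Map concrete, here is a minimal Python sketch that models them as plain data classes. The class names are invented for this illustration and are not part of any replication API. The RQM queue names are the ones listed above; the PQM queue name is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReplicationQueueMap:
    """Q replication: exactly one SENDQ, one RECVQ, and one ADMINQ."""
    sendq: str   # Q Capture sends source data and informational messages here
    recvq: str   # Q Apply reads the transactions to apply from here
    adminq: str  # Q Apply sends control messages back to Q Capture through this


@dataclass(frozen=True)
class PublicationQueueMap:
    """Event Publishing: there is no Q Apply, so only a Send Queue is defined."""
    sendq: str


rqm = ReplicationQueueMap(
    sendq="CAPA.TO.APPB.SENDQ.REMOTE",
    recvq="CAPA.TO.APPB.RECVQ",
    adminq="CAPA.ADMINQ.REMOTE",
)
pqm = PublicationQueueMap(sendq="CAPA.EP.SENDQ")  # hypothetical queue name

print(rqm)
print(pqm)
```

Because each class has a fixed set of fields, it is not possible to give an RQM a second Send Queue, which mirrors the rule that an RQM holds only one of each queue.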

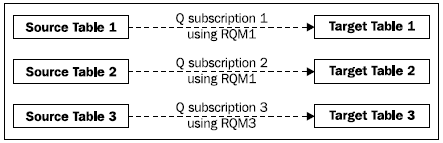

What is a Q subscription?

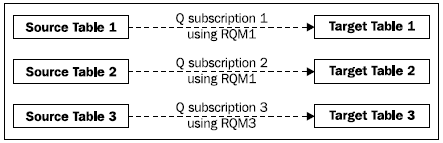

The second part of the transport definitions is a definition called a Q subscription, which defines a single source/target combination and which Replication Queue Map to use for this combination. We set up one Q subscription for each source/target combination.

Each Q subscription needs a Replication Queue Map, so we need to make sure we have one defined before trying to create a Q subscription. Note that if we are using the Replication Center, then we can choose to create a Q subscription even though an RQM does not exist. The wizard will walk us through creating the RQM at the point at which it is needed.

The structure of a Q subscription is made up of a source and target section, and we have to specify:

- The Replication Queue Map

- The source and target table

- The type of target table

- The type of conflict detection and action to be used

- The type of initial load, if any, that should be performed

If we define a Q subscription for unidirectional replication, then we can choose the name of the Q subscription—for any other type of replication we cannot.

Q replication does not have the concept of a subscription set as there is in SQL Replication, where the subscription set holds all the tables which are related using referential integrity.

In Q replication, we have to ensure that all the tables that are related through referential integrity use the same Replication Queue Map, which will enable Q Apply to apply the changes to the target tables in the correct sequence.
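The rule above lends itself to a simple check. The following Python sketch is a hypothetical helper, not part of Q replication: it assumes one Q subscription per source table and that we supply the referential integrity relationships ourselves as (parent, child) pairs. The table names, subscription names, and queue map names are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QSubscription:
    """Hypothetical, simplified view of a Q subscription."""
    name: str
    source_table: str
    target_table: str
    queue_map: str


def ri_queue_map_violations(
    subs: list[QSubscription], ri_pairs: list[tuple[str, str]]
) -> list[tuple[str, str]]:
    """Return the (parent, child) pairs whose Q subscriptions use different queue maps."""
    map_by_table = {sub.source_table: sub.queue_map for sub in subs}
    violations = []
    for parent, child in ri_pairs:
        parent_map = map_by_table.get(parent)
        child_map = map_by_table.get(child)
        # Only compare tables that are actually being replicated.
        if parent_map is not None and child_map is not None and parent_map != child_map:
            violations.append((parent, child))
    return violations


subs = [
    QSubscription("SUB1", "ORDERS", "ORDERS", "RQM1"),
    QSubscription("SUB2", "ORDER_ITEMS", "ORDER_ITEMS", "RQM1"),
    QSubscription("SUB3", "AUDIT_LOG", "AUDIT_LOG", "RQM3"),
]
print(ri_queue_map_violations(subs, [("ORDERS", "ORDER_ITEMS")]))
```

Here the two related tables share RQM1, so no violations are reported; moving `ORDER_ITEMS` to a different queue map would flag the pair.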

In the following diagram, Q subscription 1 uses RQM1, Q subscription 2 also uses RQM1, and Q subscription 3 uses RQM3:

What is a subscription group?

A subscription group is the name for a collection of Q subscriptions that are involved in multi-directional replication, and is set using the SET SUBGROUP command.

Q subscription activation

In unidirectional, bidirectional, and peer-to-peer two-way replication, when Q Capture and Q Apply start, then the Q subscription can be automatically activated (if that option was specified). For peer-to-peer three-way replication and higher, when Q Capture and Q Apply are started, only a subset of the Q subscriptions of the subscription group starts automatically, so we need to manually start the remaining Q subscriptions.
