AI Perception is a system within Unreal Engine 4 that allows sources to register their senses to create stimuli. Other listeners are then periodically updated as those sense stimuli are created within the system. This works wonders for creating a reusable system that can react to an array of customizable sensors.

In this tutorial, we will explore different components available within Unreal Engine 4 to enable artificial intelligence sensing within our games.

We will do this by taking advantage of a system within Unreal Engine called AI Perception components. These components can be customized and even scripted to introduce new behavior by extending the current sensing interface.

This tutorial is an excerpt taken from the book ‘Unreal Engine 4 AI Programming Essentials’ written by Peter L. Newton and Jie Feng.

Let’s now have a look at AI sensing.

Implementing AI sensing in Unreal Engine 4

Let’s start by bringing up Unreal Engine 4 and opening the New Project window. Then, perform the following steps:

- First, name our new project AI Sense and click Create Project. After it finishes loading, we want to start by creating a new AIController that will be responsible for sending our AI the appropriate instructions.

- Let’s navigate to the Blueprint folder and create a new AIController class, naming it EnemyPatrol.

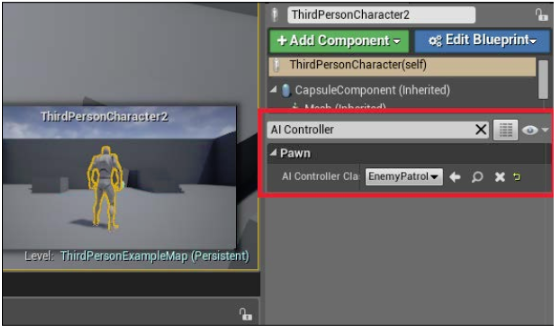

- Now, to assign EnemyPatrol, we need to place a pawn into the world and then assign the controller to it.

- After placing the pawn, click on the Details tab within the editor. Next, search for AI Controller Class. By default, it is set to the parent class, AIController, but we want it to be EnemyPatrol.

- Next, we will create a new PlayerController named PlayerSense.

- Then, we need to add AI Perception components to the actors that should see or be seen. Let’s open the PlayerSense controller first and add the necessary components.

Building AI Perception components

There are two components currently available within the Unreal Engine framework. The first is the AI Perception component, which listens for perceived stimuli (sight, hearing, and so on). The other is the AIPerceptionStimuliSource component, which is used to easily register the pawn as a source of stimuli, allowing it to be detected by other AI Perception components. This comes in handy, particularly in our case. Now, follow these steps:

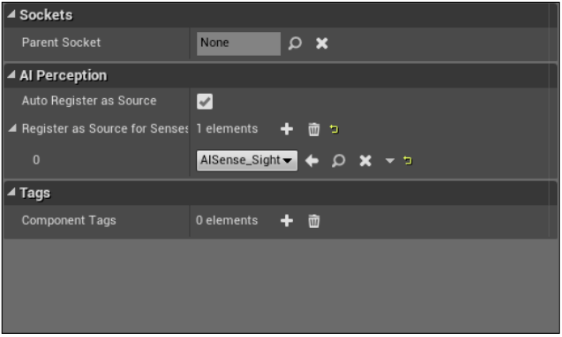

- With PlayerSense open, let’s add a new component called AIPerceptionStimuliSource. Then, under the Details tab, enable Auto Register as Source.

- Next, we want to choose the senses this component is a source for. Under Register as Source for Senses, there is an AISense array.

- Populate this array with the AISense_Sight class so that the pawn can be detected by sight by other AI Perception components. You will note that there are also other senses to choose from, for example, AISense_Hearing, AISense_Touch, and so on.

The complete settings are shown in the following screenshot:

This step was straightforward compared with what comes next. It allows our player pawn to be detected by enemy AI whenever we get within their sense’s configured range.
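To make the source/listener split concrete, here is a minimal sketch in plain C++ with no engine headers. It models only the bookkeeping described above. A source registers itself for a set of senses, and the perception system can then report which sources are perceivable through a given sense. `Sense`, `StimuliSource`, and `PerceptionSystem` are hypothetical names chosen for illustration. They are not Unreal's `UAIPerceptionStimuliSourceComponent` API.

```cpp
#include <cassert>
#include <set>
#include <string>
#include <vector>

// Hypothetical stand-ins for sense classes such as AISense_Sight (not engine types).
enum class Sense { Sight, Hearing, Touch };

// Models an AIPerceptionStimuliSource: an actor registered as a source
// for the senses listed in its "Register as Source for Senses" array.
struct StimuliSource {
    std::string name;
    std::set<Sense> registeredSenses;
    bool autoRegister = true;  // models "Auto Register as Source"
};

// Models the perception system's bookkeeping: which sources exist,
// and which of them can be perceived through a given sense.
class PerceptionSystem {
public:
    void Register(const StimuliSource& source) {
        // Only sources flagged for auto-registration are tracked in this sketch.
        if (source.autoRegister) {
            sources_.push_back(source);
        }
    }

    // Returns the names of all registered sources that emit stimuli for 'sense'.
    std::vector<std::string> SourcesFor(Sense sense) const {
        std::vector<std::string> result;
        for (const StimuliSource& source : sources_) {
            if (source.registeredSenses.count(sense) > 0) {
                result.push_back(source.name);
            }
        }
        return result;
    }

private:
    std::vector<StimuliSource> sources_;
};
```

For example, registering a player pawn with `{Sense::Sight}` makes it appear in `SourcesFor(Sense::Sight)` but not in `SourcesFor(Sense::Hearing)`. This is the same effect as adding only AISense_Sight to the array in the editor.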

Next, let’s open our EnemyPatrol class and add the other AI Perception component to our AI. This component is called AIPerception and contains many other configurations, allowing you to customize and tailor the AI for different scenarios:

- Clicking on the AI Perception component, you will notice that under the AI section, everything is grayed out. This is because the configurations are specific to each sense. The same applies if you create your own AI Sense classes.

- Let’s focus on two sections within this component: the first is the AI Perception settings, and the other is the event provided with this component:

- The AI Perception section should look similar to the same section on AIPerceptionStimuliSource. The differences are that you have to register your senses here, and you can also specify a dominant sense. The dominant sense takes precedence over other senses when it comes to determining a sensed actor’s location.

- Let’s look at the Senses configuration and add a new element. This will populate the array with a new sense configuration, which you can then modify.

- For now, let’s select the AI Sight configuration and leave the default values as they are. In the game, we are able to visualize these configurations, giving us more control over our senses.

- There is another configuration that allows you to specify affiliation, but at the time of writing, these options aren’t fully available.

- Even so, when you expand Detection by Affiliation, you must select Detect Neutrals to detect any pawn registered as a sight stimuli source.

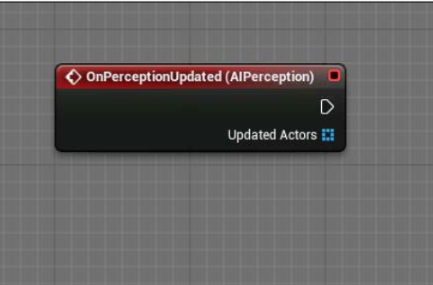
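The following plain C++ sketch shows why Detect Neutrals matters. A sight check rejects a stimulus whose affiliation is not enabled, even if it is well within range. `SightConfig` loosely mirrors the sight radius and Detection by Affiliation flags of a sight sense configuration. All names here are hypothetical. The radius value is purely illustrative. Line-of-sight traces and the peripheral vision angle are deliberately left out.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical affiliation categories, mirroring "Detection by Affiliation".
enum class Affiliation { Enemy, Neutral, Friendly };

// Simplified stand-in for a sight sense configuration (not the engine class).
struct SightConfig {
    float sightRadius = 3000.0f;  // illustrative value only
    bool detectEnemies = false;
    bool detectNeutrals = false;
    bool detectFriendlies = false;
};

struct Vec2 {
    float x;
    float y;
};

// Returns true if a listener at 'listener' would accept a sight stimulus from
// a target at 'target'. Line of sight and vision angle are ignored here.
bool CanSee(const SightConfig& config, Vec2 listener, Vec2 target, Affiliation affiliation) {
    const bool affiliationAllowed =
        (affiliation == Affiliation::Enemy && config.detectEnemies) ||
        (affiliation == Affiliation::Neutral && config.detectNeutrals) ||
        (affiliation == Affiliation::Friendly && config.detectFriendlies);
    if (!affiliationAllowed) {
        return false;  // filtered out before any distance check
    }
    const float dx = target.x - listener.x;
    const float dy = target.y - listener.y;
    return std::sqrt(dx * dx + dy * dy) <= config.sightRadius;
}
```

With every affiliation flag left off, `CanSee` returns false for a neutral pawn standing right next to the listener. Turning on `detectNeutrals` is what lets the range check decide.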

- Next, we need to be able to notify our AI of a new target. We will do this by using the event we saw as part of the AI Perception component. Navigating there, we can see an event called OnPerceptionUpdated.

This event fires when the sensory state changes, which makes tracking senses easy and straightforward. Let’s move on to the OnPerceptionUpdated event and perform the following:

- Click on OnPerceptionUpdated to create it within the EventGraph. Whenever this event is called, the senses have changed, and it returns the updated sensed actors, as shown in the following screenshot:

Now that we understand how we will obtain references to the sensed actors, we should create a way for our pawn to maintain different states, similar to what we would do in a Behavior Tree.
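The "fire only on change" behavior of OnPerceptionUpdated can be sketched in plain C++. The listener below is ticked periodically with the actors that currently pass its sense checks. It invokes its callback only when that set changes, and it passes along the actors that were gained or lost. `PerceptionListener` and `Tick` are hypothetical names. This models the idea, not the engine's delegate signature.

```cpp
#include <cassert>
#include <functional>
#include <set>
#include <string>
#include <utility>
#include <vector>

// Hypothetical listener modeling the idea behind OnPerceptionUpdated.
class PerceptionListener {
public:
    using Callback = std::function<void(const std::vector<std::string>&)>;

    explicit PerceptionListener(Callback onUpdated) : onUpdated_(std::move(onUpdated)) {}

    // Called periodically with the actors that currently pass the sense checks.
    void Tick(const std::set<std::string>& sensedNow) {
        std::vector<std::string> changed;
        for (const std::string& actor : sensedNow) {
            if (sensed_.count(actor) == 0) changed.push_back(actor);  // newly sensed
        }
        for (const std::string& actor : sensed_) {
            if (sensedNow.count(actor) == 0) changed.push_back(actor);  // no longer sensed
        }
        sensed_ = sensedNow;
        if (!changed.empty()) {
            onUpdated_(changed);  // fire only when the sensory state changed
        }
    }

    bool IsSensing(const std::string& actor) const { return sensed_.count(actor) > 0; }

private:
    Callback onUpdated_;
    std::set<std::string> sensed_;
};
```

A controller would pass a callback that checks `IsSensing` for each reported actor. It chases the player when the player is sensed and returns to its waypoints otherwise. That is the kind of state switch the next steps build toward.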

- Let’s first establish a home location for our pawn to run to when the player is no longer detected by the AI. In the same Blueprint folder, we will create a subclass of Target Point. Let’s name it Waypoint and place it at an appropriate location within the world.

- Now, we need to open this Waypoint subclass and create additional variables to maintain traversable routes. We can do this by storing the next waypoint within each waypoint, creating what programmers call a linked list. This lets the AI continuously move to the next available route after reaching the destination of its current route.

- With Waypoint open, add a new variable named NextWaypoint and set its type to the Waypoint class we created.

- Navigate back to our Content Browser.

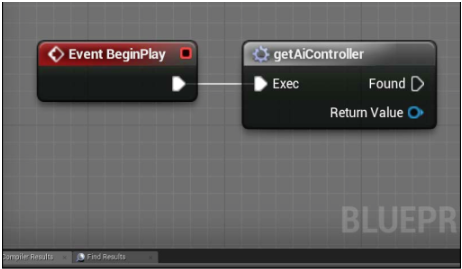
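The NextWaypoint variable turns the waypoints into a singly linked list. The following plain C++ sketch makes that structure explicit. `Waypoint` stands in for the Blueprint subclass of Target Point. `Advance` is a hypothetical helper that follows the links a given number of times. Pointing the last waypoint back at the first closes the loop, so a patrol never runs out of routes.

```cpp
#include <cassert>
#include <string>

// Stand-in for the Waypoint Blueprint (a Target Point subclass); not an engine type.
struct Waypoint {
    std::string name;
    Waypoint* nextWaypoint = nullptr;  // mirrors the NextWaypoint variable
};

// Follows NextWaypoint links from 'start' for up to 'steps' moves and returns
// where the patrol ends up. A waypoint with no successor keeps the AI in place.
const Waypoint* Advance(const Waypoint* start, int steps) {
    const Waypoint* current = start;
    for (int i = 0; i < steps && current != nullptr && current->nextWaypoint != nullptr; ++i) {
        current = current->nextWaypoint;
    }
    return current;
}
```

With three waypoints linked A to B to C and back to A, advancing three steps from A lands on A again. A single unlinked waypoint simply stays where it is.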

- Now, within our EnemyPatrol AIController, let’s focus on Event BeginPlay in the EventGraph. We have to grab a reference to the waypoint we created earlier and store it within our AIController.

- So, let’s create a new variable of type Waypoint and name it CurrentPoint.

- Now, on Event BeginPlay, the first thing we need is the AIController, which is the self-reference for this EventGraph because we are in the AIController class.

- So, let’s grab our self-reference and check whether it is valid. Safety first! Next, we will get our AIController from our self-reference. Then, again for safety, let’s check whether our AIController is valid.

- Next, we want to create a Get All Actors Of Class node and set its Actor Class to Waypoint.

- Now, we need to convert a few nodes into a macro because we will use them throughout the project. So, let’s select the nodes shown as follows and choose Collapse to Macro. Lastly, rename this macro getAIController. You can see the final nodes in the following screenshot:

- Next, we want our AI to grab a random new route and store it in a new variable. So, let’s first get the length of the array of actors returned. Then, subtract 1 from this length, which gives us the last valid index of our array.

- From there, pull from Subtract and create a Random Integer in Range node, leaving Min at 0 and connecting the subtraction result to Max. This node includes both bounds, whereas the plain Random Integer node returns values from 0 to Max - 1 and would never select the last waypoint. Then, from our array, create a Get node and plug the random value into its index to retrieve a waypoint.

- Next, drag from the Get node’s return value and promote it to a local variable. This automatically creates a variable of the type dragged from the pin. Rename it Current Point so it is clear why this variable exists.

- Then, from our getAIController macro, we want to assign the ReceiveMoveCompleted event. We do this so that when our AI successfully reaches its current route, we can update the information and tell it to move on to the next route.
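The BeginPlay and move-completed logic above can be summarized in a plain C++ sketch. `GetAllActorsOfClass` filters a list of actors down to waypoints. `BeginPlay` picks one at random, and `OnMoveCompleted` advances along NextWaypoint when a move succeeds. Every type and function here is a hypothetical stand-in for the Blueprint nodes, not Unreal's API. `std::uniform_int_distribution` is inclusive on both ends, so its upper bound is length - 1, which matches the subtraction step. If you use Blueprint's plain Random Integer node instead, our understanding is that it returns values from 0 to Max - 1, so you would pass it the length itself.

```cpp
#include <cassert>
#include <cstddef>
#include <random>
#include <string>
#include <utility>
#include <vector>

// Minimal stand-ins for engine types; not Unreal's classes.
struct Actor {
    virtual ~Actor() = default;  // polymorphic so dynamic_cast works
};

struct Waypoint : Actor {
    explicit Waypoint(std::string n) : name(std::move(n)) {}
    std::string name;
    Waypoint* nextWaypoint = nullptr;  // mirrors the NextWaypoint variable
};

// Plays the role of Get All Actors Of Class: keeps only actors of type T.
template <typename T>
std::vector<T*> GetAllActorsOfClass(const std::vector<Actor*>& world) {
    std::vector<T*> result;
    for (Actor* actor : world) {
        if (T* typed = dynamic_cast<T*>(actor)) {  // null or wrong type is skipped
            result.push_back(typed);
        }
    }
    return result;
}

// Hypothetical controller mirroring the EnemyPatrol steps.
class PatrolController {
public:
    explicit PatrolController(unsigned seed) : rng_(seed) {}

    // Mirrors Event BeginPlay: find all waypoints and pick one at random.
    void BeginPlay(const std::vector<Actor*>& world) {
        std::vector<Waypoint*> waypoints = GetAllActorsOfClass<Waypoint>(world);
        if (waypoints.empty()) {
            return;  // nothing to patrol; CurrentPoint stays null
        }
        // Length - 1 is the last valid index; both bounds are inclusive here.
        std::uniform_int_distribution<std::size_t> pick(0, waypoints.size() - 1);
        currentPoint_ = waypoints[pick(rng_)];
    }

    // Mirrors the ReceiveMoveCompleted handler: on success, move to the next route.
    void OnMoveCompleted(bool success) {
        if (success && currentPoint_ != nullptr && currentPoint_->nextWaypoint != nullptr) {
            currentPoint_ = currentPoint_->nextWaypoint;
        }
    }

    const Waypoint* CurrentPoint() const { return currentPoint_; }

private:
    std::mt19937 rng_;
    Waypoint* currentPoint_ = nullptr;
};
```

The early return in `BeginPlay` plays the same defensive role as the validity checks in the Blueprint. A level with no waypoints leaves the controller idle instead of indexing an empty array.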

We learned how to implement AI sensing in Unreal Engine 4 using a system within the engine called AI Perception components. We also explored the different components within that system.

If you found this post useful, be sure to check out the book, ‘Unreal Engine 4 AI Programming Essentials’ for more concepts on AI sensing in Unreal Engine.
