TensorFlow 2 is a rich development ecosystem composed of two main parts: Training and Serving. Training consists of a set of libraries for dealing with datasets (tf.data), a set of libraries for building models, including high-level libraries (tf.Keras and Estimators), low-level libraries (tf.*), and a collection of pretrained models (tf.Hub). Training can happen on CPUs, GPUs, and TPUs via distribution strategies and the result can be saved using the appropriate libraries.

This article is an excerpt from the book, Deep Learning with TensorFlow 2 and Keras, Second Edition by Antonio Gulli, Amita Kapoor, and Sujit Pal. This book teaches deep learning techniques alongside TensorFlow (TF) and Keras. In this article, we’ll review the addition of the powerful new feature, distributed training, in TensorFlow 2.x.

One very useful addition to TensorFlow 2.x is the ability to train models on multiple GPUs, multiple machines, and TPUs with very few additional lines of code. tf.distribute.Strategy is the TensorFlow API used for this; it supports the tf.keras and tf.estimator APIs as well as eager execution. You can switch between GPUs, TPUs, and multiple machines just by changing the strategy instance. Strategies can be synchronous, where all workers train on different slices of the input data and apply their updates in lockstep (synchronous data-parallel computation), or asynchronous, where workers apply optimizer updates independently, without waiting for one another. All strategies require that data is loaded in batches via the tf.data.Dataset API.
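To make the difference between synchronous and asynchronous updates concrete, here is a minimal sketch in plain Python. It does not use TensorFlow; the toy data, the two "workers", and the function names (shard_gradient, train_sync, train_async) are assumptions made purely for illustration, and the asynchronous loop is only a simplified model of stale updates.

```python
# Toy problem: fit y = w * x (the true w is 2.0) on data split across two workers.
# Plain Python only; this imitates the update patterns, not TensorFlow itself.
SHARDS = [
    [(1.0, 2.0), (2.0, 4.0)],  # examples seen by hypothetical worker 0
    [(3.0, 6.0), (4.0, 8.0)],  # examples seen by hypothetical worker 1
]


def shard_gradient(w, shard):
    """Gradient of the mean squared error of y = w * x over one shard."""
    return sum(2.0 * (w * x - y) * x for x, y in shard) / len(shard)


def train_sync(shards, steps, lr):
    """Synchronous: all workers use the same weights; gradients are averaged."""
    w = 0.0
    for _ in range(steps):
        grads = [shard_gradient(w, shard) for shard in shards]
        w -= lr * sum(grads) / len(grads)  # one combined update per step
    return w


def train_async(shards, steps, lr):
    """Asynchronous (simplified): each worker applies its own update, and its
    gradient is computed from weights read before the other updates landed."""
    w = 0.0
    for _ in range(steps):
        stale_w = w  # every worker read the weights at the start of the round
        for shard in shards:
            w -= lr * shard_gradient(stale_w, shard)  # applied independently
    return w


w_sync = train_sync(SHARDS, steps=100, lr=0.01)
w_async = train_async(SHARDS, steps=100, lr=0.01)
print(w_sync, w_async)
```

On this small problem both loops approach w = 2.0. In practice, stale gradients can make asynchronous training noisier, while synchronous training has to wait for the slowest worker at each step.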

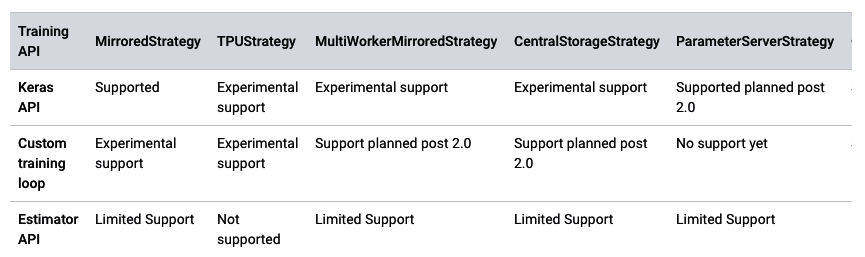

Note that the distributed training support is still experimental. A roadmap is given in Figure 1:

Figure 1: Distributed training support fr different strategies and APIs

Let’s discuss in detail all the different strategies reported in Figure 1.

Multiple GPUs

TensorFlow 2.x can utilize multiple GPUs. If we want to have synchronous distributed training on multiple GPUs on one machine, there are two things that we need to do: (1) We need to load the data in a way that will be distributed into the GPUs, and (2) We need to distribute some computations into the GPUs too:

- In order to load our data in a way that can be distributed into the GPUs, we simply need a tf.data.Dataset (which has already been discussed in the previous paragraphs). If we do not have a tf.data.Dataset but we have a normal tensor, then we can easily convert the latter into the former using tf.data.Dataset.from_tensor_slices(). This takes a tensor in memory and returns a source dataset whose elements are slices of the given tensor. In our toy example, we use NumPy to generate training data x and labels y, and we transform them into a tf.data.Dataset with tf.data.Dataset.from_tensor_slices(). Then we apply a shuffle to avoid bias in training across GPUs and generate batches of size SIZE_BATCHES:

```python
import tensorflow as tf
import numpy as np
from tensorflow import keras

N_TRAIN_EXAMPLES = 1024*1024
N_FEATURES = 10
SIZE_BATCHES = 256

# 10 random floats in the half-open interval [0.0, 1.0).
x = np.random.random((N_TRAIN_EXAMPLES, N_FEATURES))
y = np.random.randint(2, size=(N_TRAIN_EXAMPLES, 1))
x = tf.dtypes.cast(x, tf.float32)
print(x)

dataset = tf.data.Dataset.from_tensor_slices((x, y))
dataset = dataset.shuffle(buffer_size=N_TRAIN_EXAMPLES).batch(SIZE_BATCHES)
```

- In order to distribute some computations to GPUs, we instantiate a distribution = tf.distribute.MirroredStrategy() object, which supports synchronous distributed training on multiple GPUs on one machine. Then, we move the creation and compilation of the Keras model inside distribution.scope(). Note that each variable in the model is mirrored across all the replicas. Let’s see it in our toy example:

```python
# this is the distribution strategy
distribution = tf.distribute.MirroredStrategy()

# this piece of code is distributed to multiple GPUs
with distribution.scope():
    model = tf.keras.Sequential()
    model.add(tf.keras.layers.Dense(16, activation='relu', input_shape=(N_FEATURES,)))
    model.add(tf.keras.layers.Dense(1, activation='sigmoid'))
    optimizer = tf.keras.optimizers.SGD(0.2)
    model.compile(loss='binary_crossentropy', optimizer=optimizer)

model.summary()

# Optimize in the usual way but in reality you are using GPUs.
model.fit(dataset, epochs=5, steps_per_epoch=10)
```

Note that each batch of the given input is divided equally among the multiple GPUs. For instance, if using MirroredStrategy() with two GPUs, each batch of size 256 will be divided between the two GPUs, with each of them receiving 128 input examples for each step. In addition, note that each GPU computes gradients on the slice it receives, and the TensorFlow backend combines these per-GPU results on our behalf so that the mirrored copies of the variables stay in sync. In short, using multiple GPUs is very easy and requires minimal changes to the tf.keras code used for a single device.
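The batch split and the combination step can be illustrated with a small plain-Python sketch. It is not how MirroredStrategy is implemented; the helper names (per_replica_batch_size, mean_gradient, mirrored_gradient) and the toy linear model are assumptions chosen for illustration. The point it demonstrates is that, for equally sized slices, averaging the per-replica gradients gives the same result as computing the gradient on the whole batch.

```python
def per_replica_batch_size(global_batch_size, num_replicas):
    """Examples each replica receives per step, e.g. 256 / 2 = 128."""
    if global_batch_size % num_replicas != 0:
        # A choice made for this sketch, to keep the slices equal in size.
        raise ValueError("global batch size must be divisible by num_replicas")
    return global_batch_size // num_replicas


def mean_gradient(w, examples):
    """Gradient of the mean squared error of y = w * x over the examples."""
    return sum(2.0 * (w * x - y) * x for x, y in examples) / len(examples)


def mirrored_gradient(w, batch, num_replicas):
    """Split the batch across replicas, then average the per-replica gradients."""
    size = per_replica_batch_size(len(batch), num_replicas)
    slices = [batch[i * size:(i + 1) * size] for i in range(num_replicas)]
    replica_grads = [mean_gradient(w, s) for s in slices]
    return sum(replica_grads) / num_replicas


# Toy batch of 256 (x, y) pairs; the values are arbitrary.
batch = [(float(i % 7), 3.0 * (i % 7) + 1.0) for i in range(256)]

single_device = mean_gradient(0.5, batch)
two_replicas = mirrored_gradient(0.5, batch, num_replicas=2)
print(per_replica_batch_size(256, 2), single_device, two_replicas)
```

Because both paths produce the same gradient (up to floating-point rounding), the mirrored variables can receive an identical update on every replica.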

MultiWorkerMirroredStrategy

This strategy implements synchronous distributed training across multiple workers, each one with potentially multiple GPUs. As of September 2019, the strategy works only with Estimators and has experimental support for tf.keras. This strategy should be used if you are aiming at scaling beyond a single machine with high performance. Data must be loaded with tf.data.Dataset and shared across workers so that each worker can read a unique subset.
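One common way to give each worker a unique subset is to shard the input by worker index. The sketch below shows the idea in plain Python with a hypothetical shard helper; tf.data.Dataset offers a shard(num_shards, index) method that selects elements in the same every-nth fashion, but the code here is only an illustration and does not call TensorFlow.

```python
def shard(elements, num_shards, index):
    """Keep the elements whose position modulo num_shards equals index."""
    if not 0 <= index < num_shards:
        raise ValueError("index must be in the range [0, num_shards)")
    return [e for position, e in enumerate(elements) if position % num_shards == index]


# Ten toy records split across three hypothetical workers.
records = list(range(10))
num_workers = 3
worker_inputs = [shard(records, num_workers, i) for i in range(num_workers)]

for i, subset in enumerate(worker_inputs):
    print("worker", i, "reads", subset)
```

Each record lands on exactly one worker, so no example is processed twice within a pass over the data.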

TPUStrategy

This strategy implements synchronous distributed training on TPUs. TPUs are Google’s specialized ASIC chips, designed to significantly accelerate machine learning workloads, often more efficiently than GPUs. According to this public GitHub issue (https://github.com/tensorflow/tensorflow/issues/24412):

“the gist is that we intend to announce support for TPUStrategy alongside Tensorflow 2.1. Tensorflow 2.0 will work under limited use-cases but has many improvements (bug fixes, performance improvements) that we’re including in Tensorflow 2.1, so we don’t consider it ready yet.”

ParameterServerStrategy

This strategy implements either multi-GPU synchronous local training or asynchronous multi-machine training. For local training on one machine, the variables of the models are placed on the CPU and operations are replicated across all local GPUs. For multi-machine training, some machines are designated as workers and some as parameter servers with the variables of the model placed on parameter servers. Computation is replicated across all GPUs of all workers. Multiple workers can be set up with the environment variable TF_CONFIG as in the following example:

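```python
import json
import os

# Describe the cluster and this process's role in it.
os.environ["TF_CONFIG"] = json.dumps({
    "cluster": {
        "worker": ["host1:port", "host2:port", "host3:port"],
        "ps": ["host4:port", "host5:port"]
    },
    # This process is the worker at index 1, that is, host2:port.
    "task": {"type": "worker", "index": 1}
})
```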
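Every process in the cluster needs its own TF_CONFIG: the cluster part is the same everywhere, while the task part identifies the individual process. A small helper can generate the value for each task. The function make_tf_config below is hypothetical (it is not part of TensorFlow), and the host:port strings are the same placeholders used above, to be replaced with real addresses.

```python
import json


def make_tf_config(workers, parameter_servers, task_type, task_index):
    """Return the JSON string to store in TF_CONFIG for one task."""
    cluster = {"worker": list(workers), "ps": list(parameter_servers)}
    if task_type not in cluster:
        raise ValueError("task_type must be 'worker' or 'ps'")
    if not 0 <= task_index < len(cluster[task_type]):
        raise ValueError("task_index is out of range for this task type")
    return json.dumps({
        "cluster": cluster,
        "task": {"type": task_type, "index": task_index},
    })


# Placeholder addresses, as in the example above.
WORKERS = ["host1:port", "host2:port", "host3:port"]
PARAMETER_SERVERS = ["host4:port", "host5:port"]

# The value for the second worker matches the example above.
config_worker_1 = make_tf_config(WORKERS, PARAMETER_SERVERS, "worker", 1)
# The value for the first parameter server differs only in its "task" entry.
config_ps_0 = make_tf_config(WORKERS, PARAMETER_SERVERS, "ps", 0)
print(config_worker_1)
print(config_ps_0)
```

Each process would then set os.environ["TF_CONFIG"] to its own string before creating the strategy.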

In this article, we have seen how it is possible to train models using distributed GPUs, multiple machines, and TPUs in a very simple way with very few additional lines of code. Learn how to build machine and deep learning systems with the newly released TensorFlow 2 and Keras for the lab, production, and mobile devices with Deep Learning with TensorFlow 2 and Keras, Second Edition by Antonio Gulli, Amita Kapoor and Sujit Pal.

About the Authors

Antonio Gulli is a software executive and business leader with a passion for establishing and managing global technological talent, innovation, and execution. He is an expert in search engines, online services, machine learning, information retrieval, analytics, and cloud computing.

Amita Kapoor is an Associate Professor in the Department of Electronics, SRCASW, University of Delhi and has been actively teaching neural networks and artificial intelligence for the last 20 years. She is an active member of ACM, AAAI, IEEE, and INNS. She has co-authored two books.

Sujit Pal is a technology research director at Elsevier Labs, working on building intelligent systems around research content and metadata. His primary interests are information retrieval, ontologies, natural language processing, machine learning, and distributed processing. He is currently working on image classification and similarity using deep learning models. He writes about technology on his blog at Salmon Run.
