In this article by Aurobindo Sarkar, the author of the book Learning Spark SQL, we cover the following points to introduce you to using Spark SQL for exploratory data analysis:

- What is Exploratory Data Analysis (EDA)?

- Why is EDA important?

- Using Spark SQL for basic data analysis

- Visualizing data with Apache Zeppelin

Introducing Exploratory Data Analysis (EDA)

Exploratory Data Analysis (EDA), or Initial Data Analysis (IDA), is an approach to data analysis that attempts to maximize insight into data. This includes assessing the quality and structure of the data, calculating summary or descriptive statistics, and plotting appropriate graphs. It can uncover underlying structures and suggest how the data should be modeled. Furthermore, EDA helps us detect outliers, errors, and anomalies in our data, and deciding what to do about such data is often more important than other more sophisticated analysis. EDA enables us to test our underlying assumptions, discover clusters and other patterns in our data, and identify possible relationships between various variables. A careful EDA process is vital to understanding the data and is sometimes sufficient to reveal such poor data quality that a more sophisticated model-based analysis is not justified.

Typically, the graphical techniques used in EDA are simple, consisting of plotting the raw data and simple statistics. The focus is on the structures and models that are revealed by the data or that best fit the data. EDA techniques include scatter plots, box plots, histograms, probability plots, and so on. In most EDA techniques, we use all of the data, without making any underlying assumptions. The analyst builds intuition, or gets a “feel”, for the dataset as a result of such exploration. More specifically, the graphical techniques allow us to efficiently select and validate appropriate models, test our assumptions, identify relationships, select estimators, and detect outliers.

EDA involves a lot of trial and error, and several iterations. The best way is to start simple and then build in complexity as you go along. For example, you should plot simple histograms and scatter plots to quickly start developing an intuition for your data. There is a major trade-off in modeling between simple models and more accurate ones. Simple models may be much easier to interpret and understand. These models can get you to 90% accuracy very quickly, versus a more complex model that might take weeks or months to get you an additional 2% improvement.

Using Spark SQL for basic data analysis

Interactively processing and visualizing large data is challenging, as queries can take a long time to execute and the display has far fewer pixels than there are data points. Spark supports in-memory computations and a high degree of parallelism to achieve interactivity with large distributed data. In addition, Spark is capable of handling petabytes of data and provides a set of versatile programming interfaces and libraries. These include SQL, Scala, Python, Java, and R APIs, and libraries for distributed statistics and machine learning.

For data that fits on a single computer, there are many good tools available, such as R, MATLAB, and others. However, if the data does not fit on a single machine, if it is very complicated to get the data to that machine, or if a single computer cannot easily process the data, then this section offers some good tools and techniques for data exploration.

Here, we will do some basic data exploration exercises to understand a sample dataset. We will use a dataset that contains data related to direct marketing campaigns (phone calls) of a Portuguese banking institution. The marketing campaigns were based on phone calls to customers. We use the bank-additional-full.csv file that contains 41188 records and 20 input fields, ordered by date (from May 2008 to November 2010).

- As a first step, let’s define a schema and read in the CSV file to create a DataFrame. You can use :paste to paste in the initial set of statements in the Spark shell, as shown in the following figure:

- After the DataFrame is created, we first verify the number of records.

- We can also define a case class called Call for our input records, and then create a strongly-typed Dataset as follows:
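The three steps above could be sketched roughly as follows. This is a minimal sketch rather than the book's listing: the column names in the schema, the field names of `Call`, and the semicolon-delimited layout with a header row are assumptions, and the two sample rows are made up so that the snippet runs without the real file. To work with the actual data, point `path` at your copy of bank-additional-full.csv.

```scala
import java.nio.file.Files
import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.types._

// In spark-shell a session already exists and getOrCreate() simply returns it.
val spark = SparkSession.builder().master("local[*]").appName("eda-sketch").getOrCreate()
import spark.implicits._

// Assumed names and types for the 20 input fields plus the outcome field.
val schema = StructType(
  Seq(StructField("age", IntegerType)) ++
  Seq("job", "marital", "education", "credit_default", "housing", "loan",
      "contact", "month", "day_of_week").map(n => StructField(n, StringType)) ++
  Seq("duration", "campaign", "pdays", "previous").map(n => StructField(n, IntegerType)) ++
  Seq(StructField("poutcome", StringType)) ++
  Seq("emp_var_rate", "cons_price_idx", "cons_conf_idx", "euribor3m",
      "nr_employed").map(n => StructField(n, DoubleType)) ++
  Seq(StructField("deposit", StringType)))

// Field names must match the schema's column names for df.as[Call] to work.
case class Call(age: Int, job: String, marital: String, education: String,
  credit_default: String, housing: String, loan: String, contact: String,
  month: String, day_of_week: String, duration: Int, campaign: Int, pdays: Int,
  previous: Int, poutcome: String, emp_var_rate: Double, cons_price_idx: Double,
  cons_conf_idx: Double, euribor3m: Double, nr_employed: Double, deposit: String)

// Made-up rows in the assumed file layout, written to a temporary file.
val sampleLines = Seq(
  "age;job;marital;education;default;housing;loan;contact;month;day_of_week;duration;campaign;pdays;previous;poutcome;emp.var.rate;cons.price.idx;cons.conf.idx;euribor3m;nr.employed;y",
  "41;technician;married;university.degree;no;yes;no;cellular;may;mon;180;2;999;0;nonexistent;1.1;93.9;-36.4;4.8;5191.0;no",
  "33;services;single;high.school;no;no;no;telephone;jun;tue;95;1;999;0;nonexistent;1.4;94.4;-41.8;4.9;5228.1;yes")
val path = Files.createTempFile("bank-sample", ".csv")
Files.write(path, sampleLines.mkString("\n").getBytes("UTF-8"))

// Step 1: read the CSV file with the explicit schema to create a DataFrame.
val df = spark.read.schema(schema).option("sep", ";").option("header", "true").csv(path.toString)

// Step 2: verify the number of records (the article reports 41188 for the full file).
println(df.count())

// Step 3: create a strongly-typed Dataset from the DataFrame.
val ds = df.as[Call]
ds.show(2)
```

Supplying the schema up front avoids a separate pass over the file for type inference, and the typed `Dataset[Call]` lets later code refer to fields such as `_.age` with compile-time checking.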

In the next section, we will begin our data exploration by identifying missing data in our dataset.

Identifying Missing Data

Missing data can occur in datasets due to reasons ranging from negligence to a refusal on the part of respondents to provide a specific data point. Whatever the cause, missing data is a common occurrence in real-world datasets. Missing data can create problems in data analysis and sometimes lead to wrong decisions or conclusions. Hence, it is very important to identify missing data and devise effective strategies for dealing with it.

Here, we analyze the number of records with missing data fields in our sample dataset. In order to simulate missing data, we will edit our sample dataset by replacing fields containing “unknown” values with empty strings.

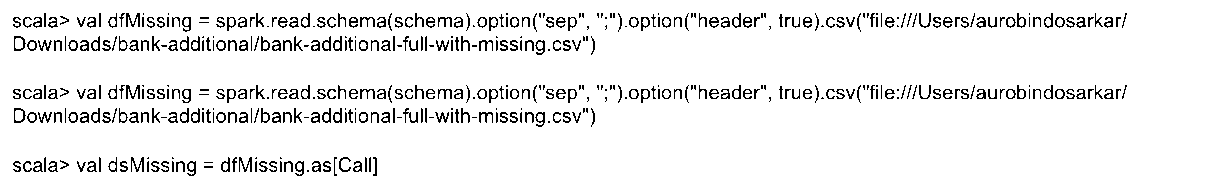

First, we create a DataFrame / Dataset from our edited file, as shown in the following figure:

The following two statements give us a count of rows with certain fields having missing data.
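A minimal sketch of this kind of check is given below. It assumes a small, made-up in-memory sample with hypothetical column names instead of the edited file, and the `isMissing` helper is introduced here for illustration only. Because empty fields in a CSV file may arrive as either nulls or empty strings depending on the reader options, the helper treats both as missing.

```scala
import org.apache.spark.sql.{Column, SparkSession}
import org.apache.spark.sql.functions.{col, when}

// In spark-shell a session already exists and getOrCreate() simply returns it.
val spark = SparkSession.builder().master("local[*]").appName("missing-data-sketch").getOrCreate()
import spark.implicits._

// Made-up sample; "unknown" marks a value that was not provided.
val raw = Seq(
  (34, "admin.", "married", "no"),
  (51, "unknown", "single", "yes"),
  (29, "technician", "unknown", "no"),
  (45, "unknown", "unknown", "no")
).toDF("age", "job", "marital", "deposit")

// Simulate missing data by replacing "unknown" with an empty string.
val edited = Seq("job", "marital").foldLeft(raw) { (df, c) =>
  df.withColumn(c, when(col(c) === "unknown", "").otherwise(col(c)))
}

// Hypothetical helper: a field counts as missing if it is null or empty.
def isMissing(c: String): Column = col(c).isNull || col(c) === ""

// Two counts of rows with certain fields missing.
val missingJob = edited.filter(isMissing("job")).count()
val missingJobAndMarital = edited.filter(isMissing("job") && isMissing("marital")).count()
println(s"job missing: $missingJob, job and marital missing: $missingJobAndMarital")
```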

Later, we will look at effective ways of dealing with missing data. Next, we compute some basic statistics for our sample dataset to improve our understanding of the data.

Computing basic statistics

Computing basic statistics is essential for a good preliminary understanding of our data. First, for convenience, we create a case class and a dataset containing a subset of fields from our original DataFrame. In our following example, we choose some of the numeric fields and the outcome field that is the “term deposit subscribed” field.

Next, we use describe to quickly compute the count, mean, standard deviation, min and max values for the numeric columns in our dataset.
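As a rough illustration, `describe` can be called with the names of the numeric columns of interest. The sample rows and column names below are assumptions made so that the snippet runs on its own.

```scala
import org.apache.spark.sql.SparkSession

// In spark-shell a session already exists and getOrCreate() simply returns it.
val spark = SparkSession.builder().master("local[*]").appName("describe-sketch").getOrCreate()
import spark.implicits._

// Made-up numeric sample with hypothetical column names.
val calls = Seq(
  (30, 100, 1),
  (40, 200, 2),
  (50, 300, 3)
).toDF("age", "duration", "campaign")

// One row per statistic (count, mean, stddev, min, max), one column per field.
// The statistics are returned as strings in a regular DataFrame.
val summary = calls.describe("age", "duration", "campaign")
summary.show()
```

Since the result is itself a DataFrame, it can be filtered or joined like any other, for example to pull out only the `mean` row.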

Further, we use the stat package to compute additional statistics like covariance, correlation, creating crosstabs, examining items that occur most frequently in data columns, and computing quantiles. These computations are shown in the following figure:
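The calls below sketch how these statistics could be requested through `DataFrame.stat`. The data is a made-up in-memory sample, and the column names, the 0.4 support threshold, and the chosen quantiles are illustrative assumptions.

```scala
import org.apache.spark.sql.SparkSession

// In spark-shell a session already exists and getOrCreate() simply returns it.
val spark = SparkSession.builder().master("local[*]").appName("stat-sketch").getOrCreate()
import spark.implicits._

// Made-up sample with hypothetical column names.
val calls = Seq(
  (20, 100, "single", "no"),
  (30, 200, "married", "no"),
  (40, 300, "married", "yes"),
  (50, 400, "single", "yes")
).toDF("age", "duration", "marital", "deposit")

// Sample covariance and Pearson correlation between two numeric columns.
val covariance = calls.stat.cov("age", "duration")
val correlation = calls.stat.corr("age", "duration")

// Contingency table of marital status against the outcome field.
val crosstab = calls.stat.crosstab("marital", "deposit")

// Values that may appear in at least 40% of the rows (can include false positives).
val frequent = calls.stat.freqItems(Seq("marital"), 0.4)

// Quartiles of age; a relative error of 0.0 requests exact quantiles.
val quartiles = calls.stat.approxQuantile("age", Array(0.25, 0.5, 0.75), 0.0)

println(s"cov=$covariance corr=$correlation quartiles=${quartiles.mkString(", ")}")
crosstab.show()
frequent.show()
```

On large data, a small non-zero relative error for `approxQuantile` is usually a better trade-off than exact quantiles, which are more expensive to compute.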

Next, we use the typed aggregation functions to summarize our data and understand it better. In the following statement, we group the records by whether a term deposit was subscribed and compute the total number of customers contacted, the average number of calls made per customer, the average duration of the calls, and the average number of previous calls made to such customers. The results are rounded to two decimal places.

Similarly, executing the following statement gives similar results by customers’ age.
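The two summaries could be sketched as shown below. Note that this sketch swaps in the untyped column functions (`count`, `avg`, `round`) with `groupBy` rather than the typed aggregators the article refers to; the sample rows, column names, and the `summarize` helper are assumptions for illustration.

```scala
import org.apache.spark.sql.{DataFrame, RelationalGroupedDataset, SparkSession}
import org.apache.spark.sql.functions.{avg, count, round}

// In spark-shell a session already exists and getOrCreate() simply returns it.
val spark = SparkSession.builder().master("local[*]").appName("aggregation-sketch").getOrCreate()
import spark.implicits._

// Made-up sample: age, call duration, calls in this campaign, previous calls, outcome.
val calls = Seq(
  (30, 100, 1, 0, "no"),
  (40, 200, 2, 1, "yes"),
  (50, 300, 3, 0, "no"),
  (60, 400, 2, 2, "yes")
).toDF("age", "duration", "campaign", "previous", "deposit")

// Hypothetical helper applying the same aggregates to any grouping.
def summarize(grouped: RelationalGroupedDataset): DataFrame = grouped.agg(
  count("age").as("total_customers"),
  round(avg("campaign"), 2).as("avg_calls_per_customer"),
  round(avg("duration"), 2).as("avg_call_duration"),
  round(avg("previous"), 2).as("avg_previous_calls"))

// Summary by whether a term deposit was subscribed.
val byOutcome = summarize(calls.groupBy("deposit"))
byOutcome.show()

// The same summary by customers' age.
val byAge = summarize(calls.groupBy("age")).orderBy("age")
byAge.show()
```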

After getting a better understanding of our data by computing basic statistics, we shift our focus to identifying outliers in our data.

Identifying data outliers

An outlier, or anomaly, is an observation that deviates significantly from the other observations in the dataset. Erroneous outliers are observations that are distorted due to possible errors in the data-collection process. These outliers may exert undue influence on the results of statistical analysis, so they should be identified using reliable detection methods prior to performing data analysis.

Many algorithms find outliers as a by-product of clustering. However, these techniques define outliers as points that do not lie in any cluster. The user has to model the data points using statistical distributions, and outliers are identified depending on how they appear in relation to the underlying model. The main problem with these approaches is that during EDA, the user typically does not have enough knowledge about the underlying data distribution.

EDA, using a modeling and visualizing approach, is a good way of achieving a deeper intuition of our data. Spark MLlib supports a large (and growing) set of distributed machine learning algorithms to make this task simpler. In the following example, we use the k-means clustering algorithm to compute two clusters in our data.
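A minimal sketch of such a clustering step, using the DataFrame-based `spark.ml` API, might look like the following. The feature columns, the made-up sample rows, and the fixed seed are assumptions; the article does not state which fields were clustered.

```scala
import org.apache.spark.ml.clustering.KMeans
import org.apache.spark.ml.feature.VectorAssembler
import org.apache.spark.sql.SparkSession

// In spark-shell a session already exists and getOrCreate() simply returns it.
val spark = SparkSession.builder().master("local[*]").appName("kmeans-sketch").getOrCreate()
import spark.implicits._

// Made-up sample with two visibly separated groups of calls.
val calls = Seq(
  (25.0, 100.0, 1.0), (30.0, 120.0, 2.0), (28.0, 90.0, 1.0),
  (55.0, 900.0, 6.0), (60.0, 950.0, 7.0), (58.0, 1000.0, 5.0)
).toDF("age", "duration", "campaign")

// k-means expects a single vector column, so assemble the numeric fields into one.
val assembler = new VectorAssembler()
  .setInputCols(Array("age", "duration", "campaign"))
  .setOutputCol("features")
val features = assembler.transform(calls)

// Fit a model with two clusters; the seed only makes repeated runs comparable.
val model = new KMeans().setK(2).setSeed(1L).fit(features)
model.clusterCenters.foreach(println)

// transform() adds a "prediction" column holding each row's cluster index.
val clustered = model.transform(features)
clustered.groupBy("prediction").count().show()
```

Because k-means relies on Euclidean distance, columns with large ranges (here, `duration`) dominate the result; scaling the features first is a common refinement.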

Other distributed algorithms useful for EDA include classification, regression, dimensionality reduction, correlation, and hypothesis testing.

Visualizing data with Apache Zeppelin

Typically, we will generate many graphs to verify our hunches about the data. A lot of these quick and dirty graphs used during EDA are ultimately discarded. Exploratory data visualization is critical for data analysis and modeling. However, we often skip exploratory visualization with large data because it is hard. For instance, browsers typically cannot handle millions of data points. Hence, we have to summarize, sample, or model our data before we can effectively visualize it.

Traditionally, BI tools provided extensive aggregation and pivoting features to visualize the data. However, these tools typically used nightly jobs to summarize large volumes of data. The summarized data was subsequently downloaded and visualized on the practitioners’ workstations. Spark can eliminate many of these batch jobs to support interactive data visualization.

Here, we will explore some basic data visualization techniques using Apache Zeppelin. Apache Zeppelin is a web-based tool that supports interactive data analysis and visualization. It supports several language interpreters and comes with built-in Spark integration. Hence, it is quick and easy to get started with exploratory data analysis using Apache Zeppelin.

- You can download Apache Zeppelin from https://zeppelin.apache.org/. Unzip the package on your hard drive and start Zeppelin using the following command:

Aurobindos-MacBook-Pro-2:zeppelin-0.6.2-bin-all aurobindosarkar$ bin/zeppelin-daemon.sh start

- You should see the following message:

Zeppelin start [ OK]

- You should be able to see the Zeppelin home page at:

- Click on Create new note link, and specify a path and name for your notebook, as shown in the following figure:

- In the next step, we paste the same code as in the beginning of this article to create a DataFrame for our sample dataset.

- We can execute typical DataFrame operations as shown in the following figure:

- Next, we create a table from our DataFrame and execute some SQL on it. The results of the SQL statements can be charted by clicking on the appropriate chart type. Here, we create bar charts as an illustrative example of summarizing and visualizing data:

- We can also plot a scatter plot, and read the coordinate values of each of the points plotted, as shown in the following two figures.

- Additionally, we can create a textbox that accepts input values to make the experience interactive. In the following figure, we create a textbox that can accept different values for the age parameter, and the bar chart is updated accordingly.

- Similarly, we can also create dropdown lists where the user can select the appropriate option, and the table of values or chart is updated automatically.
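The table-and-query steps above can be approximated outside the notebook with the sketch below, which registers a temporary view and runs a summarizing query through `spark.sql`. The view name, column names, sample rows, and the `maxAge` threshold are assumptions. In Zeppelin, the same query could instead be placed in a `%sql` paragraph, where writing the threshold as `${maxAge=40}` renders a textbox, and a form such as `${marital=single,single|married|divorced}` renders a dropdown list.

```scala
import org.apache.spark.sql.SparkSession

// In Zeppelin or spark-shell a session already exists and getOrCreate() returns it.
val spark = SparkSession.builder().master("local[*]").appName("zeppelin-sql-sketch").getOrCreate()
import spark.implicits._

// Made-up sample with hypothetical column names.
val calls = Seq(
  (25, "single"),
  (35, "married"),
  (38, "married"),
  (52, "divorced")
).toDF("age", "marital")

// Register the DataFrame as a table that SQL statements can refer to.
calls.createOrReplaceTempView("calls")

// Stand-in for the value a Zeppelin textbox would supply.
val maxAge = 40

// Summarize before charting: one row per marital status rather than one per call.
val byMarital = spark.sql(
  s"SELECT marital, count(*) AS total FROM calls WHERE age < $maxAge GROUP BY marital ORDER BY marital")
byMarital.show()
```

Aggregating in the query keeps the result small enough for the browser to chart, which is the point made earlier about summarizing data before visualizing it.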

Summary

In this article, we demonstrated using Spark SQL for exploring datasets, performing basic data quality checks, generating samples and pivot tables, and visualizing data with Apache Zeppelin.
