At NeurIPS 2017, a group of Stanford and Google researchers presented an intriguing study on how a neural network, CycleGAN, learns to cheat. The researchers trained CycleGAN to transform aerial images into street maps, and vice versa. They found that the network learned to hide information about the original image inside the generated one as a low-amplitude, high-frequency signal that is almost indistinguishable from noise. Using this hidden information, the generator can reproduce the original image and thus satisfy the cyclic consistency requirement.

What is CycleGAN?

CycleGAN is an algorithm for image-to-image translation. In this kind of task, a neural network typically learns the mapping between an input image and an output image from a training set of aligned image pairs. What sets CycleGAN apart from such approaches is that it does not require paired training data: it learns to translate images from a source domain X to a target domain Y from two unpaired collections of images.
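The cyclic consistency requirement mentioned in the introduction can be sketched in a few lines. This is a minimal illustration, not the authors' training code: `F` and `G` stand for the forward (X → Y) and backward (Y → X) mappings, images are flat lists of floats, and the toy mappings at the bottom are purely hypothetical.

```python
def l1_distance(a, b):
    """Mean absolute difference between two equally sized flat 'images'."""
    return sum(abs(p - q) for p, q in zip(a, b)) / len(a)


def cycle_consistency_loss(F, G, xs, ys):
    """Penalize round trips X -> Y -> X and Y -> X -> Y that do not return the input."""
    forward = sum(l1_distance(G(F(x)), x) for x in xs) / len(xs)
    backward = sum(l1_distance(F(G(y)), y) for y in ys) / len(ys)
    return forward + backward


# Hypothetical toy mappings: F doubles every pixel, G halves it, so they invert each other.
F = lambda x: [2.0 * v for v in x]
G = lambda y: [0.5 * v for v in y]

xs = [[0.1, 0.4, 0.9], [0.3, 0.3, 0.7]]  # stand-ins for aerial photographs
ys = [[0.2, 0.8, 1.8]]                   # stand-in for a map

loss = cycle_consistency_loss(F, G, xs, ys)

# A backward mapping that throws information away is penalized.
G_lossy = lambda y: [0.0 for _ in y]
lossy_loss = cycle_consistency_loss(F, G_lossy, xs, ys)
```

Note that the loss only checks the round trip. It says nothing about how the information needed for reconstruction is carried through the intermediate image, which is the loophole the study describes.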

How was CycleGAN hiding information?

For the experiment, the researchers trained CycleGAN on a maps dataset that consisted of 1,000 aerial photographs X and 1,000 maps Y. After training for 500 epochs, the model produced two mappings, F : X → Y and G : Y → X, that generated realistic samples from these image domains.

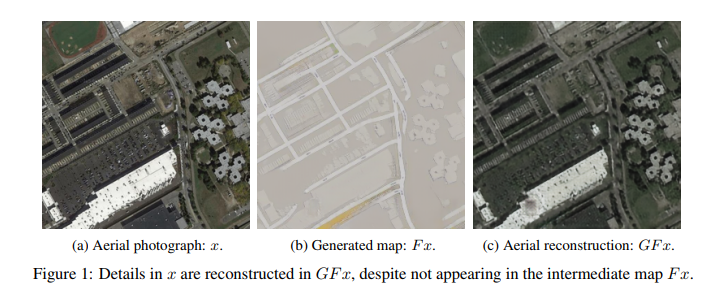

After training, the researchers applied the model to an aerial photograph that the network had not seen during training. The reconstructed image was nearly identical to the source image. On closer inspection, the researchers observed that various details present in both the original aerial photograph and the aerial reconstruction are not visible in the intermediate map, as shown in the following figure:

The network showed this behavior with nearly every aerial photograph, and also when it was trained on datasets other than maps. From this observation, the researchers concluded that CycleGAN learns an encoding scheme in which it hides information about the aerial photograph within the generated map.
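To make the idea of a low-amplitude hidden signal concrete, here is a toy sketch. It is not the encoding CycleGAN learns (that scheme is learned by the network and is not given in closed form); it assumes a hypothetical "map" made of a few flat colors spaced `LEVEL` apart and hides one detail value per pixel as an offset of at most `EPS`.

```python
LEVEL = 0.25  # assumed spacing between the few flat colors of the toy map
EPS = 0.01    # assumed amplitude of the hidden signal, tiny compared with LEVEL


def encode(map_pixels, details):
    """Hide one detail value in [-1, 1] per pixel as a small offset from the map color."""
    return [m + EPS * d for m, d in zip(map_pixels, details)]


def decode(stego_pixels):
    """Recover the hidden details from each pixel's offset to its nearest flat color."""
    details = []
    for s in stego_pixels:
        base = round(s / LEVEL) * LEVEL  # nearest clean map color
        details.append((s - base) / EPS)
    return details


clean_map = [0.25, 0.5, 0.5, 0.75]      # what a viewer perceives
photo_details = [0.5, -1.0, 0.25, 0.0]  # stand-in for aerial-photo detail

stego_map = encode(clean_map, photo_details)
recovered = decode(stego_map)
max_change = max(abs(s - m) for s, m in zip(stego_map, clean_map))
```

In this toy, `max_change` never exceeds `EPS`, so the map looks unchanged, yet `recovered` matches `photo_details` up to floating-point error. The scheme only works because the map uses so few distinct values, which leaves room for extra information.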

What are the implications of this information hiding?

The researchers highlighted that this encoding behavior can make the model vulnerable to adversarial attacks. The study showed that CycleGAN can be made to reconstruct any desired aerial image from a specially crafted map, which is found by running gradient descent from an initial source map. Attackers can exploit this to make one of the learned transformations produce an image of their choice by slightly perturbing any chosen source image. Also, if developers are not careful and do not take proper measures, their models may end up collecting data that qualifies as personal data under GDPR.
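The attack can be sketched on a toy model. The code below is an illustration under strong assumptions, not the procedure from the paper: `toy_generator` is a hypothetical stand-in for the learned map-to-photo transformation that simply amplifies each pixel's tiny offset from its flat color, and the gradient is written out by hand for this toy.

```python
LEVEL = 0.25  # assumed spacing between flat map colors
GAIN = 100.0  # assumed sensitivity of the toy generator to tiny offsets


def toy_generator(map_pixels):
    """Hypothetical stand-in for G: amplify each pixel's offset from its flat color."""
    return [GAIN * (p - round(p / LEVEL) * LEVEL) for p in map_pixels]


def craft_map(source_map, target_image, steps=60, lr=2.5e-5):
    """Gradient descent on a perturbation so that the generator output matches the target."""
    delta = [0.0] * len(source_map)
    for _ in range(steps):
        perturbed = [s + d for s, d in zip(source_map, delta)]
        output = toy_generator(perturbed)
        # For this toy, d/d(delta) of (o - t)^2 is 2 * GAIN * (o - t).
        delta = [d - lr * 2.0 * GAIN * (o - t)
                 for d, o, t in zip(delta, output, target_image)]
    return [s + d for s, d in zip(source_map, delta)]


source_map = [0.25, 0.5, 0.5, 0.75]    # innocent-looking starting map
target_image = [0.9, -0.3, 0.6, -0.8]  # image the attacker wants to obtain

crafted_map = craft_map(source_map, target_image)
produced = toy_generator(crafted_map)
largest_perturbation = max(abs(c - s) for c, s in zip(crafted_map, source_map))
```

Here the crafted map differs from the source map by less than 0.01 per pixel, while the generator output matches the attacker's target. A real attack would backpropagate through the trained network instead of using a hand-derived gradient.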

How can these attacks be avoided?

The vulnerability of this model has two causes: the cyclic consistency loss and the difference in entropy between the two domains. The cyclic consistency loss can be modified to prevent such attacks, or the entropy of one of the domains can be increased artificially by adding a hidden variable.

The paper, ‘CycleGAN, a Master of Steganography’, grabbed attention when it was posted on Reddit and sparked a discussion. Many Redditors suggested solutions, and one of them said, “Adding nearly imperceptible gaussian noise between the cycles should be enough to prevent the CycleGAN from hiding information encoded in imperceptible high-frequency components: it forces it to encode all semantic information in whatever is able to survive low-amplitude gaussian noise (i.e. the visible low-frequency components, as we want/expect).”
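The intuition behind this suggestion can be checked on a toy example. The sketch below is not a tested defense and not the Redditor's code: it reuses a hypothetical fixed encoding (details hidden as offsets of at most `EPS` around flat map colors) and only shows that such a hidden code does not survive Gaussian noise of similar amplitude. In actual training, the noise would be injected between the two generators so that the network has to learn a more robust, visible encoding.

```python
import random

LEVEL = 0.25  # assumed spacing between flat map colors
EPS = 0.01    # assumed amplitude of the hidden signal


def encode(map_pixels, details):
    """Hide one detail value in [-1, 1] per pixel as a small offset from the map color."""
    return [m + EPS * d for m, d in zip(map_pixels, details)]


def decode(stego_pixels):
    """Recover hidden details from each pixel's offset to its nearest flat color."""
    return [(s - round(s / LEVEL) * LEVEL) / EPS for s in stego_pixels]


def add_gaussian_noise(pixels, sigma, rng):
    """Noise applied to the intermediate image, between the two halves of the cycle."""
    return [p + rng.gauss(0.0, sigma) for p in pixels]


def mean_abs_error(a, b):
    return sum(abs(p - q) for p, q in zip(a, b)) / len(a)


rng = random.Random(0)  # fixed seed so the run is repeatable
clean_map = [rng.choice([0.25, 0.5, 0.75]) for _ in range(200)]
photo_details = [rng.uniform(-1.0, 1.0) for _ in range(200)]
stego_map = encode(clean_map, photo_details)

error_without_noise = mean_abs_error(decode(stego_map), photo_details)
noisy_map = add_gaussian_noise(stego_map, sigma=EPS, rng=rng)
error_with_noise = mean_abs_error(decode(noisy_map), photo_details)
```

Without noise, the hidden details are recovered almost exactly. With noise of standard deviation `EPS`, which changes the visible map very little, the recovered details are mostly wrong.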

A recent work aimed at reducing this steganographic behavior is Augmented CycleGAN, which learns many-to-many mappings between domains, unlike CycleGAN, which learns one-to-one mappings.

To learn more, check out the paper: CycleGAN, a Master of Steganography.
