In this article, we will introduce Azure Stream Analytics and show how to configure it. We will then look at some of the key advantages of the Stream Analytics platform, including how it enhances developer productivity and reduces the Total Cost of Ownership (TCO) of building and maintaining a scalable streaming solution, among other factors.

What is Azure Stream Analytics and how does it work?

Microsoft Azure Stream Analytics falls into the category of PaaS services, where customers don’t need to manage the underlying infrastructure. However, they are still responsible for managing the application that they build on top of the PaaS service and, more importantly, the customer data.

Azure Stream Analytics is a fully managed serverless PaaS service built for real-time analytics computations on streaming data. The service can consume data from a multitude of sources. Azure takes care of the hosting, scaling, and management of the underlying hardware and software ecosystem. The following are some examples of use cases for Azure Stream Analytics.

When we design a solution that involves streaming data, in almost every case Azure Stream Analytics will be part of a larger solution that the customer is trying to deploy. This can be real-time dashboarding for monitoring purposes, real-time monitoring of IT infrastructure equipment, preventive maintenance (auto-manufacturing, vending machines, and so on), or fraud detection. This means that the streaming solution needs to provide out-of-the-box integration with a whole plethora of services that help build a solution relatively quickly.

Let’s review a usage pattern for Azure Stream Analytics using a canonical model:

Devices and applications that generate data, shown on the left of the illustration, connect directly or through cloud gateways to your stream ingest sources. Azure Stream Analytics can pick up the data from these ingest sources, augment it with reference data, run the necessary analytics, gather insights, and push them downstream for action. You can trigger business processes, write the data to a database, or view the anomalies directly on a dashboard.

In this canonical pattern, a number of streaming ingest technologies are used; let’s review them:

- Event Hub: A global-scale event ingestion system, where one can publish events from millions of sensors and applications. It is designed so that as soon as an event comes in, a subscriber can pick it up within a few milliseconds. You can have one or more subscribers, depending on your business requirements. Typical use cases for an Event Hub are real-time financial fraud detection and social media sentiment analytics.

- IoT Hub: IoT Hub is very similar to Event Hub but takes the concept further in that it supports bidirectional actions. It not only ingests data from sensors in real time but can also send commands back to them. It also enables things like device management. Fundamental aspects such as security, a primary need for IoT, are built in.

- Azure Blob: Azure Blob is massively scalable object storage for unstructured data, accessible through HTTP or HTTPS. Blob storage can expose data publicly to the world or store application data privately.

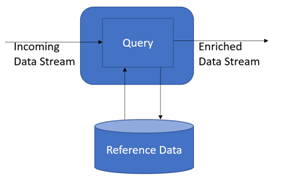

- Reference Data: This is auxiliary data that is either static or that changes slowly. Reference data can be used to enrich incoming data to perform correlation and lookups.

On the ingress side, with a few clicks, you can connect to Event Hub, IoT Hub, or Blob storage. The streaming data can be enriched with reference data from the Blob store.
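To make the idea of enrichment concrete, here is a minimal sketch in plain Python. It is not the Stream Analytics API: the `REFERENCE_DATA` table, the `enrich` function, and the field names are hypothetical and only illustrate a lookup of slowly changing reference data for each incoming event.

```python
from typing import Dict, Iterable, Iterator

# Hypothetical reference data: a slowly changing lookup keyed by device ID.
# In the pattern described above it would be loaded from Blob storage.
REFERENCE_DATA: Dict[str, Dict[str, str]] = {
    "dev-1": {"site": "Plant A", "model": "X100"},
    "dev-2": {"site": "Plant B", "model": "X200"},
}


def enrich(events: Iterable[dict], reference: Dict[str, Dict[str, str]]) -> Iterator[dict]:
    """Yield each event merged with its reference record, if one exists."""
    for event in events:
        extra = reference.get(event["device_id"], {})
        # Event fields win on key collisions, so stream values are never overwritten.
        yield {**extra, **event}


stream = [
    {"device_id": "dev-1", "temperature": 71.5},
    {"device_id": "dev-3", "temperature": 64.0},  # no reference record
]
enriched = list(enrich(stream, REFERENCE_DATA))
```

Events without a matching reference record pass through unchanged here; whether to keep or drop them is a design choice that depends on the solution.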

Data from the ingress process is consumed by the Azure Stream Analytics service, which can call machine learning (ML) for event scoring in real time. The data can be egressed to Power BI for live dashboarding, or pushed back to Event Hub, from where dashboards and reports can pick it up.

The following is a summary of the ingress, egress, and archiving options:

Ingress choices:

- Event Hub

- IoT Hub

- Blob storage

Egress choices:

Live dashboards:

- Power BI

- Event Hub

Driving workflows:

- Event Hubs

- Service Bus

Archiving and post analysis:

- Blob storage

- Document DB

- Data Lake

- SQL Server

- Table storage

- Azure Functions

One key point to note is that a number of customers push data from Stream Analytics processing (the egress point) to Event Hub and then feed it into their own custom dashboards, hosted as Azure websites. One can also drive workflows by pushing events to Azure Service Bus and Power BI.

For example, a customer can build an IoT support solution that detects anomalies in connected appliances and pushes the results into Azure Service Bus. A worker role can run as a daemon to pull the messages and create support tickets using the Dynamics CRM API. The tickets can then be archived for post analysis with Power BI. This solution eliminates the need for the customer to log a ticket; the system does it automatically, based on predefined anomaly thresholds. This is just one example of a real-time connected solution.
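The flow in this example can be sketched in a few lines of Python. This is only an illustration under stated assumptions: an in-memory `queue.Queue` stands in for Azure Service Bus, the `Ticket` class stands in for a record created through the Dynamics CRM API, and the threshold value is hypothetical.

```python
import queue
from dataclasses import dataclass
from typing import List

# Hypothetical threshold; the article only says that thresholds are predefined.
MAX_TEMPERATURE_C = 90.0


@dataclass
class Ticket:
    appliance_id: str
    reason: str


def detect_anomalies(readings: List[dict], bus: "queue.Queue[dict]") -> None:
    """Push a message onto the bus for every reading above the threshold."""
    for reading in readings:
        if reading["temperature_c"] > MAX_TEMPERATURE_C:
            bus.put(reading)


def drain_and_create_tickets(bus: "queue.Queue[dict]") -> List[Ticket]:
    """Worker step: pull every pending message and open one ticket for each."""
    tickets = []
    while True:
        try:
            message = bus.get_nowait()
        except queue.Empty:
            break
        reason = f"temperature {message['temperature_c']} C exceeds {MAX_TEMPERATURE_C} C"
        tickets.append(Ticket(message["appliance_id"], reason))
    return tickets


bus: "queue.Queue[dict]" = queue.Queue()
detect_anomalies(
    [
        {"appliance_id": "fridge-7", "temperature_c": 95.0},
        {"appliance_id": "oven-2", "temperature_c": 60.0},
    ],
    bus,
)
tickets = drain_and_create_tickets(bus)
```

Only the reading above the threshold produces a ticket, which mirrors the point that the customer never has to log one manually.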

There are a number of use cases that don’t even involve real-time alerts. You can also use Azure Stream Analytics to aggregate and filter data, store it in Blob storage, Azure Data Lake (ADL), Document DB, or SQL, and then run U-SQL with Azure Data Lake Analytics (ADLA), HDInsight, or even ML models over it for things like predictive maintenance.

Configuring Azure Stream Analytics

Azure Stream Analytics (ASA) is a fully managed, cost-effective real-time event processing engine. Stream Analytics makes it easy to set up real-time analytic computations on data streaming from devices, sensors, websites, social media, applications, infrastructure systems, and more.

The service can be set up with a few clicks in the Azure portal. Users author a Stream Analytics job by specifying the input source of the streaming data, the output sink for the results of the job, and a data transformation expressed in a SQL-like language. Jobs can be monitored, and you can adjust the scale and speed of a job in the Azure portal, from a few kilobytes to a gigabyte or more of events processed per second.
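The three parts of a job (input, transformation, output) can be pictured with a toy model in plain Python. The `StreamJob` class and `hot_readings` function below are hypothetical names used only for illustration; a real job is authored in the portal with a SQL-like query, not with this code.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, List, Optional


@dataclass
class StreamJob:
    """Toy stand-in for a job: one input, one transformation, one output."""
    source: Iterable[dict]
    transform: Callable[[dict], Optional[dict]]
    sink: List[dict] = field(default_factory=list)

    def run(self) -> None:
        for event in self.source:
            result = self.transform(event)
            if result is not None:  # None means the event was filtered out
                self.sink.append(result)


def hot_readings(event: dict) -> Optional[dict]:
    """Filter (temperature above 80) and project (keep two fields)."""
    if event["temperature"] > 80:
        return {"device_id": event["device_id"], "temperature": event["temperature"]}
    return None


job = StreamJob(
    source=[
        {"device_id": "dev-1", "temperature": 85, "humidity": 40},
        {"device_id": "dev-2", "temperature": 70, "humidity": 55},
    ],
    transform=hot_readings,
)
job.run()
```

After `job.run()`, `job.sink` holds only the projected reading from `dev-1`.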

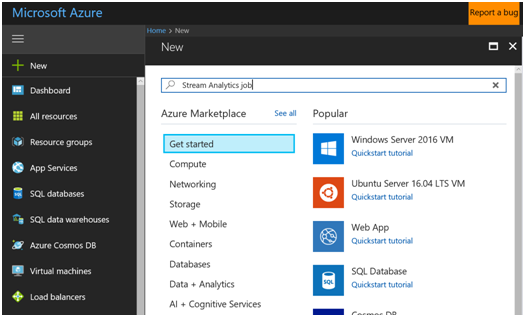

Let’s review how to configure Azure Stream Analytics step by step:

1. Log in to the Azure portal using your Azure credentials, click on New, and search for Stream Analytics job:

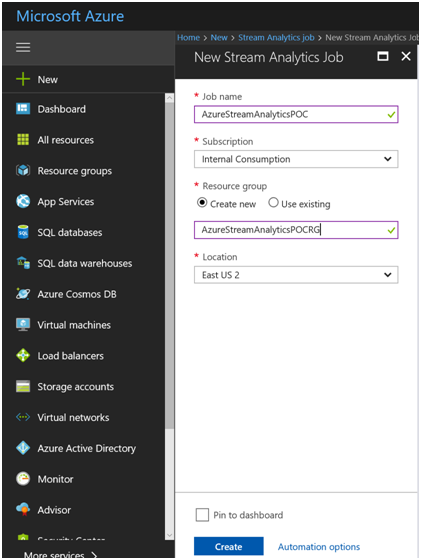

2. Click on Create to create an Azure Stream Analytics instance:

3. Provide a Job Name and Resource group name for the Azure Stream Analytics job deployment:

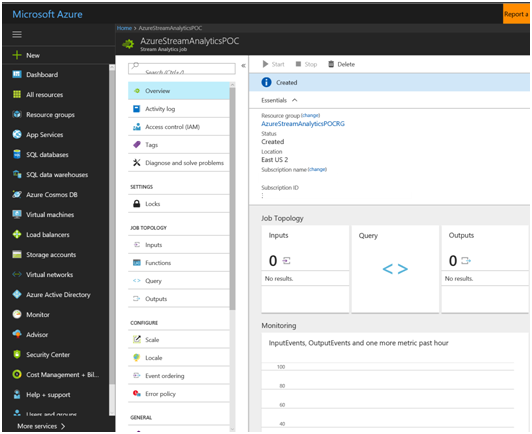

4. After a few minutes, the deployment will be complete:

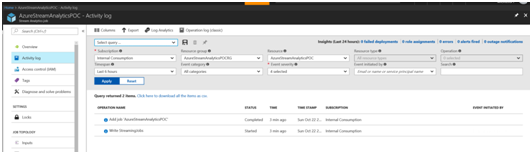

5. Review the following in the deployment–audit trail of the creation:

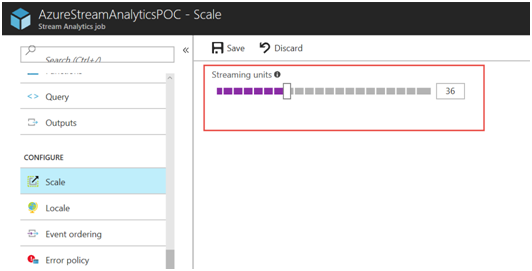

6. Ability stream up and down using a simple UI:

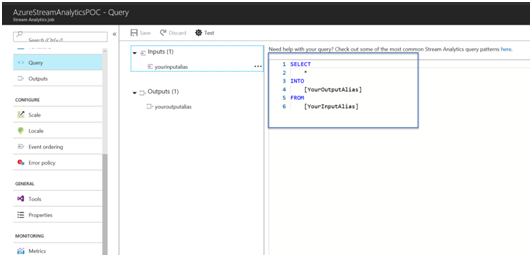

7. Build in the Query interface to run queries:

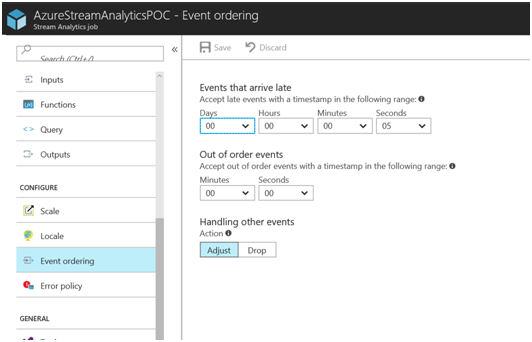

8. Run queries using a SQL-like interface, with the ability to accept late-arriving events through simple GUI-based configuration.
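As a rough illustration of what accepting late-arriving events can mean, the sketch below applies a tolerance to the gap between an event's own timestamp and its arrival time. The function name, the field names, and the "drop" and "adjust" actions are assumptions made for this example; the service's actual behavior is whatever is configured in the portal.

```python
from typing import Iterable, List


def apply_late_policy(events: Iterable[dict], tolerance_s: float, action: str = "drop") -> List[dict]:
    """Accept events whose delay is within the tolerance; drop or adjust the rest."""
    if action not in ("drop", "adjust"):
        raise ValueError("action must be 'drop' or 'adjust'")
    accepted = []
    for event in events:
        delay = event["arrival_time"] - event["event_time"]
        if delay <= tolerance_s:
            accepted.append(dict(event))
        elif action == "adjust":
            # Move the timestamp forward to the oldest time still tolerated.
            adjusted = dict(event)
            adjusted["event_time"] = event["arrival_time"] - tolerance_s
            accepted.append(adjusted)
        # With action == "drop", the late event is simply discarded.
    return accepted


sample = [
    {"id": 1, "event_time": 100.0, "arrival_time": 102.0},  # 2 s late
    {"id": 2, "event_time": 100.0, "arrival_time": 130.0},  # 30 s late
]
kept_when_dropping = apply_late_policy(sample, tolerance_s=5.0, action="drop")
kept_when_adjusting = apply_late_policy(sample, tolerance_s=5.0, action="adjust")
```

With a 5-second tolerance, the first event is accepted as is, while the second is either discarded or kept with its timestamp moved to 125.0.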

Key advantages of Azure Stream Analytics

Let’s quickly review how traditional streaming solutions are built; the core deployment starts with procuring and setting up the basic infrastructure necessary to host the streaming solution. Once this is done, we can then build the ingress and egress solution on top of the deployed infrastructure.

Once the core infrastructure is built, custom tools are used to build business intelligence (BI) or machine-learning integration. After the system goes into production, scaling at runtime has to be handled by capturing telemetry and building and configuring hardware and software resources as necessary. As business needs ramp up, so does the monitoring and troubleshooting effort.

Security

Azure Stream Analytics provides a number of built-in security mechanisms in areas such as authentication, authorization, auditing, segmentation, and data protection. Let’s quickly review them.

- Authentication support: Authentication in Azure Stream Analytics is handled at the portal level. Users should have a valid subscription ID and password to access the Azure Stream Analytics job.

- Authorization: During login, users provide their credentials (for example, user account name and password, smart card and PIN, Secure ID and PIN, and so on) to prove their Microsoft identity and retrieve their access token from the authentication server. Authorization then determines what that identity is allowed to access. Authorization is supported by Azure Stream Analytics; only authenticated and authorized users can access the Azure Stream Analytics job.

- Support for encryption: Data at rest is protected using client-side encryption and TDE (Transparent Data Encryption).

- Support for key management: Key management is supported through ingress and egress points.

Programmer productivity

One of the key features of Azure Stream Analytics is developer productivity, driven largely by a query language based on SQL constructs. It provides a wide array of functions for analytics on streaming data, from simple data manipulation functions to date and time, temporal, mathematical, string, and scaling functions, and much more. It provides two features natively out of the box, which we review in detail in the following sections:

- Declarative SQL constructs

- Built-in temporal semantics

Declarative SQL constructs

A simple-to-use UI is provided for constructing queries. The declarative SQL constructs offer the following feature set:

- Filters (Where)

- Projections (Select)

- Time-window and property-based aggregates (Group By)

- Time-shifted joins (specifying time bounds within which the joining events must occur)

- All combinations thereof
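Of the constructs listed above, the time-shifted join is probably the least familiar. The following plain-Python sketch shows the idea of pairing events from two streams only when they share a key and occur within a time bound of each other. The function and field names (`time_bounded_join`, `ad`, `ts`) are hypothetical, and the nested loop is chosen for clarity, not efficiency.

```python
from typing import List, Tuple


def time_bounded_join(left: List[dict], right: List[dict], key: str,
                      max_gap_s: float) -> List[Tuple[dict, dict]]:
    """Pair events that share `key` and whose timestamps are at most max_gap_s apart."""
    pairs = []
    for a in left:
        for b in right:
            if a[key] == b[key] and abs(a["ts"] - b["ts"]) <= max_gap_s:
                pairs.append((a, b))
    return pairs


impressions = [{"ad": "a1", "ts": 0}, {"ad": "a2", "ts": 5}]
clicks = [{"ad": "a1", "ts": 8}, {"ad": "a2", "ts": 50}]

# Only clicks that happen within 10 seconds of the impression are joined.
matched = time_bounded_join(impressions, clicks, key="ad", max_gap_s=10)
```

Here only the `a1` impression finds a click inside the time bound; the `a2` click arrives 45 seconds later and is not joined.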

The following is a summary of different constructs to manipulate streaming data:

Data manipulation: SELECT, FROM, WHERE, GROUP BY, HAVING, CASE WHEN THEN ELSE, INNER/LEFT OUTER JOIN, UNION, CROSS/OUTER APPLY, CAST, INTO, ORDER BY ASC, DESC

Date and time functions: DateName, DatePart, Day, Month, Year, DateDiff, DateTimeFromParts, DateAdd

Temporal functions: Lag, IsFirst, Last, CollectTop

Aggregate functions: SUM, COUNT, AVG, MIN, MAX, STDEV, STDEVP, VAR, VARP, TopOne

Mathematical functions: ABS, CEILING, EXP, FLOOR, POWER, SIGN, SQUARE, SQRT

String functions: Len, Concat, CharIndex, Substring, Lower, Upper, PatIndex

Scaling extensions: WITH, PARTITION BY, OVER

Geospatial: CreatePoint, CreatePolygon, CreateLineString, ST_DISTANCE, ST_WITHIN, ST_OVERLAPS, ST_INTERSECTS
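To give a feel for what a temporal function such as Lag offers, here is a small Python sketch that looks up the previous value for each device and computes the change. It is written in the spirit of Lag only: the `with_previous` helper is a hypothetical name, and the sketch ignores any time limit on how far back the lookup may reach.

```python
from typing import Dict, Iterable, Iterator, Optional, Tuple


def with_previous(events: Iterable[dict], key: str, field: str) -> Iterator[Tuple[dict, Optional[float]]]:
    """Yield each event together with the previous value of `field` for the same key."""
    last_seen: Dict[str, float] = {}
    for event in events:
        yield event, last_seen.get(event[key])
        last_seen[event[key]] = event[field]


readings = [
    {"device": "d1", "temp": 20.0},
    {"device": "d2", "temp": 30.0},
    {"device": "d1", "temp": 23.5},
    {"device": "d2", "temp": 29.0},
]

# Change in temperature since the previous reading from the same device.
deltas = [
    (event["device"], event["temp"] - previous)
    for event, previous in with_previous(readings, key="device", field="temp")
    if previous is not None
]
```

The first reading from each device has no predecessor, so it produces no delta.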

Built-in temporal semantics

Azure Stream Analytics provides prebuilt temporal semantics to query time-based information and merge streams with multiple timelines. Here is a list of temporal semantics:

- Application or ingest timestamp

- Windowing functions

- Policies for event ordering

- Policies to manage latencies between ingress sources

- Manage streams with multiple timelines

- Join multiple streams of temporal windows

- Join streaming data with data-at-rest
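Windowing is the easiest of these semantics to picture. The sketch below counts events in fixed, non-overlapping (tumbling) windows based on each event's application timestamp. The function name and the `ts` field are assumptions for illustration, and the sketch labels each window by its start and treats the start as inclusive, which may differ from the boundary convention the service uses.

```python
from collections import Counter
from typing import Dict, Iterable


def tumbling_window_counts(events: Iterable[dict], window_s: int) -> Dict[int, int]:
    """Count events per fixed, non-overlapping window, keyed by window start time."""
    counts: Counter = Counter()
    for event in events:
        # Integer division assigns every timestamp to exactly one window.
        window_start = (event["ts"] // window_s) * window_s
        counts[window_start] += 1
    return dict(counts)


events = [{"ts": t} for t in (1, 4, 9, 10, 12, 25)]
per_window = tumbling_window_counts(events, window_s=10)
```

Because every timestamp maps to exactly one window, no event is counted twice, which is the defining property of a tumbling window.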

Lowest total cost of ownership

Azure Stream Analytics is a fully managed PaaS service on Azure. There are no upfront costs, and no costs for setting up compute clusters and complex hardware wiring as there would be with an on-premises solution. It’s a simple job service with no cluster provisioning, and customers pay for what they use.

A key consideration is variable workloads. With Azure Stream Analytics, you do not need to design your system for peak throughput and can add more compute footprint as you go.

If you have scenarios where data comes in spurts, you do not want to design a system for peak usage and leave it underutilized the rest of the time. Let’s say you are building a traffic monitoring solution. Naturally, peaks are expected during the morning and evening rush hours. However, you would not want to design your system or investments to cater to these extremes. The cloud elasticity that Azure offers is a perfect fit here.
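A quick back-of-the-envelope calculation shows why this matters. The numbers below are entirely hypothetical (abstract "compute units" per hour, not Azure pricing): the traffic solution is assumed to need 10 units during six rush hours and 2 units otherwise.

```python
# Hypothetical hourly demand in abstract compute units (not Azure pricing).
RUSH_HOURS = (7, 8, 9, 17, 18, 19)
hourly_demand = [10 if hour in RUSH_HOURS else 2 for hour in range(24)]

# Provisioning for the peak means paying for the peak all day.
peak_provisioned_unit_hours = max(hourly_demand) * len(hourly_demand)

# Elastic provisioning pays only for what each hour actually needs.
elastic_unit_hours = sum(hourly_demand)

savings_fraction = 1 - elastic_unit_hours / peak_provisioned_unit_hours
```

Under these assumptions, peak provisioning consumes 240 unit-hours per day against 96 for elastic provisioning, a saving of 60 percent. Real savings depend on the actual load profile and pricing.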

Azure Stream Analytics also offers fast recovery by checkpointing and at-least-once event delivery.
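The relationship between checkpointing and at-least-once delivery can be illustrated with a small simulation. This is a sketch under assumptions, not a description of how the service is implemented: the function name, the checkpoint interval, and the simulated crash are all hypothetical.

```python
from typing import List, Optional


def run_with_checkpoint(events: List[str], start: int, checkpoint_every: int,
                        crash_at: Optional[int], delivered: List[str]) -> int:
    """Deliver events from `start`; return the offset to resume from."""
    checkpoint = start
    for offset in range(start, len(events)):
        if crash_at is not None and offset == crash_at:
            # Simulated failure: progress made since the last checkpoint is lost.
            return checkpoint
        delivered.append(events[offset])
        if (offset + 1) % checkpoint_every == 0:
            checkpoint = offset + 1
    return len(events)


events = ["e0", "e1", "e2", "e3", "e4", "e5"]
delivered: List[str] = []

# First run crashes before processing offset 3; the last checkpoint was at offset 2.
resume_from = run_with_checkpoint(events, 0, checkpoint_every=2, crash_at=3, delivered=delivered)
# Recovery replays from the checkpoint, so "e2" is delivered a second time.
final_offset = run_with_checkpoint(events, resume_from, checkpoint_every=2, crash_at=None, delivered=delivered)

# A downstream consumer can remove duplicates, for example by event ID.
deduplicated = list(dict.fromkeys(delivered))
```

No event is lost after the restart, but one is delivered twice, which is why consumers of an at-least-once stream are usually written to tolerate duplicates.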

Mission-critical and enterprise-level scalability and availability

Azure Stream Analytics is available across multiple worldwide data centers and sovereign clouds. It promises three-nines (99.9%) availability that is financially guaranteed, with built-in automatic recovery so that data is not lost. Customers do not need to write a single line of code to achieve this. The bottom line is that enterprise readiness is built into the platform. Here is a summary of the enterprise-ready features:

- Distributed scale-out architecture

- Ingests millions of events per second

- Accommodates variable loads

- Easily adds incremental resources to scale

- Available across multiple data centers and sovereign clouds
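To put the 99.9% availability figure in perspective, the short calculation below converts it into permitted downtime. The 30-day month is an assumption made for the arithmetic; how availability is actually measured is defined by the service agreement, not by this sketch.

```python
AVAILABILITY = 0.999          # "three nines"
MINUTES_PER_MONTH = 30 * 24 * 60  # assuming a 30-day month

# Downtime budget is the fraction of time not covered by the availability target.
allowed_downtime_minutes = MINUTES_PER_MONTH * (1 - AVAILABILITY)
```

Under these assumptions, the downtime budget comes to roughly 43 minutes per month.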

Global compliance

In addition, Azure Stream Analytics is compliant with many industry and government certifications. It is HIPAA-compliant out of the box and suitable for hosting healthcare applications, so customers can scale up their businesses confidently. Here is a summary of global compliance:

- ISO 27001

- ISO 27018

- SOC 1 Type 2

- SOC 2 Type 2

- SOC 3 Type 2

- HIPAA/HITECH

- PCI DSS Level 1

- European Union Model Clauses

- China GB 18030

Thus, we reviewed Azure Stream Analytics and its key advantages. These advantages included:

- Ease in terms of developer productivity

- Ease of development and a reduced total cost of ownership

- Global compliance certifications

- The value of a PaaS-based streaming solution for hosting mission-critical applications, and its security

This post is taken from the book, Stream Analytics with Microsoft Azure, written by Anindita Basak, Krishna Venkataraman, Ryan Murphy, and Manpreet Singh. This book will help you to understand Azure Stream Analytics so that you can develop efficient analytics solutions that can work with any type of data.
