A UX practitioner’s primary goal is to provide every user with the best possible experience of a product. The only way to do this is to connect repeatedly with users and make sure that the product is being designed according to their needs. At the beginning of a project, this research tends to be more exploratory. Towards the end of the project, it tends to be more about testing the product.

In this article, we explore in detail one of the most common methods of testing a product with users: the usability test. We will describe the steps to plan and conduct usability tests. These steps also show, in practical terms, how to plan, conduct, and analyze any user research.

This article is an excerpt from the book UX for the Web by Marli Ritter and Cara Winterbottom. The book teaches you how UX and design thinking can make your site stand out from the rest of the sites on the internet.

Tips to maximize the value of user testing

Testing with users is not only about making their experience better; it is also about getting more people to use your product. People will not use a product that they do not find useful, and they will choose the product that is most enjoyable and usable if they have options. This is especially the case with the web. People leave websites if they can’t find or do the things they want. Unlike with other products, they will not take time to work it out. Research by organizations such as the Nielsen Norman Group generally shows that a website has between 5 and 10 seconds to show value to a visitor.

User testing is one of the main methods available to us to ensure that we make websites that are useful, enjoyable, and usable. However, to be effective it must be done properly. Jared Spool, a usability expert, identified seven typical mistakes that people make while testing with users, which lessen its value. The following list addresses how not to make those mistakes:

- Know why you’re testing: What are the goals of your test? Make sure that you specify the test goals clearly and concretely so that you choose the right method. Are you observing people’s behavior (usability test), finding out whether they like your design (focus group or sentiment analysis), or finding out how many people do something on your website (web analytics)? Posing specific questions will help to formulate the goals clearly. For example, will the new content reduce calls to the service center? Or what percentage of users return to the website within a week?

- Design the right tasks: If your testing involves tasks, design scenarios that correspond to tasks users would actually perform. Consider what would motivate someone to spend time on your website, and use this to create tasks. Provide participants with the information they would have to complete the tasks in a real-life situation; no more and no less. For example, do not specify tasks using terms from your website interface; then participants will simply be following instructions when they complete the tasks, rather than using their own mental models to work out what to do.

- Recruit the right users: If you design and conduct a test perfectly, but test on people who are not like your users, then the results will not be valid. If they know too much or too little about the product, subject area, or technology, then they will not behave like your users would and will not experience the same problems. When recruiting participants, ask what qualities define your users, and what qualities make one person experience the website differently to another. Then recruit on these qualities. In addition, recruit the right number of users for your method. Ongoing research by the Nielsen Norman Group and others indicates that usability tests typically require about five people per test, while A/B tests require about 40 people, and card sorting requires about 15 people. These numbers have been calculated to maximize the return on investment of testing. For example, five users in a usability test have been shown by the Nielsen Norman Group (and confirmed repeatedly by other researchers) to find about 85% of the serious problems in an interface. Adding more users improves the percentage marginally, but increases the costs significantly. If you use the wrong numbers, your results will not be valid, or the amount of data you need to analyze will be unmanageable for the time and resources you have available.

- Get the team and stakeholders involved: If user testing is seen as an outside activity, most of the team will not pay attention as it is not part of their job and easy to ignore. When team members are involved, they gain insights into their own work and its effectiveness. Try to get team members to attend some of the testing if possible. Otherwise, make sure everyone is involved in preparing the goals and tasks (if appropriate) for the test. Share the results in a workshop afterward, so everyone can be involved in reflecting on the results and their implications.

- Facilitate the test well: Facilitating a test well is a difficult task. A good facilitator makes users feel comfortable so they act more naturally. At the same time, the facilitator must control the flow of the test so that everything is accomplished in the available time, and not give participants hints about what to do or say. Make sure that facilitators have a lot of practice and constructive feedback from the team to improve their skills.

- Plan how to share the results: It takes time and skill to create an effective user testing report that communicates the test and results well. Even if you have the time and skill, most team members will probably not read the report. Find other ways to share results with those who need them. For example, create a bug list for developers using project management software or a shared online document, or hold a workshop with the team and stakeholders and present the test and results to them. Have review sessions immediately after test days.

- Iterate: User testing is most effective when performed regularly and iteratively. Repeated tests let you test different aspects or parts of the design; test solutions based on previous tests; find new problems or ideas introduced by the new solutions; track changes to results based on time, seasonality, or the maturity of the product or user base; and uncover problems that were previously hidden by larger problems. Many organizations only make provision to test with users once at the end of design, if at all. It is better to split your budget into multiple tests if possible.

As we explore usability testing, each of these guidelines will be addressed more concretely.

Planning and conducting usability tests

Before starting, let’s look at what we mean by a usability test, and describe the different types.

Team members who watch participants struggle to use their product are often shocked that they had not noticed the glaringly obvious design problems that are revealed. In later iterations, usability tests should reveal fewer or more minor problems, which provides proof of the success of a design before launch. Apart from glaring problems, how do we know what makes a design successful? The definition of usability by the International Organization for Standardization (ISO) is: “Extent to which a product can be used by specified users to achieve specified goals with effectiveness, efficiency and satisfaction in a specified context of use.” This definition shows us the kind of things that make a successful design.

From this definition, usability comprises:

- Effectiveness: How completely and accurately the required tasks can be accomplished.

- Efficiency: How quickly tasks can be performed.

- Satisfaction: How pleasant and enjoyable the task is. This can become a delight if a design pleases us in unexpected ways.

There are three additional points that arise from the preceding points:

- Discoverability: How easy it is to find out how to use the product the first time.

- Learnability: How easy it is to continue to improve using the product, and remember how to use it.

- Error proneness: How well the product prevents errors and helps users recover. This equates to the number and severity of errors that users experience while doing tasks.

These six points provide us with guidance on the kinds of tasks we should be designing and the kind of observations we should be making when planning a usability test.

There are three ways of gathering data in a usability test: using metrics to guide quantitative measurement, observing, and asking questions. The most important is observation. Metrics allow comparison, guide observation, and help us design tasks, but they are not as important as why things happen. We discover why by observing interactions and emotional responses during task performance.

In addition, we must be very careful when assigning meaning to quantitative metrics because of the small numbers of users involved in usability tests. Typically, usability tests are conducted with about five participants. This number has been repeatedly shown to be most effective when considering testing costs against the number of problems uncovered. However, it is too small for statistical significance testing, so any numbers must be reported carefully.
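The diminishing return behind the five-participant guideline can be sketched with the problem-discovery model published by Nielsen and Landauer, in which the share of problems seen by n participants is 1 - (1 - L)^n. The value L = 0.31 used here (the chance that a single participant exposes a given problem) is the average Nielsen reports, not a figure from this article, and it varies between products, so treat the output as a rough illustration only.

```python
def share_of_problems_found(participants: int, discovery_rate: float = 0.31) -> float:
    """Expected share of usability problems seen at least once.

    discovery_rate is an ASSUMED average chance that one participant
    exposes any given problem; it differs between products and studies.
    """
    return 1 - (1 - discovery_rate) ** participants


if __name__ == "__main__":
    # Compare a few sample sizes to see how quickly the curve flattens.
    for n in (1, 3, 5, 10, 15):
        print(f"{n:>2} participants: {share_of_problems_found(n):.0%}")
```

With the assumed rate, five participants come out at roughly 84%, in line with the figure of about 85% quoted earlier, while doubling to ten participants adds only about 13 percentage points.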

If we consider observation against asking questions, usability tests are about doing things, not discussing them. We may ask users to talk aloud while doing tasks to help us understand what they are thinking, but we need the context of what they are doing.

This means that usability tests trump questionnaires and surveys, because people are notoriously bad at remembering what they did or imagining what they will do. It does not mean that we never listen to what users say, as there is a lot of value to be gained from a well-formed question asked at the right time. We must just be careful about how we interpret the answer. We need to interpret what people say within the context of how they say it, what they are doing when they say it, and what their biases might be. For example, users tend to tell researchers what they think we want to hear, so any value judgment will likely be more positive than it should be. This is often called social desirability bias, and it is closely related to experimenter bias, where the researcher’s own cues influence the answers.

Despite the preceding cautions, all three methods are useful and increase the value of a test. While observation is core, the most effective usability tests include tasks carefully designed around metrics, and begin and end with a contextual interview with the user. The interviews help us to understand the user’s previous and current experiences, and the context in which they might use the website in their own lives.

Planning a usability test can seem like a daunting task. There are so many details to work out and organize, and they all need to come together on the day(s) of the test. The following diagram is a flowchart of the usability test process. Each of the boxes represents a different area that must be considered or organized:

However, by using these areas to break the task down into logical steps and keeping a checklist, the task becomes manageable.

Planning usability tests

In designing and planning a usability test, you need to consider five broad questions:

- What: What are the objectives, scope, and focus of the test? What fidelity are you testing?

- How: How will you realize the objectives? Do you need permissions and sign off? What metrics, tasks, and questions are needed? What are the hardware and software requirements? Do you need a prototype? What other materials will you need? How will you conduct the test?

- Who: How many participants and who will they be? How will you recruit them? What are the roles needed? Will team members, clients, and stakeholders attend? Will there be a facilitator and/or a notetaker?

- Where: What venue will you use? Is the test conducted in an internal or external lab, on the streets/in coffee shops, or in users’ homes/work?

- When: What is the date of the test? What will the schedule be? What is the timing of each part?

Documenting these questions and their answers forms your test plan. The following figure illustrates the thinking around each of these broad questions:

Designing the test – formulating goals and structure

The first thing to consider when planning a usability test is its goal. This will dictate the scope and focus of the test, as well as its tasks and questions. For example, if your goal is a general usability test of the whole website, the tasks will be based on the business reasons for the site. These are the most important user interactions. You will ask questions about general impressions of the site. However, if your goal is to test the search and filtering options, your tasks will involve finding things on the website. You will ask questions about the difficulty of finding things. If you are not sure what the specific goal of the usability test might be, think about the following three points:

- Scope: Do you want to test part of the design, or the whole website?

- Focus: Which area of the website will you focus on? Even if you want to test the whole website, there will be areas that are more important. For example, checkout versus contact page.

- Behavioral questions: Are there questions about how users behave, or how different designs might impact user behavior, that are being asked within the organization?

Thinking about these questions will help you refine your test goals.

Once you have the goals, you can design the structure of the test and create a high-level test plan. When deciding on how many tests to conduct in a day and how long each test should be, remember moderator and user fatigue. A test environment is a stressful situation. Even if you are testing with users in their own home, you are asking them to perform unfamiliar tasks with an unfamiliar system. If users become too tired, this will affect test results negatively. Likewise, facilitating a test is tiring as the moderator must observe and question the user carefully, while monitoring things like the time, their own language, and the script.

Here are details to consider when creating a schedule for test sessions:

- Test length: Typically, each test should be between 60 and 90 minutes long.

- Number of tests: You should not be facilitating more than 5-6 tests in one day. When counting the hours, leave at least half an hour cushioning space between each test. This gives you time to save the recording, make extra notes if necessary, communicate with any observers, and it provides flexibility if participants arrive later or tests run longer than they should.

- Number of tasks: This is roughly the number of tasks you hope to include in the test. In a 60-minute test, you will probably have about 40-45 minutes for tasks. The rest of the time will be taken with welcoming the participant, the initial interview, and closing questions at the end. In 45 minutes, you can fit about 5-8 tasks, depending on the nature of the tasks. It is important to remember that less is more in a test. You want to give participants time to explore the website and think about their options. You do not want to be rushing them on to the next task.
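The scheduling guidelines above are simple arithmetic, so it can help to lay out a test day before booking participants. The following is a minimal sketch; the function names, the 09:00 start, and the absence of a lunch break are assumptions made for illustration.

```python
from datetime import datetime, timedelta


def plan_sessions(first_start: str, sessions: int,
                  session_minutes: int = 60, buffer_minutes: int = 30):
    """Return (start, end) time strings for each session in one test day."""
    start = datetime.strptime(first_start, "%H:%M")
    slots = []
    for _ in range(sessions):
        end = start + timedelta(minutes=session_minutes)
        slots.append((start.strftime("%H:%M"), end.strftime("%H:%M")))
        # Leave a cushion before the next participant arrives.
        start = end + timedelta(minutes=buffer_minutes)
    return slots


def minutes_per_task(task_minutes: int, tasks: int) -> float:
    """Average time available per task within the task portion of a session."""
    return task_minutes / tasks


if __name__ == "__main__":
    for number, (start, end) in enumerate(plan_sessions("09:00", 5), start=1):
        print(f"Session {number}: {start}-{end}")
    print(f"Minutes per task: {minutes_per_task(45, 8)}")
```

Five 60-minute sessions with half-hour cushions fill the day from 09:00 to 16:00. Six 90-minute sessions would run until 20:30, which shows why longer tests usually mean fewer sessions per day. Fitting eight tasks into 45 minutes leaves under six minutes each, so fewer tasks give participants more room to explore.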

The last thing to consider is moderating technique. This is how you interact with the participant and ask for their input. There are two aspects: thinking aloud and probing. Thinking aloud is asking participants to talk about what they are thinking and doing so you can understand what is in their heads. Probing is asking participants ad-hoc questions about interesting things that they do. You can do both concurrently or retrospectively:

- Concurrent thinking aloud and probing: Here, the participant talks while they do tasks and look at the interface. The facilitator asks questions as they come up, while the participant is doing tasks. Concurrent probing interferes with metrics such as time on task and accuracy, as you might distract users. However, it also takes less test time and can deliver more accurate insights, as participants do not have to remember their thoughts and feelings; these are shared as they happen.

- Retrospective thinking aloud and probing: This involves retracing the test or task after it is finished and asking participants to describe what they were thinking in retrospect. The facilitator may note down questions during tasks, and ask these later. While retrospective techniques simulate natural interaction more closely, they take longer because tasks are retraced. This means that the test must be longer or there will be fewer tasks and interview questions. Retrospective techniques also require participants to remember what they were thinking previously, which can be faulty.

Concurrent moderating techniques are preferable because of the close alignment between users acting and talking about those actions. Retrospective techniques should only be used if timing metrics are very important. Even in these cases, concurrent thinking aloud can be used with retrospective probing. Thinking aloud concurrently generally interferes very little with task times and accuracy, as users are ideally just verbalizing ideas already in their heads.

At each stage of test planning, share the ideas with the team and stakeholders and ask for feedback. You may need permission to go forward with test objectives and tasks. However, even if you do not need sign off, sharing details with the team gets everyone involved in the testing. This is a good way to share and promote design values. It also benefits the test, as team members will probably have good ideas about tasks to include or elements of the website to test that you have not considered.

Designing tasks and metrics

As we have stated previously, usability testing is about watching users interacting with a product. Tasks direct the interactions that you want to see. Therefore, they should cover the focus area of the test, or all important interactions if the whole website is tested.

To make the test more natural, if possible create scenarios or user stories that link the tasks together so participants are performing a logical sequence of activities. If you have scenarios or task analyses from previous research, choose those that relate to your test goals and focus, and use them to guide your task design. If not, create brief scenarios that cover your goals. You can do this from a top-down or bottom-up perspective:

- Top down: What events or conditions in their world would motivate people to use this design? For example, if the website is a used goods marketplace, a potential user might have an item they want to get rid of easily, while making some money; or they might need an item and try to get it cheaply secondhand. Then, what tasks accomplish these goals?

- Bottom up: What are the common tasks that people do on the website? For example, in the marketplace example, common tasks are searching for specific items; browsing through categories of items; adding an item to the site to sell, which might include uploading photographs or videos, adding contact details and item descriptions. Then, create scenarios around these tasks to tie them together.

Tasks can be exploratory and open-ended, or specific and directed. A test should have both. For example, you can begin with an open-ended task, such as examining the home page and exploring the links that are interesting. Then you can move onto more directed tasks, such as finding a particular color, size, and brand of shoe and adding it to the checkout cart. It is always good to begin with exploratory tasks, but these can be open-ended or directed.

For example, to gather first impressions of a website, you could ask users to explore as they prefer from the home page and give their impressions as they work; or you could ask users to look at each page for five seconds, and then write down everything they remember seeing. The second option is much more controlled, which may be necessary if you want more direct comparison between participants, or are testing with a prototype where only parts of the website are available.

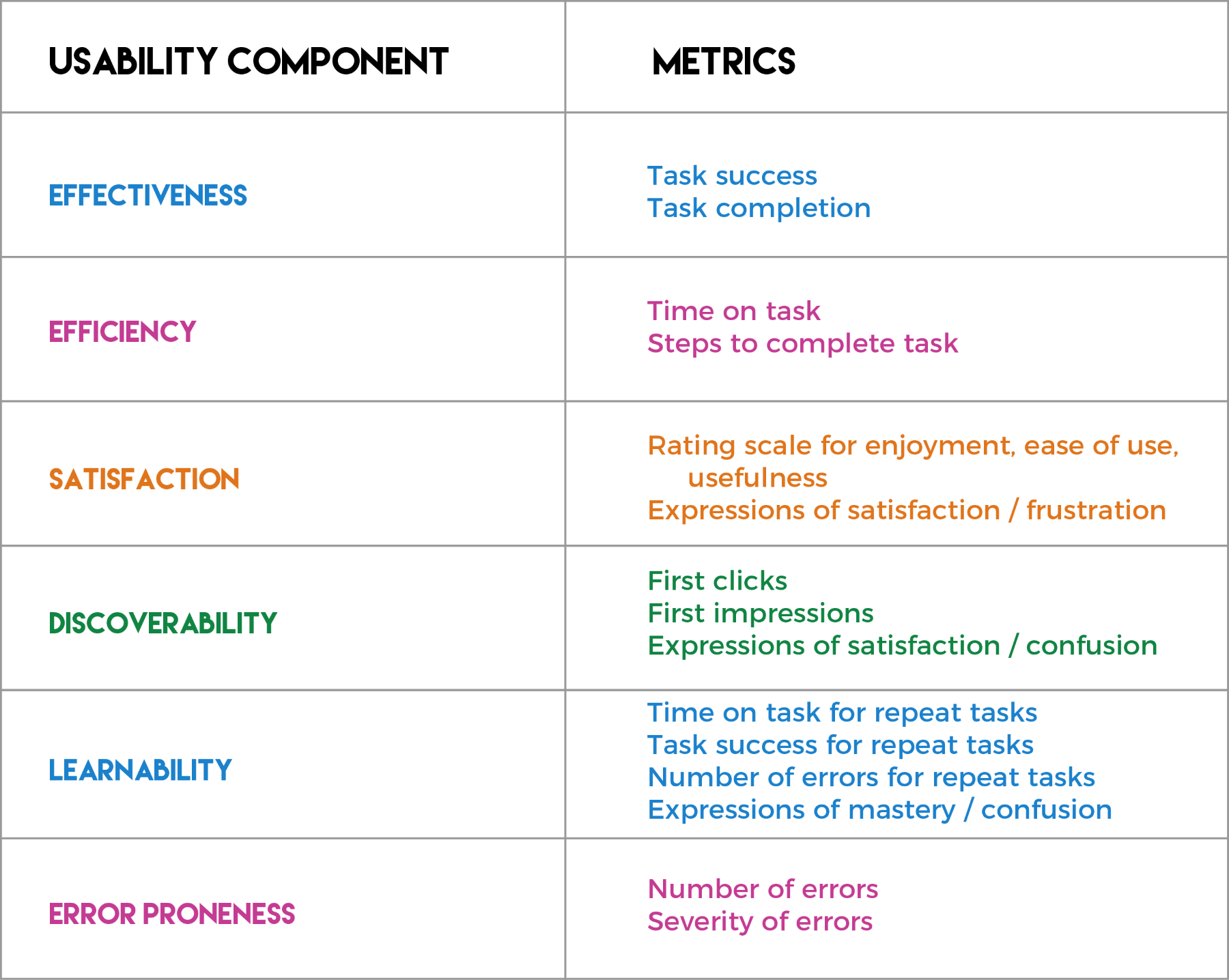

Metrics are needed for task, observation, and interview analysis, so that we can evaluate the success of the design we are testing. They guide how we examine the results of a usability test. They are based on the definition of usability, and so relate to effectiveness, efficiency, satisfaction, discoverability, learnability, and error proneness. Metrics can be qualitative or quantitative. Qualitative metrics aim to encode the data so that we can detect patterns and trends in it, and compare the success of participants, tasks, or tests. An example is noting expressions of delight or frustration during a task. Quantitative metrics collect numbers that we can manipulate and compare against each other or against benchmarks. An example is the number of errors each participant makes in a task. We must be careful how we use and assign meaning to quantitative metrics because of the small sample sizes.

Here are some typical metrics:

- Task success or completion rates: This measures effectiveness and should always be captured as a base. It relates most closely to conversion, which is the primary business goal for a website, whether it is converting browsers to buyers, or visitors to registered users. You may just note success or failure, but it is more revealing to capture the degree of task success. For example, you can specify whether the task is completed easily, with some struggle, with help, or is not completed successfully.

- Time on task: A measure of efficiency. How long it takes to complete tasks.

- Errors per task: A measure of error proneness. The number and severity of errors per task, especially noting critical errors where participants may not even realize they have made a mistake.

- Steps per task: A measure of efficiency. The number of steps or pages needed to complete each task, often compared against a known minimum.

- First click: A measure of discoverability. Noting the first click to accomplish each task, to report on findability of items on the web page. This can also be used in more exploratory tasks to judge what attracts the user’s attention first.
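To show how a few of these metrics might be tallied for a single task, here is a minimal sketch. The records, field names, and outcome labels are hypothetical; the four outcome levels follow the degrees of task success described above. Because only five participants are involved, the summary reports counts and a median rather than percentages or averages that would suggest more precision than the data supports.

```python
from collections import Counter
from statistics import median

# Hypothetical observations for one task; field names are illustrative only.
observations = [
    {"participant": "P1", "outcome": "easy", "seconds": 48, "errors": 0},
    {"participant": "P2", "outcome": "struggle", "seconds": 95, "errors": 2},
    {"participant": "P3", "outcome": "easy", "seconds": 52, "errors": 0},
    {"participant": "P4", "outcome": "helped", "seconds": 140, "errors": 3},
    {"participant": "P5", "outcome": "failed", "seconds": 180, "errors": 4},
]

# Outcomes that count as completing the task without the facilitator's help.
UNAIDED = {"easy", "struggle"}


def summarize(rows):
    """Tally task success, time on task, and errors for one task."""
    outcomes = Counter(row["outcome"] for row in rows)
    completed = sum(1 for row in rows if row["outcome"] in UNAIDED)
    return {
        "outcomes": dict(outcomes),
        "completed_unaided": f"{completed} of {len(rows)}",
        "median_seconds": median(row["seconds"] for row in rows),
        "total_errors": sum(row["errors"] for row in rows),
    }


if __name__ == "__main__":
    print(summarize(observations))
```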

When you have designed tasks, consider them against the definition of usability to make sure that you have covered everything that you need or want to cover. The preceding diagram shows the metrics typically associated with each component of the usability definition.

A valid criticism of usability testing is that it only tests first-time use of a product, as participants do not have time to become familiar with the system. There are ways around this problem. For example, certain types of task, such as search and browsing, can be repeated with different items. In later tasks, participants will be more familiar with the controls. The facilitator can use observation or metrics such as task time and accuracy to judge the effect of familiarity. A more complicated method is to conduct longitudinal tests, where participants are asked to return a few days or a week later and perform similar tasks. This is only reasonable to spend time and money on if learnability is an important metric.

Planning questions and observation

The interview questions that are asked at the beginning and end of a test provide valuable context for user actions and reactions, such as the user’s background, their experiences with similar websites or the subject area, and their relationship to technology. They also help the facilitator to establish rapport with the user.

Other questions provide valuable qualitative information about the user’s emotional reaction to the website and the tasks they are doing. A combination of observation and questions provides data on aspects such as ease of use, usefulness, satisfaction, delight, and frustration.

For the initial interview, questions should be about:

- Welcome: These set the participant at ease, and can include questions about the participant’s lifestyle, job, and family. These details help UX practitioners to present test participants as real people with normal lives when reporting on the test.

- Domain: These ask about the participant’s experience with the domain of the website. For example, if the website is in the domain of financial services, questions might be around the participant’s banking, investments, loans, and their experiences with other financial websites. As part of this, you might investigate their feelings about security and privacy.

- Tech: These questions ask about the participant’s usage and experience with technology. For example, for testing a website on a computer, you might want to know how often the participant uses the internet or social media each day, what kinds of things they do on the internet, and whether they buy things online. If you are testing mobile usage, you might want to inquire about how often the participant uses the internet on their phone each day, and what kind of sites they visit on mobile versus desktop.

Like tasks, questions can be open-ended or closed. An example of an open-ended question is: “Tell me about how you use your phone throughout a normal workday, beginning with waking up in the morning and ending with going to sleep at night.” The facilitator would then prompt the participant for further details suggested during the reply. A closed question might be: “What is your job?” These generate simple responses, but can be used as springboards into deeper answers. For example, if the answer is fireman, the facilitator might say, “That’s interesting. Tell me more about that. What do you do as a fireman?”

Questions asked at the end of the test or during the test are more about the specific experience of the website and the tasks. These are often made more quantifiable by using a rating scale to structure the answer. A typical example is a Likert scale, where participants specify their agreement or disagreement with a statement on a 5- to 7-point scale. For example, a statement might be: “I can find what I want easily using this website.” On a 7-point scale, 1 is labeled Strongly Agree and 7 is labeled Strongly Disagree. Participants choose the number that corresponds to the strength of their agreement or disagreement. You can then compare responses between participants or across different tests.

Examples of typical questions include:

- Ease of use (after every task): On a scale of 1-7, where 1 is really hard and 7 is really easy, how difficult or easy did you find this task?

- Ease of use (at the end): On a scale of 1-7, how easy or difficult did you find working on this website?

- Usefulness: On a scale of 1-7, how useful do you think this website would be for doing your job?

- Recommendation: On a scale of 1-7, how likely are you to recommend this website to a friend?
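Ratings like these can be compared between tasks, but with about five participants per test it is safer to summarize them with a median and range than with an average. The following is a minimal sketch; the task names and scores are invented for illustration and use the 1 (really hard) to 7 (really easy) scale from the first question.

```python
from statistics import median

# Hypothetical post-task ease-of-use ratings (1 = really hard, 7 = really easy).
ratings = {
    "Find a product": [6, 7, 5, 6, 7],
    "Check out": [3, 4, 2, 5, 3],
}


def describe(scores):
    """Summarize a small set of ordinal ratings without implying precision."""
    return {"median": median(scores), "lowest": min(scores), "highest": max(scores)}


if __name__ == "__main__":
    for task, scores in ratings.items():
        print(task, describe(scores))
```

A clear gap between two tasks, as in this invented data, is a prompt to go back to the observation notes and find out why, rather than a result to report on its own.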

The final questions you ask provide closure for the test and end it gracefully. These can be more general and conversational. They might deliver useful data, but that is not the priority. For example, “What did you think of the website?” or “Is there anything else you’d like to say about the website?”

Questions during the test often arise ad hoc because you do not understand why the participant does an action, or what they are thinking about if they stare at a page of the website for a while. You might also want to ask participants what they expect to find before they select a menu item or look at a page.

In preparing for observation, it is helpful to make a list of the kinds of things you especially want to observe during the test. Typical options are:

- Reactions to each new page of the website

- First reactions when they open the Home page

- The variety of steps used to complete each task

- Expressions of delight or frustration

- Reactions to specific elements of the website

- First clicks for each task

- First click off the Home page

Much of what you want to observe will be guided by the usability test objectives and the nature of the website.

Preparing the script

Once you have designed all the elements of the usability test, you can put them together in a script. This is a core document in usability testing, as it acts as the facilitator’s guide during each test. There are different ideas about what to include in a script. Here, we describe a comprehensive script that describes the whole test procedure. This includes, in rough order:

- The information that must be told to the participant in the welcome speech. The welcome speech is very important, as it is the participant’s first experience of the facilitator. It is where the rapport will first be established. The following information may need to be included:

  - Introduction to the facilitator, client, and product.

  - What will happen during the test, including the length.

  - The idea that the website is being tested, not the participant, and that any problems are the fault of the product. This means the participant is valuable and helpful to the team developing a great website.

  - Asking the participant to think aloud as much as possible, and to be honest and blunt about what they think. Asking them to imagine that they are at home in a natural situation, exploring the website.

  - If there are observers, indication that people may be watching and that they should be ignored.

  - Asking permission to record the session, and telling the participant why. Assuring them of their privacy and the limited usage of the recordings to analysis and internal reporting.

- A list of any documents that the participant must look at or sign first, for example, an NDA.

- Instructions on when to switch on and off any recording devices.

- The questions to ask in thematic sections, for example, welcome, domain, and technology. These can include potential follow-on questions, to delve for more information if necessary.

- A task section that has several parts:

  - An introduction to the prototype if necessary. If you are testing with a prototype, there will probably be unfinished areas that are not clickable. It is worth alerting participants so they know what to expect while doing tasks and talking aloud.

  - Instructions on how to use the technology if necessary. Ideally your participants should be familiar with the technology, but if this is not the case, you want to be testing the website, not the technology. This applies, for example, if you are testing with a particular screen reader that the participant has not used before, or if you are using eye-tracking technology.

  - An introduction to the tasks, including any scenarios provided to the participant.

  - The description of each task. Be careful not to use words from the website interface when describing tasks, so you do not guide the participant too much. For example, instead of “How would you add this item to your cart?”, say “How would you buy this item?”

  - Questions to include after each task. For example, the ease of use question.

  - Questions to prompt the participant if they are not thinking aloud when they should, especially for each new page of the website or prototype. For example: “What do you see here? What can you do here? What do you think these options mean?”

- Final questions to finish off the test, and give the participant a chance to emphasize any of their experiences.

- A list of any documents the participant must sign at the end, and instructions to give the incentive if appropriate.

Once the script is created, timing is added to each task and section, to help the facilitator make sure that the tests do not run over time. This will be refined as the usability test is practiced.

The script provides a structure to take notes in during the test, either on paper or digitally:

- Create a spreadsheet with rows for each question and task

- Use the first column for the script, from the welcome questions onwards

- Capture notes in subsequent columns for the user

- Use a separate spreadsheet for each participant during the test

- After all the tests, combine the results into one spreadsheet so you can easily analyze and compare
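If the notes are kept digitally, the steps above can be sketched in a few lines of code. This is only an illustration: the script items are invented, the CSV format is an assumption (any spreadsheet tool would serve equally well), and a real script would list every question and task.

```python
import csv
import io

# Hypothetical script items; a real script would list every question and task.
SCRIPT = ["Welcome: tell me about your job", "Task 1: find a show", "Ease of use (1-7)"]


def blank_sheet(participant):
    """One sheet per participant: script in column 1, notes in column 2."""
    return [["Script", participant]] + [[item, ""] for item in SCRIPT]


def combine(sheets):
    """Merge per-participant sheets into one table, one notes column each."""
    header = ["Script"] + [sheet[0][1] for sheet in sheets]
    body = [
        [item] + [sheet[row][1] for sheet in sheets]
        for row, item in enumerate(SCRIPT, start=1)
    ]
    return [header] + body


def to_csv(table):
    """Render a table as CSV text that a spreadsheet program can open."""
    buffer = io.StringIO()
    csv.writer(buffer).writerows(table)
    return buffer.getvalue()


if __name__ == "__main__":
    first, second = blank_sheet("P1"), blank_sheet("P2")
    first[2][1] = "Used search first"  # note taken against Task 1
    print(to_csv(combine([first, second])))
```

Keeping the script in the first column of every sheet is what makes the later merge trivial, because each row lines up across participants.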

The following is a diagram showing sections of the script for notetaking, with sample questions and tasks, for a radio station website:

Securing a venue and inviting clients and team members

If you are testing at an external venue, this is one of the first things you will need to organize for a usability test, as these venues typically need to be booked about one to two months in advance. Even if you are testing in your own offices, you will still need to book space for the testing.

When considering a test venue, you should be looking for the following:

- A quiet, dedicated space where the facilitator, participant, and potentially a notetaker, can sit. This needs surfaces for all the equipment that will be used during the test, and comfortable space for the participant. Consider the lighting in the test room. Lighting might cause glare if you are testing on mobile phones, so think about how best to handle it. For example, consider where the best place is for the participant to sit, and whether you can use indirect lighting of some kind.

- A reception room where participants can wait for their testing session. This should be comfortable. You may want to provide refreshments for participants here.

- Ideally, an observation room for people to watch the usability tests. Observers should never be in the same space as the testing, as this will distract participants, and probably make them uncomfortable. The observation room should be linked to the test room, either with cables or wirelessly, so observers can see what is happening on the participant’s screen, and hear (and ideally see) the participant during the test. Some observation rooms have two-way mirrors into the test room, so observers can watch the facilitator and participant directly. Refreshments should be available for the observers.

We have discussed various testing roles previously. Here, we describe them formally:

- Facilitator: This is the person who conducts the test with the participant. They sit with the participant, instruct them in the tasks and ask questions, and take notes. This is the most important role during the test. We will discuss it further in the Conducting usability tests section.

- Participant: This is the person who is doing the test. We will discuss recruiting test participants in the next section.

- Notetaker: This is an optional role. It can be worth having a separate notetaker, so the facilitator does not have to take notes during the test. This is especially the case if the facilitator is inexperienced. If there is a notetaker, they sit quietly in the test room and do not engage with the participant, except when introduced by the facilitator.

- Receptionist: Someone must act as receptionist for the participants who arrive. This cannot be the facilitator, as they will be in the sessions. Ask a team member or the office receptionist to take this role.

- Observers: Everyone else is an observer. These can be other team members and/or clients. Observers should be given guidelines for their behavior. For example, they should not interact with test participants or interrupt the test. They watch from a separate room, and should not be so noisy that they can be heard in the test room (often these rooms are close to each other). The facilitator should discuss the tests with observers between sessions, to check if they have any questions they would like added to the test, and to discuss observations. It is worth organizing a debriefing for immediately after the tests, or the next day if possible, for the observers and facilitator to discuss the tests and observations.

It is important that as many stakeholders as possible are persuaded to watch at least some of the usability testing. Watching people using your designs is always enlightening, and really helps to bring a team together. Remember to invite clients and team members early, and send reminders closer to the day.

Recruiting participants

When recruiting participants for usability tests, make sure that they are as close as possible to your target audience. If your website is live and you have a pool of existing users, then your job is much easier. However, if you do not have a user pool, or you want to test with people who have not used your site, then you need to create a specification for appropriate users that you can give to a recruiter or use yourself.

To specify your target audience, consider what kinds of people use your website, and what attributes will cause them to behave differently to other users. If you have created personas during previous research, use these to help identify target user characteristics. If you are designing a website for a client, work with them to identify their target users. It is important to be specific, as it is difficult to look for people who fulfill abstract qualities.

For example, instead of asking for tech savvy people, consider what kinds of technology such people are more likely to use, and what their activities are likely to be. Then ask for people who use the technology in the ways you have identified. Consider the behaviors that result from certain types of beliefs, attitudes, and lifestyle choices. The following are examples of general areas you should consider:

- Experience with technology: You may want users who are comfortable with technology or who have used specific technology, for example, the latest smartphones, or screen readers. Consider the properties that will identify these people. For example, you can specify that all participants must own a specific type or generation of mobile device, and must have owned it for at least two months.

- Online experience: You may want users with a certain level and frequency of internet usage. To elicit this, you can specify that you want people who have bought items online within the last few months, or who do online banking, or have never done these things.

- Social media presence: Often, you want people who have a certain amount of social media interaction, potentially on specific platforms. In this case you would specify that they must regularly post to or read social media such as Facebook, Twitter, Instagram, Snapchat, or more hobbyist versions such as Pinterest and/or Flickr.

- Experience with the domain: Participants should not know too much or too little about the domain. For example, if you are testing banking software, you may want to exclude bank employees, as they are familiar with how things work internally.

- Demographics: Unless your target audience is very skewed, you probably want to recruit a variety of people demographically. For example, a range of ages, genders, ethnicities, and economic and education levels.

There may be other characteristics you need in your usability test participants. The previous characteristics should give you an idea of how to specify such people. For example, you may want hobbyist photographers. In this case, you would recruit people who regularly take photographs and share them with friends in some way. Do not use people who you have previously used in testing, unless you specifically need people like this, as they will be familiar with your tests and procedures, which will interfere with results.
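Concrete criteria like these translate directly into screening questions. As a rough sketch, the screening logic might be expressed as follows. The `Candidate` fields, the thresholds, and the sample people are all hypothetical. A real screener would use whatever attributes matter for your own target audience.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    months_owning_device: int     # how long they have owned the required device
    bought_online_recently: bool  # bought something online in the last few months
    works_in_domain: bool         # for example, a bank employee for banking software
    tested_before: bool           # has taken part in your previous tests


def passes_screener(candidate, min_months=2):
    """Return True if the candidate meets every (assumed) screening criterion."""
    return (
        candidate.months_owning_device >= min_months
        and candidate.bought_online_recently
        and not candidate.works_in_domain
        and not candidate.tested_before
    )


# Hypothetical candidate pool.
pool = [
    Candidate("A", 6, True, False, False),
    Candidate("B", 1, True, False, False),   # has not owned the device long enough
    Candidate("C", 12, True, True, False),   # knows the domain too well
]
shortlist = [c.name for c in pool if passes_screener(c)]
print(shortlist)
```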

Recruiting takes time and is difficult to do well. There are various ways of recruiting people for user testing, depending on your business. You may be able to use people or organizations associated with your business or target audience members to recruit people using the screening questions and incentives that you give them. You can set up social media lists of people who follow your business and are willing to participate. You can also use professional recruiters, who will get you exactly the kinds of people you need, but will charge you for it.

For most tests, an incentive is usually given to thank participants for their time. This is often money, but it can also be a gift, such as stationery or gift certificates.

A recruitment brief is the document that you give to recruiters. The following are the details you need to include:

- Day of the test, the test length, and the ideal schedule. This should state the times at which the first and last participants may be scheduled, how long each test will take, and the buffer period that should be between each session.

- The venue. This should include an address, maps, parking, and travel information.

- Contact details for the team members who will oversee the testing and recruitment.

- A description of the test that can be given to participants.

- The incentives that will be provided.

- The list of qualities you need in participants, or screening questions to check for these.

This document can be modified to share with less formal recruitment associates. The benefit of recruiters is that they handle the whole recruitment process. If you and your team recruit participants yourselves, you will need to remind them a week before the test, and the day before the test, usually by messaging or emailing them. On the day of the test, phone participants to confirm that they will be arriving, and that they know how to get to the venue. Participants still often do not attend tests, even with all the reminders. This is the nature of testing with real people. Ideally you will be given some notice when someone cannot attend, but this is not guaranteed, so try to recruit an extra couple of possible participants who you can call in at short notice on the day.
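The schedule for the recruitment brief (first and last start times, test length, and buffer) can be worked out by hand, or with a short script such as the sketch below. The date, the 60-minute test length, and the 30-minute buffer are assumed values chosen only for illustration.

```python
from datetime import datetime, timedelta


def session_slots(first_start, last_start, test_minutes, buffer_minutes):
    """List (start, end) times for sessions, leaving a buffer after each one."""
    step = timedelta(minutes=test_minutes + buffer_minutes)
    slots = []
    start = first_start
    while start <= last_start:
        slots.append((start, start + timedelta(minutes=test_minutes)))
        start += step
    return slots


day = datetime(2024, 1, 15)  # hypothetical test day
slots = session_slots(
    first_start=day.replace(hour=9),
    last_start=day.replace(hour=15),
    test_minutes=60,
    buffer_minutes=30,
)
for start, end in slots:
    print(f"{start:%H:%M} to {end:%H:%M}")
```

Listing the slots this way also shows how many participants, plus backups, you need to recruit for the day.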

Setting up the hardware, software, and test materials

Depending on the usability test, you will have to prepare different hardware, software, and test materials. These include screen recording software and hardware, notetaking hardware, the prototype to test, screen sharing options, and so on.

The first thing to consider is the prototype, as this will have implications for hardware and software. Are you testing a live website, an electronic prototype, or a paper prototype?

- Live website: Set up any accounts or passwords that may be necessary. Make sure you have reliable access to the internet, or a way to cache the website on your machine if necessary.

- Electronic prototype: Make sure the prototype works the way it is supposed to, and that all the parts that are accessed during the tasks can be interacted with, if required. Try not to make it too obvious which parts work and which parts do not work, as this may guide participants to the correct actions during the test. Be prepared to talk participants through parts of the prototype that do not work, so they have context for the tasks. Have a safe copy of the prototype in case this copy becomes corrupted in some way.

- Paper prototype: Make sure that you have sketches or printouts of all the screens that you need to complete the tasks. With paper prototype testing, the facilitator takes the role of the computer, shows the results of the actions that the participant proposes, and talks participants through the screens. Make sure that you are prepared for this and know the order of the screens. Have multiple copies of the paper prototype in case parts get lost or destroyed.

For any of the three, make sure the team goes through the test tasks to make sure that everything is working the way it should be.

For hardware and other software, keep an equipment list, so you can check it to make sure you have all the necessary hardware with you. You may need to include:

- Hardware for participant to interact with the prototype or live site: This may be a desktop, laptop, or mobile device. If testing on a mobile device, you can ask participants to use their own familiar phones instead of an unfamiliar test mobile device. However, participants may have privacy issues with using their own phones, and you will not be able to test the prototype or live site on the phone beforehand. If you provide a laptop, include a separate mouse, as people often have difficulty with unfamiliar trackpads.

- Recording the screen and audio: This is usually screen capture software. There are many options for screen capturing software, such as Lookback, an inexpensive option for iOS and Android, and CamStudio, a free option for the PC. Specialist software that handles multiple camera inputs allows you to record face and screen at the same time. Examples are iSpy, free CCTV software, Silverback, an inexpensive option for the Mac, and Morae, an expensive but impressive option for the PC.

- Mobile recording alternative: You can also record mobile video with an external camera that captures the participant’s fingers on screen. This means you do not have to install additional software on the phone, which might cause performance problems. In this case, you would use a document camera attached to the table, or a portable rig that holds both the phone and a camera and is attached to a nearby PC. The video will include hesitations and hovering gestures, which are useful for understanding user behavior, but fingers might occlude the screen. In addition, rigs may interfere with natural usage of the mobile phone, as participants must hold the rig as well as the phone.

- Observer viewing screen: This is needed if there are observers. The venue might have screen sharing set up; if not, you will have to bring your own hardware and software. This could be an external monitor and extension cables to connect to a laptop in the interview room. It could also be screen sharing software, for example, join.me.

- Capturing notes: You will need a method to capture notes. Even if you are screen recording, notes will help you to review the recordings more efficiently, and remind you about parts of the recording you wanted to pay special attention to. One method is using a tablet or laptop and spreadsheet. Typing is fast and the electronic notes are easy to put together after the tests. An alternative is paper and pencil. The benefit of this is that it is least disruptive to the participant. However, these notes must be captured electronically.

- Camera for participant face: Capturing the participant’s face is not crucial. However, it provides good insight into their feelings about tasks and questions. If you don’t record the participant’s face, you will only have tone of voice and the notes that were taken to remind you. Possible methods are using a webcam attached to the computer doing screen recording, or using inbuilt software such as Hangouts, Skype, or FaceTime for Apple devices.

- Microphone: Often sound quality is not great on screen capturing software, because of feedback from computer equipment. Using an external microphone improves the quality of sound.

- Wireless router: A portable wireless router in case of internet problems (if you are using the internet).

- Extra extension cables and chargers for all devices.

You will also need to make sure that you have multiple copies of all documents needed for the testing. These might include:

- Consent form: When you are testing people, they typically need to give their permission to be tested. You also typically need proof that the incentive has been received by the participant. These are usually combined into a form that the participant signs to give their permission and acknowledge receipt of the incentive.

- Non-disclosure agreement (NDA): Many businesses require test participants to sign NDAs before viewing the prototype. This must be signed before the test begins.

- Test materials: Any documents that provide details to the participants for the test.

- Checklists: It is worth printing out your checklists for things to do and equipment, so that you can check them off as you complete actions, and be sure that you have done everything by the time it needs to be done.

The following figure shows a basic sample checklist for planning a usability test. For a more detailed checklist, add in timing and break the tasks down further. These refinements will depend on the specific usability test. Where you are uncertain about how long something will take, overestimate. Remember that once you have fixed the day, everything must be ready by then.

Conducting usability tests

On the day(s) of the usability test, if you have planned properly, all you should have to worry about are the tests themselves, and interacting with the participants. Here is a list of things to double-check on the day of each test:

- Before the first test:

  - Set up and check equipment and rooms.

  - Have a list of participants and their order.

  - Make sure there are refreshments for participants and observers.

  - Make sure you have a receptionist to welcome participants.

  - Make sure that the prototype is installed or the website is accessible via the internet and working.

  - Test all equipment, for example, recording software, screen sharing, and audio in the observation room.

  - Turn off anything on the test computer or device that might interfere with the test, for example, email, instant messaging, virus scans, and so on. Create bookmarks for any web pages you need to open.

- Before each test:

  - Have the script ready to capture notes from a new participant.

  - Have the screen recorder ready.

  - Have the browser open in a neutral position, for example, Google search.

  - Have the forms to be signed and the incentive ready.

  - Start screen sharing.

  - Reload sample data if necessary, and clear the browser history from the last test.

- During each test:

  - Follow the script, including when the participant must sign forms and receive the incentive.

  - Press record on the screen recorder.

  - Give the microphone to the participant if appropriate.

- After each test:

  - Stop recording and save the video.

  - Save the script.

  - End screen sharing.

  - Note extra details that you did not have time for during the session.

Once you have all the details organized, the test session is in the hands of the facilitator.

Best practices for facilitating usability sessions

The facilitator should be welcoming and friendly, but relatively ordinary and not overly talkative. The participant and website should be the focus of the interview and test, not the facilitator. To create rapport with the participant, the facilitator should be an ally. A good way to do this is to make light of the situation and reassure participants that their experiences in the test will be helpful. Another good technique is to ask questions more like an apprentice than an expert, so that the participant answers your questions, for example: Can you tell me more about how this works? and What happens next?.

Since you want participants to feel as natural and comfortable as possible in their interactions, the facilitator should foster natural exploration and help satisfy participant curiosity as much as possible. However, they need to remain aware of the script and goals of the test, so that the participant covers what is needed.

Participants often struggle to talk aloud. They forget to do so while doing tasks. Therefore, the facilitator often needs to nudge participants to talk aloud or for information. Here are some useful questions or comments:

- What are you thinking? What do you think about that?

- Describe the steps you’re doing here.

- What’s going on here?

- What do you think will happen next?

- Is that what you expected to happen?

- Can you show me how you would do that?

When you are asking questions, you want to be sure that you help participants to be as honest and accurate as possible. We’ve previously stated that people are notoriously bad at projecting what they will do or remembering what they did. This does not mean that you cannot ask about what people do. You must just be careful about how you ask and always try to keep it concrete. The priorities in asking questions are:

- Now: Participants talking aloud about what they are doing and thinking now.

- Retrospective: Participants talking about what they have done or thought in the past.

- Never prospective: Never ask participants about what they would do in the future. Rather ask about what they have done in similar situations in the past.

Here are some other techniques for ensuring you get the best out of the participants, and do not lead them too much yourself:

- Ask probing questions such as why and how to get to the real reasons for actions. Do not assume you know what participants are going to say. Check or paraphrase if you are not sure what they said or why they said it. For example, So are you saying the text on the left is hard to read? or You’re not sure about what? or That picture is weird? How?

- Do not ask leading questions, as people will give positive answers to please you. For example, do not say Does that make sense?, Do you like that? or Was that easy? Rather say Can you explain how this works? What do you think of that? and How did you find doing that task?

- Do not tell participants what they are looking at. You are trying to find out what they think. For example, instead of Here is the product page, say Tell me what you see here, or Tell me what this page is about.

- Return the question to the participant if they ask what to do or what will happen: I can’t tell you because we need to find out what you would do if you were alone at home. What would you normally do? or What do you think will happen?

- Ask one question at a time, and make time for silence. Don’t overload the participants. Give them a chance to reply. People will often try to fill the silence, so you may get more responses if you don’t rush to fill it yourself.

- Encourage action, but do not tell them what to do. For example, Give it a try.

- Use acknowledgment tokens to encourage action and talking aloud. For example, OK, uh huh, mm hmm.

A good facilitator makes participants feel comfortable and guides them through the tasks without leading while observing carefully and asking questions where necessary. It takes practice to accomplish this well.

The facilitator (and the notetaker if there is one) must also think about the analysis that will be done. Analysis is time-consuming; think about what can be done beforehand to make it easier. Here are some pointers:

- Taking notes on a common spreadsheet with a script is helpful because the results are ready to be combined easily.

- If you are gathering quantitative results, such as timing tasks or counting steps to accomplish activities, prepare spaces to note these on the spreadsheet before the test, so all the numbers are easily accessible afterward.

- If you are rating task completion, then note a preliminary rating as the task is completed. This can be as simple as selecting appropriate cell colors beforehand and coloring each cell as the task is completed. This may change during analysis, but you will have initial guidance.

- Listen for useful and illustrative quotes or video segment opportunities. Note down the quote or roughly note the timestamp, so you know where to look in the recording.

- In general, have a timer at hand, and note the timestamp of any important moments in each test. This will make reviewing the recordings easier and less time-consuming.
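Once quantitative results are captured consistently, summarizing them takes very little effort. The following is a minimal sketch, assuming each observation is recorded as a participant, a task, whether the task was completed, and the time taken in seconds. The data and the `summarize` helper are hypothetical.

```python
from statistics import mean

# Hypothetical results: (participant, task, completed, seconds taken).
results = [
    ("P1", "Task 1", True, 42),
    ("P2", "Task 1", False, 95),
    ("P3", "Task 1", True, 58),
    ("P1", "Task 2", True, 120),
    ("P2", "Task 2", True, 100),
    ("P3", "Task 2", True, 110),
]


def summarize(results):
    """Return the completion rate and mean time for each task."""
    by_task = {}
    for _, task, completed, seconds in results:
        by_task.setdefault(task, []).append((completed, seconds))
    summary = {}
    for task, rows in by_task.items():
        summary[task] = {
            # True counts as 1 and False as 0 when summed.
            "completion_rate": sum(done for done, _ in rows) / len(rows),
            "mean_seconds": mean(secs for _, secs in rows),
        }
    return summary


summary = summarize(results)
for task, stats in summary.items():
    print(f"{task}: {stats['completion_rate']:.0%} completed, "
          f"{stats['mean_seconds']:.0f} s on average")
```

With only a handful of participants, numbers like these are indicative rather than statistically robust, so read them alongside the notes and recordings.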

We examined how to plan, organize, and conduct a usability test. As part of this, we have discussed how to design a test with goals, tasks, metrics, and questions using the definition of usability.

If you liked this article, be sure to check out this book UX for the Web to make a web app fully accessible from a development and design perspective.
